Most security teams can now answer: “Where does our sensitive data live?”

Far fewer can confidently answer: “Who can access it right now, and how will that change in the next hour?”

That gap between knowing where your data is and knowing who can reach it under what conditions is what cloud data access governance is designed to close. And in 2026, with cloud data estates sprawling across dozens of accounts, AI agents processing sensitive workloads, and identity-based attacks accounting for the majority of cloud breaches, that gap is no longer a theoretical risk. It’s an operational emergency waiting to happen.

This guide is written for security architects, cloud security engineers, and data security leaders who already understand IAM, DSPM, and basic cloud security controls—and are ready for the practical, implementation-level guidance on how to make data access governance actually work across complex, multi-cloud environments.

Why Managing Cloud Data Access Is So Hard

Cloud data access feels like a solvable problem. You have IAM. You have policies. You have role assignments. And yet, organizations consistently find themselves exposed—not because they lack tools, but because those tools were never designed to answer data-level access questions at the scale and speed cloud environments demand.

Here’s what’s actually driving the challenge:

Identity Sprawl at Machine Scale

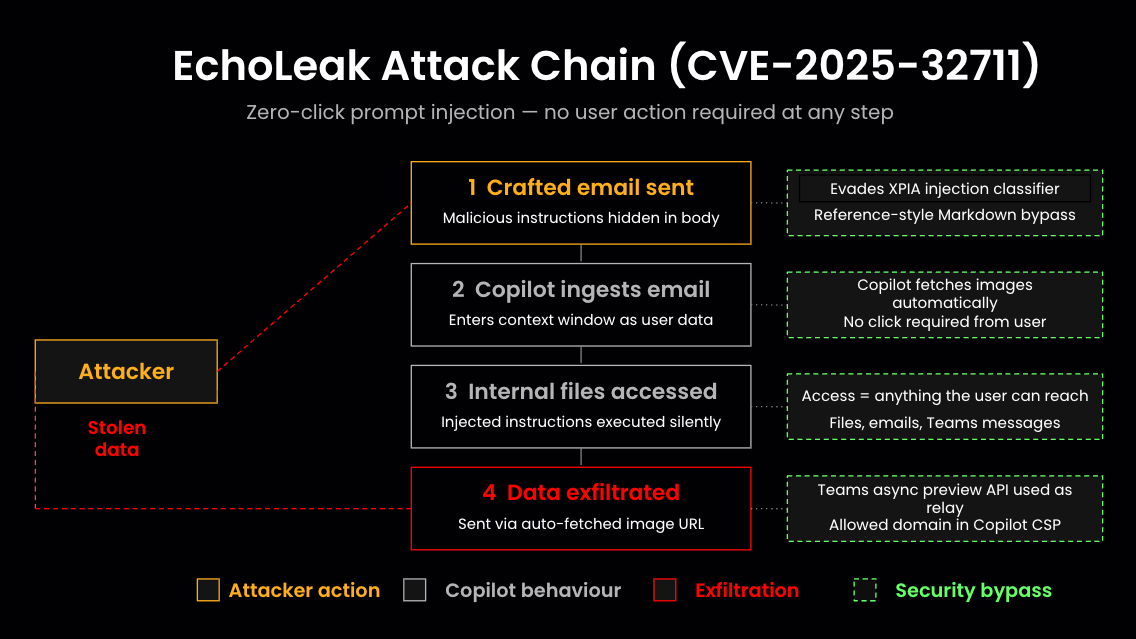

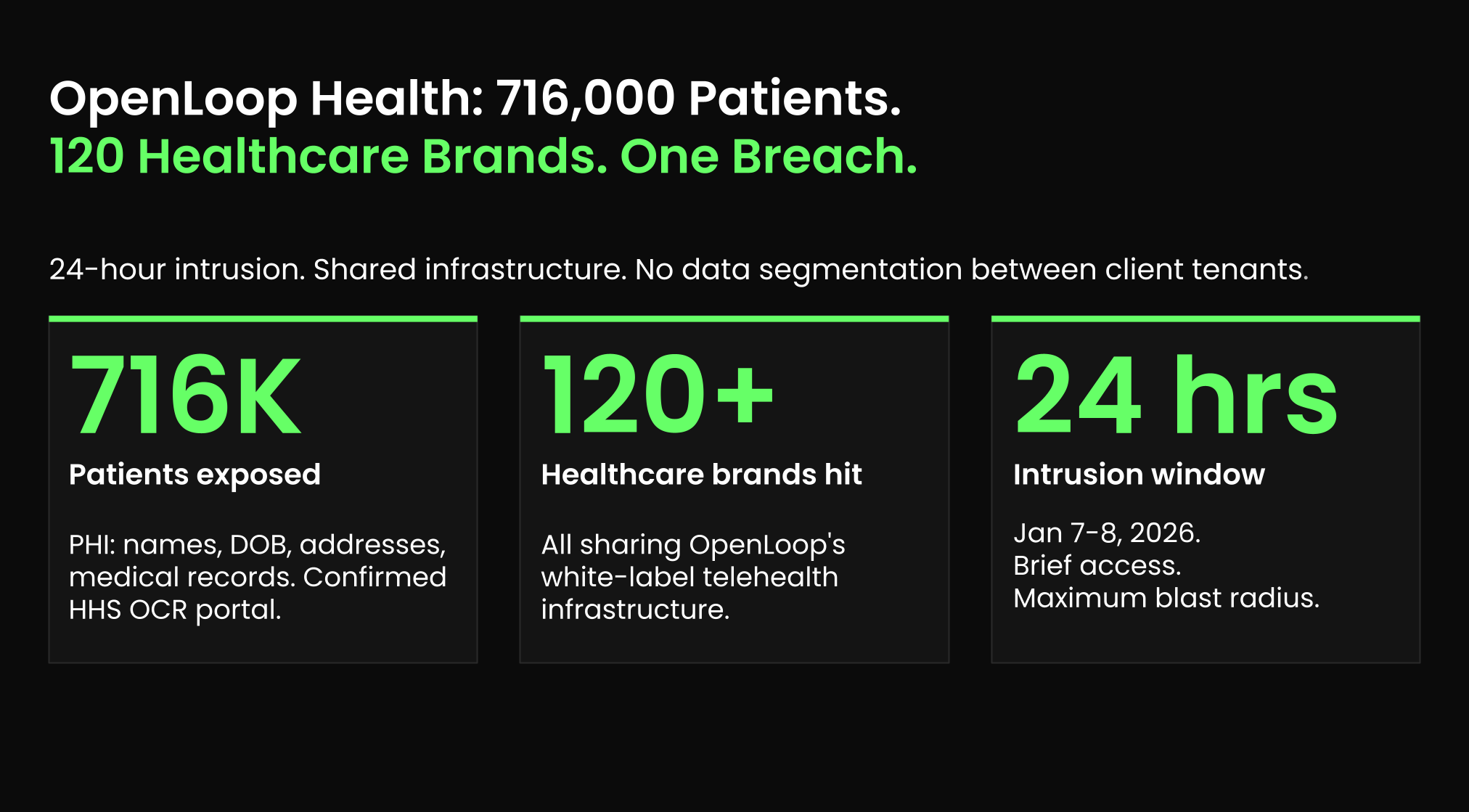

Modern cloud environments don’t just have thousands of human users. They also have tens of thousands of non-human identities: service accounts, Lambda functions, CI/CD pipelines, third-party integrations, and, increasingly, AI agents and copilots.

Every one of these identities carries some level of data entitlement. Most of them carry far more access than they need.

Shadow Data and ROT Expanding the Attack Surface

Sensitive data doesn’t stay where you put it. It moves. It gets copied into test environments, replicated into analytics pipelines, exported to SaaS tools, and forgotten in deprecated storage buckets.

This shadow data—and the redundant, obsolete, and trivial (ROT) data that piles up over time—silently expands your data attack surface without triggering a single IAM alert.

IAM Operates at the Wrong Layer

IAM is foundational and non-negotiable. But IAM was built to manage access to resources and services—not to specific tables, columns, files, or records within those resources.

Granting a role access to a BigQuery dataset doesn’t tell you which tables contain PII, which columns are restricted under GDPR, or whether that role was ever actually used. IAM gives you the plumbing; it doesn’t tell you what flows through the pipes.

The Authorization Gap

This is the core problem cloud data access governance is built to solve.

The authorization gap is the difference between what users, applications, and AI systems can access and what they should access under least-privilege and zero trust principles.

The gap grows every time data is copied, a role is inherited, a permissions boundary drifts, or an AI agent is granted broad read access to accelerate onboarding. Without a data-first governance layer that continuously maps access to sensitivity, the gap widens invisibly—until a breach makes it visible.
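As a rough illustration, the authorization gap can be modeled as a set difference between what is granted and what is required. The sketch below is a minimal model, not a product implementation; the `Grant` type and the example identities and resources are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    identity: str  # user, service account, or AI agent (hypothetical names)
    resource: str  # data store, table, or column
    action: str    # e.g. "read" or "write"


def authorization_gap(granted: set[Grant], required: set[Grant]) -> set[Grant]:
    """Return grants that exist today but are not needed under least privilege."""
    return granted - required


granted = {
    Grant("svc-etl", "warehouse.customers", "read"),
    Grant("svc-etl", "warehouse.customers", "write"),
    Grant("ai-agent", "warehouse.hr_records", "read"),
}
required = {Grant("svc-etl", "warehouse.customers", "read")}

# Everything left over is excess access that a review should challenge.
excess = authorization_gap(granted, required)
```

In practice the hard part is producing the `required` set, which is why the lifecycle below leans on classification and usage data rather than static role definitions.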

Foundational Concepts: IAM, DSPM, and Data Access Governance

Before outlining a practical lifecycle, it’s worth defining the three pillars that effective cloud data access governance rests on—and how they interact.

Identity and Access Management (IAM)

IAM is the authentication and authorization backbone of any cloud security architecture. Whether implemented through AWS IAM, Microsoft Entra ID, Google Cloud IAM, or enterprise identity platforms like Okta and CyberArk, IAM handles who can authenticate, what permissions they carry, and how access is administered and audited.

Best-in-class IAM implementations incorporate SSO, MFA, Zero Trust network segmentation, and automated access reviews. These are necessary conditions for cloud data security—but not sufficient ones.

Data Security Posture Management (DSPM)

DSPM continuously discovers and classifies sensitive data across cloud infrastructure, SaaS platforms, data warehouses, and on-premises systems.

It evaluates each data store’s posture:

- Is the data encrypted at rest and in transit?

- Is logging enabled?

- Is the bucket publicly accessible?

- Is PII stored in a geography that violates data residency requirements?

The output is a continuously updated data inventory with risk scores—giving security teams the data-aware context that IAM alone cannot provide.
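The posture questions above map naturally onto simple per-store checks. The following sketch assumes a hypothetical `DataStore` record and a hypothetical residency policy (`ALLOWED_PII_REGIONS`); a real DSPM tool would populate these fields from cloud provider APIs.

```python
from dataclasses import dataclass

# Hypothetical residency policy: regions where PII may be stored.
ALLOWED_PII_REGIONS = {"eu-west-1", "eu-central-1"}


@dataclass
class DataStore:
    name: str
    encrypted_at_rest: bool
    encrypted_in_transit: bool
    logging_enabled: bool
    publicly_accessible: bool
    region: str
    contains_pii: bool


def posture_findings(store: DataStore) -> list[str]:
    """Return a list of posture issues for one data store."""
    findings = []
    if not store.encrypted_at_rest:
        findings.append("unencrypted at rest")
    if not store.encrypted_in_transit:
        findings.append("unencrypted in transit")
    if not store.logging_enabled:
        findings.append("logging disabled")
    if store.publicly_accessible:
        findings.append("publicly accessible")
    if store.contains_pii and store.region not in ALLOWED_PII_REGIONS:
        findings.append("PII outside approved regions")
    return findings
```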

Data Access Governance (DAG)

DAG is the set of policies, processes, and enforcement controls that ensure only authorized identities (humans, applications, and AI agents) can access, modify, or distribute sensitive data, and only in ways that align with least-privilege and compliance requirements.

DAG is the bridge between IAM (which manages resource access) and DSPM (which understands data sensitivity and exposure). It uses DSPM’s classification context to answer the operational question: Given that this data store contains PHI regulated under HIPAA, who should be allowed to query it, under what conditions, and how do we enforce that continuously?

DSPM, DAG, and DDR Together

Together, DSPM, DAG, and Data Detection and Response (DDR) form a unified architecture for modern cloud data security:

| Layer | Function | Key Question Answered |
| --- | --- | --- |
| DSPM | Discover, classify, and evaluate posture of sensitive data | What sensitive data exists, where, and how exposed is it? |
| DAG | Govern and enforce least-privilege access to sensitive data | Who should have access, and are current permissions aligned? |
| DDR | Monitor runtime access and detect/respond to anomalous behavior | Is access being used as expected, and are there active threats? |

A Lifecycle for Managing Cloud Data Access

Effective cloud data access governance is not a one-time project. It’s a continuous lifecycle—and organizations that treat it as a periodic audit will perpetually find themselves behind.

Here is the six-stage lifecycle that closes the authorization gap at scale.

Stage 1: Discover and Classify Data

You cannot govern what you cannot see.

Automated, agentless discovery should scan all data stores across clouds (AWS, Azure, GCP), data warehouses (BigQuery, Snowflake, Redshift), managed databases, object storage (S3, GCS, Azure Blob), and SaaS platforms on a continuous basis. The goal is a complete, always-current data inventory—not a snapshot that’s stale the moment it’s taken.

Classification should go beyond pattern matching. Effective classification:

- Identifies sensitive data categories: PII, PHI, PCI card data, intellectual property, financial records

- Assigns business context: department ownership, environment (prod vs. dev), geography, regulatory domain

- Surfaces shadow data: sensitive files in forgotten buckets, test databases with production data, unsanctioned SaaS exports
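One simple way classification can go beyond pattern matching is to validate candidates after the regex hits. The sketch below, offered as an illustration only, pairs a loose card-number pattern with a Luhn checksum so that random 16-digit strings are not flagged as PCI data. The pattern and function names are assumptions, not any vendor's classifier.

```python
import re

# Loose pattern: 13 to 19 digits, optionally separated by spaces or hyphens.
CARD_PATTERN = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")


def luhn_valid(candidate: str) -> bool:
    """Check the Luhn checksum used by payment card numbers."""
    digits = [int(ch) for ch in candidate if ch.isdigit()]
    total = 0
    for index, digit in enumerate(reversed(digits)):
        if index % 2 == 1:  # double every second digit from the right
            digit *= 2
            if digit > 9:
                digit -= 9
        total += digit
    return len(digits) >= 13 and total % 10 == 0


def find_card_numbers(text: str) -> list[str]:
    """Return pattern matches that also pass checksum validation."""
    return [m.group() for m in CARD_PATTERN.finditer(text) if luhn_valid(m.group())]
```

Checksum validation is only one example of added context; ownership, environment, and regulatory domain still have to come from metadata rather than content.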

Key metric: Sentra has processed 9 PB of data in under 72 hours and scanned 100 PB environments for approximately $40,000—demonstrating that comprehensive in-environment discovery is operationally feasible, even at hyperscale.

Stage 2: Map Identities, Access Paths, and Posture

With a complete data inventory in hand, the next step is building a data-access graph: a normalized map of which identities (users, groups, roles, service accounts, AI agents) have what level of access to which sensitive data stores, through which paths.

This means normalizing entitlements across:

- Cloud IAM roles and policies (AWS, Azure, GCP)

- Data platform permissions (BigQuery datasets, Snowflake roles, Redshift schemas)

- SaaS app roles (Salesforce profiles, M365 sharing settings, Workday security groups)

- Non-human identities: service accounts, workload identities, OAuth tokens, AI agent credentials

Simultaneously, evaluate posture for each sensitive store: encryption state, audit logging status, backup coverage, external exposure (public endpoints, cross-account sharing), and regulatory boundary alignment.
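A data-access graph of this kind can be sketched as a directed graph whose edges run from identities through groups and roles to data stores. The example below is a minimal model with hypothetical node names and a `store:` prefix convention chosen for illustration; it enumerates every path by which an identity reaches a store.

```python
from collections import defaultdict

# Hypothetical edges: identity -> group -> role -> data store.
EDGES = [
    ("alice", "group:analysts"),
    ("group:analysts", "role:warehouse_reader"),
    ("role:warehouse_reader", "store:customers_pii"),
    ("svc-report", "role:warehouse_reader"),
    ("ai-agent", "role:hr_reader"),
    ("role:hr_reader", "store:hr_records"),
]


def build_graph(edge_list):
    """Build an adjacency list from (source, target) pairs."""
    graph = defaultdict(list)
    for source, target in edge_list:
        graph[source].append(target)
    return graph


def access_paths(graph, identity):
    """Return every path from an identity to a node prefixed with 'store:'."""
    paths, stack = [], [[identity]]
    while stack:
        path = stack.pop()
        node = path[-1]
        if node.startswith("store:"):
            paths.append(path)
            continue
        for neighbor in graph.get(node, []):
            if neighbor not in path:  # guard against cyclic grants
                stack.append(path + [neighbor])
    return paths


graph = build_graph(EDGES)
```

Keeping the full path, not just the endpoint, matters for remediation: it shows which group membership or role grant to remove.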

Stage 3: Prioritize Risks and Identify Toxic Combinations

Not all access misconfigurations are equal. A security group with overly broad access to a low-sensitivity analytics table is a low-priority finding. The same group with access to an unencrypted S3 bucket containing 50 million Social Security Numbers is a critical incident waiting to happen.

Toxic combinations—the highest-priority risk patterns in data access governance—emerge from the intersection of:

| Risk Factor | Example |
| --- | --- |
| High data sensitivity | PCI cardholder data, PHI, employee SSNs |
| Broad access scope | All-users groups, wildcard IAM policies, inherited super-roles |
| External exposure | Publicly accessible buckets, externally shared folders |
| Anomalous behavior signals | Bulk downloads, after-hours queries, unusual geographic access |
| AI agent over-reach | Copilot with access to unmasked HR records or financial models |

DSPM risk scores combined with DAG access analytics should surface these combinations automatically, prioritized by potential blast radius.
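To make the idea of intersecting risk factors concrete, here is a toy scoring sketch. The sensitivity scale, the multiplicative weighting, and the toxic-combination rule are all assumptions made for illustration; real platforms use their own models.

```python
from dataclasses import dataclass


@dataclass
class AccessFinding:
    store: str
    sensitivity: int          # hypothetical scale: 0 (public) to 3 (regulated)
    broad_access: bool
    externally_exposed: bool
    anomalous_activity: bool
    ai_agent_access: bool


def risk_score(finding: AccessFinding) -> int:
    """Sensitivity multiplied by the number of amplifying factors present."""
    amplifiers = sum([
        finding.broad_access,
        finding.externally_exposed,
        finding.anomalous_activity,
        finding.ai_agent_access,
    ])
    return finding.sensitivity * (1 + amplifiers)


def is_toxic(finding: AccessFinding) -> bool:
    """Assumed rule: high sensitivity plus broad access or external exposure."""
    return finding.sensitivity >= 2 and (
        finding.broad_access or finding.externally_exposed
    )


def prioritize(findings: list[AccessFinding]) -> list[AccessFinding]:
    """Order findings from highest to lowest score."""
    return sorted(findings, key=risk_score, reverse=True)
```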

Stage 4: Enforce Least Privilege and Remediate Access

This is where governance moves from analysis to action.

Remediation at the data layer involves:

- Removing over-broad group memberships: Eliminating all-users, domain-wide, or project-level access grants where dataset- or table-level access is appropriate

- Cleaning up dormant accounts and stale keys: Revoking access for users, service accounts, or API keys that haven’t been used in 30, 60, or 90 days

- Fixing misaligned shares and labels: Correcting externally shared folders containing sensitive data; applying classification labels that trigger downstream DLP and access policies

- Eliminating shadow and ROT data: Deleting or archiving sensitive data that has no legitimate active use. This reduces attack surface and, in Sentra’s experience, cuts cloud storage costs by approximately 20% for typical customers

Effective remediation requires tight integration between the DAG layer and enforcement points: IAM platforms, cloud-native DLP tools, data warehouse access controls, and masking/row-level security policies.
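The dormant-credential cleanup described above reduces to comparing last-used timestamps against an idle threshold. This sketch assumes the timestamps have already been collected into a dictionary (a hypothetical input shape); credentials that were never used are treated as dormant.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional


def dormant_credentials(
    last_used: dict[str, Optional[datetime]],
    now: datetime,
    max_idle_days: int = 90,
) -> list[str]:
    """Return credential IDs idle longer than the threshold, or never used."""
    cutoff = now - timedelta(days=max_idle_days)
    return sorted(
        credential
        for credential, used_at in last_used.items()
        if used_at is None or used_at < cutoff
    )


now = datetime(2026, 1, 1, tzinfo=timezone.utc)
inventory = {
    "key-a": now - timedelta(days=10),
    "key-b": now - timedelta(days=120),
    "key-c": None,  # created but never used
}
stale = dormant_credentials(inventory, now)
```

The output is a candidate list for revocation, not a revocation action; whether to revoke automatically or route through an owner is a policy decision.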

Stage 5: Monitor Access and Respond in Real Time

Governance doesn’t end at policy enforcement. Identities evolve, data moves, and attackers adapt.

Data Detection and Response (DDR) provides the runtime visibility layer that DSPM and DAG cannot supply on their own. DDR monitors data access events continuously:

- Queries executed against sensitive tables in BigQuery, Snowflake, or Redshift

- File reads and downloads from S3, GCS, or SharePoint

- API calls accessing sensitive records in SaaS applications

- Bulk exports, unusual query volumes, or access from anomalous geolocations

When suspicious patterns emerge—an analyst querying 10x their normal data volume, a service account accessing tables outside its defined scope, or an AI agent traversing ACLs it was never meant to reach—DDR triggers guided or automated responses: access suspension, alert escalation, or automated IAM policy revocation.
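Two of the signals mentioned above, volume spikes and out-of-scope table access, can be sketched with very little logic. The 10x multiplier mirrors the example in the text; the function names and input shapes are hypothetical, and production DDR systems would use richer baselines.

```python
from statistics import mean


def is_volume_anomaly(
    history_bytes: list[int], current_bytes: int, multiplier: float = 10.0
) -> bool:
    """Flag access that exceeds a multiple of the identity's average volume."""
    if not history_bytes:
        return False  # no baseline yet, so nothing to compare against
    return current_bytes > multiplier * mean(history_bytes)


def out_of_scope_tables(accessed: set[str], allowed: set[str]) -> set[str]:
    """Return tables an identity touched that fall outside its defined scope."""
    return accessed - allowed
```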

Stage 6: Review, Audit, and Iterate

The final stage closes the loop. Periodic access reviews—grounded in actual usage data rather than static role assignments—are how organizations progressively tighten their least-privilege posture over time.

Effective access reviews should:

- Use behavioral data (who actually accessed what, when) to challenge standing permissions

- Generate audit-ready evidence for PCI DSS 4.0 log review requirements, GDPR accountability obligations, HIPAA access control audits, and SOC 2 Type II audits

- Feed findings back into Stage 4 remediation workflows to create a continuous improvement cycle

Implementing Least-Privilege Access in Practice: Platform Patterns

The lifecycle above describes what to do. This section covers how to implement least-privilege access in the cloud data platforms most security architects deal with day to day.

Designing Roles and Scopes

The most common mistake in cloud data access design is defaulting to project- or account-level roles because they’re easier to administer. Project-wide BigQuery Data Viewer access to all datasets in a GCP project—granted because a data scientist needed access to one analytics table—is a textbook authorization gap.

Guiding principles for role design:

- Grant at the narrowest scope that works: dataset, table, or column level, rather than project level

- Create purpose-built data roles rather than repurposing infrastructure roles (e.g., a dedicated FINANCE_ANALYST_RO Snowflake role, not a shared SYSADMIN-derived role)

- Separate ingestion/ETL roles from read/analytics roles; separate production roles from sandbox roles

- Never use account owner or project admin roles for routine data operations

Object Storage: S3 and GCS Patterns

| Pattern | Implementation | What It Prevents |
| --- | --- | --- |
| Dedicated storage integration roles | IAM roles with scoped STORAGE_ALLOWED_LOCATIONS (Snowflake external stages) | Broad bucket access from warehouse integrations |
| Granular S3 bucket policies | s3:GetObject scoped to specific prefixes, not s3:* on arn:aws:s3:::* | Wildcard policies exposing entire accounts |
| Block public access by default | S3 Block Public Access settings enforced at account level | Accidental public bucket exposure |
| No hard-coded credentials | IAM roles and instance profiles; no long-lived access keys in application code | Credential exfiltration from code repositories |
| Object-level logging | S3 Server Access Logging or CloudTrail data events enabled on sensitive buckets | Blind spots in DDR and audit trails |

Common pitfalls: Overly broad ETL roles that carry s3:* access across all buckets; shared Glue or Spark job roles that accumulate permissions over time; lifecycle policies that fail to delete sensitive data in staging prefixes.
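Wildcard grants like those described above can be caught with a simple lint over the policy document. The sketch below checks an AWS-style JSON policy (using the standard `Statement`, `Effect`, `Action`, and `Resource` elements) for the two specific wildcards named in this section; the function names and the example bucket are hypothetical, and the check is intentionally narrow.

```python
def as_list(value):
    """IAM policy fields may be a single value or a list; normalize to a list."""
    return value if isinstance(value, list) else [value]


def wildcard_findings(policy: dict) -> list[str]:
    """Report Allow statements that use s3:* or all-bucket resource wildcards."""
    findings = []
    for index, statement in enumerate(as_list(policy.get("Statement", []))):
        if statement.get("Effect") != "Allow":
            continue
        actions = as_list(statement.get("Action", []))
        resources = as_list(statement.get("Resource", []))
        if any(action in ("*", "s3:*") for action in actions):
            findings.append(f"statement {index}: wildcard action")
        if any(resource in ("*", "arn:aws:s3:::*") for resource in resources):
            findings.append(f"statement {index}: wildcard resource")
    return findings


# Prefix-scoped policy for a hypothetical bucket: read-only on one prefix.
scoped_policy = {
    "Version": "2012-10-17",
    "Statement": [{
        "Effect": "Allow",
        "Action": "s3:GetObject",
        "Resource": "arn:aws:s3:::example-bucket/reports/*",
    }],
}
```

This does not evaluate conditions, `NotAction`, or partial wildcards such as `s3:Get*`; it is a starting point for the kind of check a DAG layer would run continuously.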

Data Warehouse Patterns: BigQuery

BigQuery’s IAM model is powerful but frequently misconfigured at scale.

Recommended BigQuery access architecture:

| Access Type | Recommended Scope | IAM Role |
| --- | --- | --- |
| Analysts (read-only) | Dataset level | roles/bigquery.dataViewer at dataset, not project |
| Engineers (read/write) | Dataset or table level | roles/bigquery.dataEditor scoped to target dataset |
| Pipelines/ETL | Dataset or table level | Custom role with minimum required permissions |
| Admins | Project level, with audit | roles/bigquery.admin restricted to named individuals |

Advanced controls to implement:

- Column-level security: BigQuery policy tags enable column masking and fine-grained access by data classification—PII columns tagged and masked for default consumers, accessible in raw form only through approved roles

- Row-level security: Row access policies (filter expressions) limit which records specific identities can query within a shared table

- Authorized views: Expose constrained projections of sensitive tables without granting underlying table access
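As one illustration of the row-level control above, the sketch below generates a BigQuery `CREATE ROW ACCESS POLICY` statement from Python. The helper name, the identifier validation rule, and the example policy, table, group, and filter are all hypothetical; the filter expression is passed through as-is, so it should only come from trusted configuration.

```python
import re

# Conservative identifier check for policy, dataset, and table names.
_IDENTIFIER = re.compile(r"^[A-Za-z_][A-Za-z0-9_]*$")


def row_access_policy_ddl(
    policy: str, dataset: str, table: str, grantees: list[str], filter_expr: str
) -> str:
    """Build a CREATE ROW ACCESS POLICY statement for review or execution."""
    for name in (policy, dataset, table):
        if not _IDENTIFIER.match(name):
            raise ValueError(f"unsafe identifier: {name!r}")
    for grantee in grantees:
        if "'" in grantee or "\\" in grantee:
            raise ValueError(f"unsafe grantee: {grantee!r}")
    grantee_list = ", ".join(f"'{grantee}'" for grantee in grantees)
    return (
        f"CREATE ROW ACCESS POLICY {policy}\n"
        f"ON {dataset}.{table}\n"
        f"GRANT TO ({grantee_list})\n"
        f"FILTER USING ({filter_expr});"
    )


ddl = row_access_policy_ddl(
    "emea_only", "sales", "orders",
    ["group:emea-analysts@example.com"], "region = 'EMEA'",
)
```

Generating the statement rather than hand-writing it keeps row policies reviewable in version control alongside the classification that justifies them.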

Data Warehouse Patterns: Snowflake

Snowflake’s role hierarchy is a common source of “access debt”—the accumulated, under-managed entitlements that make toxic combinations difficult to detect manually.

Snowflake access hygiene framework:

| Issue | Symptom | Remediation |
| --- | --- | --- |
| Super-roles | Single role with access to all databases and schemas | Decompose into environment- and domain-specific roles |
| Dormant roles | Roles granted but unused for 90+ days | Revoke and require re-justification |
| Role hierarchy sprawl | Inherited permissions cascade unexpectedly through GRANT ROLE TO ROLE | Map full effective permissions; audit inheritance chains |
| Shared ETL credentials | One SYSADMIN-level user running all pipelines | Dedicated service users per pipeline with scoped permissions |
| Production data in dev | Dev databases containing real customer records | DSPM discovery to identify and quarantine; masking in non-prod |

DSPM platforms like Sentra can identify toxic combinations in Snowflake—for example, a broadly-granted analyst role that, through role inheritance, carries access to unmasked PII tables in a production schema—and guide targeted remediation without requiring a full role architecture rebuild.
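The inheritance problem can be sketched as a recursive walk over role grants. In this model, `role_grants` maps a role to the roles that have been granted to it (so it inherits their privileges), and `direct_privileges` holds each role's own grants. Both structures and all role names are hypothetical; in a real account they would be derived from the warehouse's grant metadata.

```python
def effective_privileges(role, role_grants, direct_privileges, _seen=None):
    """Return a role's own privileges plus everything inherited from granted roles."""
    seen = _seen if _seen is not None else set()
    if role in seen:  # already visited; also protects against cyclic input
        return set()
    seen.add(role)
    privileges = set(direct_privileges.get(role, set()))
    for inherited_role in role_grants.get(role, []):
        privileges |= effective_privileges(
            inherited_role, role_grants, direct_privileges, seen
        )
    return privileges


# Hypothetical hierarchy: ANALYST inherits from REPORTING, which inherits PII_READER.
role_grants = {"ANALYST": ["REPORTING"], "REPORTING": ["PII_READER"]}
direct_privileges = {
    "ANALYST": {"SELECT on ANALYTICS.PUBLIC.*"},
    "REPORTING": {"SELECT on SALES.REPORTS.*"},
    "PII_READER": {"SELECT on PROD.CUSTOMERS.PII"},
}

analyst_access = effective_privileges("ANALYST", role_grants, direct_privileges)
```

Here a seemingly harmless analyst role ends up with production PII access two hops away, which is the pattern a manual review of direct grants would miss.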

Managed Databases and SaaS

For managed relational databases (Amazon RDS, Google Cloud SQL, Azure SQL):

- Maintain separate application users (minimal SELECT/INSERT/UPDATE on specific schemas) and analytics users (read-only, ideally pointing to read replicas)

- Avoid all-powerful shared users like root or master for routine operations

- Rotate credentials using secrets managers (AWS Secrets Manager, GCP Secret Manager, Azure Key Vault) rather than static passwords

For SaaS platforms (Salesforce, M365, Workday):

Application-native role management is necessary but insufficient. A DAG/DSPM layer that normalizes cross-platform access and correlates identity-to-data across SaaS apps provides the unified visibility that app-by-app administration cannot.

Governing AI and Copilot Data Access

No guide to cloud data access governance in 2026 is complete without addressing the category of identity that most organizations have not yet learned to treat as a security problem: AI agents and copilots.

AI systems—whether internal LLM deployments, third-party copilots integrated into SaaS workflows, or autonomous agents connected to data warehouses—operate as high-privilege data consumers. They query broadly. They often have access granted for convenience rather than least-privilege design. And unlike human users, their access behavior is harder to baseline and anomaly-detect without purpose-built tooling.

The AI data access problem in practice:

- Copilots integrated into M365 or Salesforce may inherit user-level permissions—including access to sensitive files, emails, and records the user has accumulated over years

- AI agents connected to BigQuery or Snowflake for RAG pipelines may have schema-wide SELECT permissions intended for development that were never scoped down before production deployment

- AI systems that generate code or SQL may expose schema information as part of their normal operation, even without directly accessing data records

Governing AI identities requires the same lifecycle applied to human identities:

- Inventory: Discover all AI agents and copilot integrations with data access—including shadow AI deployments

- Classify: Map which sensitive datasets each AI agent can reach, with what level of access, through which credentials

- Constrain: Apply least-privilege access; use classification labels and policy tags to enforce data boundaries (e.g., AI agents cannot access raw PII, only masked or synthetic equivalents)

- Monitor: Apply DDR to AI access patterns; establish baselines and alert on deviations (bulk reads, unusual schema traversal, access to tables outside defined scope)

- Govern: Treat AI agent access provisioning and review with the same rigor as human privileged access—including JIT elevation for sensitive operations

Comparison: Key Approaches to Cloud Data Access Governance

| Approach | Strengths | Limitations |
| --- | --- | --- |
| IAM-only governance | Mature tooling; cloud-native integration; widely understood | No data-layer visibility; doesn't distinguish sensitive from non-sensitive data; authorization gap grows as data sprawls |
| DSPM without DAG | Excellent data discovery and risk visibility; surfaces exposure | Identifies problems but doesn't enforce access changes; no continuous remediation workflow |
| DAG without DSPM | Can enforce access policies and manage entitlements | Without data classification context, policy decisions lack sensitivity-aware prioritization |
| Manual access reviews | Meets minimum compliance bar; human judgment applied | Slow, resource-intensive, stale between cycles; can't keep pace with cloud environment velocity |
| DSPM + DAG + DDR (unified) | Continuous discovery, data-aware enforcement, runtime detection; closes the authorization gap end-to-end | Requires integrated platform or well-orchestrated toolchain; initial discovery and classification effort at deployment |

Just-Enough and Just-In-Time Access for Cloud Data

Standing privileges (long-lived, always-on access to sensitive data) are the single largest contributor to breach blast radius in cloud environments. When a privileged identity is compromised, standing access means the attacker inherits everything, immediately. Just-enough access (JEA) and just-in-time (JIT) access are the practical alternatives.

Just-Enough Access (JEA)

JEA means users and systems receive access calibrated to their actual role requirements—not the role requirements of their team, their manager’s interpretation of their role, or what was convenient to grant six months ago.

In practice, JEA for data teams typically means:

- Default access to masked or aggregated versions of sensitive data (e.g., tokenized PII, row-sampled datasets, pre-aggregated analytics views)

- Explicit approval workflows for access to raw, highly sensitive data—triggered on demand, logged, and time-bounded

- Tag-driven masking enforced at the data warehouse layer (BigQuery policy tags, Snowflake tag-based masking policies) that dynamically masks data based on the requesting identity’s clearance level

This shifts the burden from “deny access by default and re-grant manually” to “grant minimal access by default and elevate via audited workflow”—which is operationally sustainable at scale.
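The masked-by-default behavior can be illustrated with a small sketch. Here, columns carry a classification tag, identities carry a set of clearances, and any tagged column the caller is not cleared for is returned masked. The tag names, clearance model, and masking format are assumptions for illustration; in a warehouse this logic lives in policy tags or masking policies rather than application code.

```python
def mask_value(value: str, visible: int = 4) -> str:
    """Replace all but the last few characters with asterisks."""
    if len(value) <= visible:
        return "*" * len(value)
    return "*" * (len(value) - visible) + value[-visible:]


def read_column(row: dict, column: str, column_tags: dict, clearances: set) -> str:
    """Return the raw value only if the caller is cleared for the column's tag."""
    tag = column_tags.get(column)
    value = str(row[column])
    if tag is None or tag in clearances:
        return value
    return mask_value(value)


column_tags = {"ssn": "pii_restricted"}  # hypothetical classification tags
row = {"name": "Ada", "ssn": "123-45-6789"}

default_view = read_column(row, "ssn", column_tags, clearances=set())
elevated_view = read_column(row, "ssn", column_tags, clearances={"pii_restricted"})
```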

Just-In-Time (JIT) Access

JIT goes further: rather than maintaining standing access (even minimal access), high-sensitivity operations trigger temporary elevation for a defined window, with automatic revocation when the window closes or the task completes.

JIT access workflow for cloud data:

1. Analyst requests access to production PII dataset for incident investigation

2. Request triggers approval workflow (manager + data owner)

3. Upon approval, JIT system grants time-bound IAM binding (e.g., 4-hour window)

4. Access is logged in full; queries are captured for audit trail

5. At window expiration, IAM binding is automatically revoked

6. DDR monitors for anomalous behavior during the access window
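The workflow above can be sketched as a small state model: a grant is created only when both approvals are present, it carries an expiry, and a periodic sweep drops expired grants. The class, function names, and approver labels are hypothetical, and the sketch stands in for whatever IAM binding the JIT system actually creates and removes.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

REQUIRED_APPROVERS = {"manager", "data_owner"}  # per step 2 of the workflow


@dataclass
class JitGrant:
    identity: str
    dataset: str
    expires_at: datetime

    def is_active(self, now: datetime) -> bool:
        return now < self.expires_at


def approve(identity: str, dataset: str, approvals: set[str],
            now: datetime, hours: int = 4) -> JitGrant:
    """Create a time-bound grant once every required approver has signed off."""
    missing = REQUIRED_APPROVERS - approvals
    if missing:
        raise PermissionError(f"missing approvals: {sorted(missing)}")
    return JitGrant(identity, dataset, now + timedelta(hours=hours))


def revoke_expired(grants: list[JitGrant], now: datetime) -> list[JitGrant]:
    """Keep only grants whose window is still open."""
    return [grant for grant in grants if grant.is_active(now)]


start = datetime(2026, 1, 1, 12, 0, tzinfo=timezone.utc)
grant = approve("analyst-1", "prod.customers_pii", {"manager", "data_owner"}, start)
```

Passing `now` explicitly rather than reading the clock inside the functions keeps expiry behavior easy to test and audit.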

Cloud-native JIT tooling includes GCP’s Privileged Access Manager (PAM), AWS IAM Identity Center with temporary permission sets, and enterprise PAM platforms like CyberArk and BeyondTrust. DSPM and DAG platforms provide the data sensitivity signals that make JIT decisions meaningful—the system knows whether the dataset being requested contains regulated PHI, its current exposure posture, and whether the requesting identity has a legitimate business justification based on their historical access patterns.

Zero Standing Privilege: The Target State

For the highest-sensitivity data environments—customer PII stores, financial records, regulated health data—the target architecture is zero standing privilege: no human identity holds persistent access to raw sensitive data. All access is JIT-elevated, time-bounded, and fully audited.

This is not achievable overnight for most organizations, but it is the direction of travel. The maturity model below provides a practical path.

Cloud Data Access Governance Maturity Model

| Maturity Level | Posture | Key Characteristics |
| --- | --- | --- |
| Level 1: Ad Hoc | Reactive | Access granted on request; no consistent least-privilege enforcement; no data classification; periodic manual audits |
| Level 2: Defined | Policy-driven | IAM roles defined by team/function; some data classification; access reviews on a fixed schedule (quarterly/annual) |
| Level 3: Managed | DSPM-informed | Continuous data discovery and classification; data-access graph mapped; toxic combinations identified; remediation tracked |
| Level 4: Governed | DAG-enforced | Least-privilege enforced at data layer; JEA implemented; access reviews driven by usage data; SaaS and AI covered |
| Level 5: Optimized | Continuous | Zero standing privilege for sensitive data; JIT elevation with automated provisioning/revocation; DDR with automated response; AI agents governed like human identities |

Frequently Asked Questions

What is cloud data access governance?

Cloud data access governance is the set of policies, processes, and technical controls that ensure only authorized identities—humans, applications, and AI agents—can access sensitive cloud data, under conditions aligned with least-privilege, zero trust, and compliance requirements. It bridges IAM (resource-level access control) and DSPM (data discovery and classification) to enforce data-first access management continuously.

How is data access governance different from IAM?

IAM manages access to cloud resources and services at the infrastructure layer. Data access governance operates at the data layer—it understands what data is sensitive, who should be allowed to access it based on that sensitivity, and whether current permissions are aligned with least-privilege requirements. IAM is a necessary component of DAG, but DAG extends IAM with data-awareness and continuous enforcement.

What is the authorization gap?

The authorization gap is the difference between what identities can access (based on their current permissions) and what they should access under least-privilege principles. The gap grows as data is copied, roles accumulate permissions over time, and access is granted for convenience without ongoing review. DSPM and DAG together are designed to continuously measure and close this gap.

What is DSPM and how does it relate to data access governance?

Data Security Posture Management (DSPM) continuously discovers and classifies sensitive data across cloud environments, evaluating each data store’s security posture—encryption, exposure, logging, regulatory alignment. DSPM provides the data intelligence layer that makes access governance decisions meaningful: rather than reviewing permissions in the abstract, DAG uses DSPM context to understand which sensitive data is behind which permissions, and prioritizes remediation accordingly.

What does least-privilege data access mean in practice?

Least-privilege data access means granting identities the minimum level of access—to the most narrowly scoped data resource—required to perform their legitimate function. In practice, this means dataset-level (not project-level) access in BigQuery, domain-specific roles (not inherited super-roles) in Snowflake, prefix-scoped (not bucket-wide) policies in S3, and time-bounded JIT elevation rather than standing access to highly sensitive data.

How should AI agents be governed in a data access governance framework?

AI agents and copilots should be treated as first-class identities in the data access governance lifecycle. This means inventorying all AI agents with data access, mapping which sensitive datasets they can reach, constraining access using classification labels and policy tags, monitoring their data access behavior with DDR, and applying JIT elevation patterns for AI-initiated access to high-sensitivity data—just as you would for privileged human users.

What is Just-In-Time (JIT) access for cloud data?

JIT access is a pattern where sensitive data access is granted temporarily—for a defined window tied to a specific task or incident—rather than maintained as a standing permission. JIT workflows typically require approval, generate a full audit trail, and automatically revoke access when the window closes. JIT is increasingly considered the target state for access to regulated and high-sensitivity data in zero trust architectures.

How do you implement data access governance across multiple clouds?

Multi-cloud data access governance requires a platform that can normalize entitlements across cloud-native IAM systems (AWS IAM, Microsoft Entra ID, GCP IAM), data warehouse permission models (BigQuery, Snowflake, Redshift), and SaaS applications into a unified data-access graph. This graph, enriched with DSPM classification context, enables consistent least-privilege enforcement and risk prioritization regardless of which cloud or platform the data lives in.

What compliance frameworks require cloud data access governance?

PCI DSS 4.0 requires access control reviews and log monitoring for cardholder data environments. GDPR mandates demonstrable controls over who can access personal data and the ability to audit access history. HIPAA requires access controls, audit controls, and integrity controls for PHI. SOC 2 Type II requires evidence of access control design and operating effectiveness. Cloud data access governance—particularly when backed by continuous DSPM and DAG—provides the evidentiary foundation for all of these frameworks.

Conclusion: Closing the Authorization Gap with a Data-First Approach

The trajectory of cloud data risk runs in one direction: more data, more identities, more movement, more exposure. IAM alone cannot keep pace. Periodic audits cannot keep pace. One-time DSPM scans cannot keep pace.

What can keep pace is a continuous, data-first governance lifecycle—one that starts with knowing where your sensitive data lives, extends to mapping every identity that can reach it, enforces least-privilege access at the data layer, and monitors runtime behavior to detect and respond to threats as they emerge.

The authorization gap is not a theoretical problem. It is the documented precondition for most major cloud data breaches. Closing it requires treating data access governance as an operational discipline, not a compliance checkbox—and building the architecture to support it at the speed and scale cloud environments demand.

For a deeper look at how Sentra’s DSPM, DAG, and DDR capabilities work together to close the authorization gap across cloud, SaaS, and AI environments, explore our Data Access Governance solution page, DSPM overview, and Data Detection and Response documentation.