Nikki Ralston

Nikki Ralston is Senior Product Marketing Manager at Sentra, with over 20 years of experience bringing cybersecurity innovations to global markets. She works at the intersection of product, sales, and markets, translating complex technical solutions into clear value. Nikki is passionate about connecting technology with users to solve hard problems.

Nikki Ralston's Data Security Posts

What Does AI Data Readiness Actually Look Like at Scale? Lyft, SoFi, and Expedia Will Demonstrate at Gartner SRM 2026

Most organizations I talk to have the same answer when I ask what their AI sees: "We're not entirely sure."

That's not a technology problem. It's a data governance problem - and it's the most consequential unsolved problem in enterprise security right now.

AI doesn't discriminate. Copilot, cloud-based agents, and internal LLMs can access everything their users can access and synthesize it in seconds. Years of overpermissioned, unclassified data that security teams have been meaning to clean up is now directly in the path of AI systems that move faster than any previous tool your organization has deployed.

The good news is some organizations have actually solved this. At the Gartner Security & Risk Management Summit this June, three of them are sharing exactly how.

The AI Data Readiness Problem Is Bigger Than Most Teams Realize

Here's what I see repeatedly across security programs. Organizations are deploying AI faster than they're governing the data underneath it.

The data estate didn't get cleaned up before Copilot rolled out. Shadow data stores weren't fully catalogued before the internal agent went live. Classification policies that worked fine for DLP weren't built to handle the access patterns that AI introduces.

When AI systems traverse a knowledge base, they don't stay in their lane - they surface whatever they can reach. If sensitive customer records, financial data, or PII are accessible to a user, they're accessible to that user's AI tools. And AI doesn't just retrieve; it synthesizes and presents, which means the exposure risk compounds.

Governing AI data readiness means knowing three things with accuracy and continuity:

What sensitive data exists and where it lives. Not from a six-month-old scan. From a continuously maintained inventory that reflects the environment as it actually is today.

Who and what can access it. Not just humans, but AI agents, service accounts, and automated pipelines. The access surface for AI is substantially wider than traditional access models account for.

Whether it's classified correctly before AI touches it. Classification is the foundation. It's what DLP runs on. It's what Copilot safety controls enforce against. If the labels are wrong or missing, every downstream control fails.
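To make "classify before AI touches it" concrete, imagine a gate in front of whatever indexes content for an AI assistant. The sketch below is purely hypothetical: the `Document` fields, the label names, and the rule that unlabeled content is held back are assumptions for illustration, not a Sentra or Copilot API.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical label names; real deployments use their own taxonomy.
SENSITIVE_LABELS = {"Confidential", "Highly Confidential", "PII", "PCI"}

@dataclass
class Document:
    path: str
    label: Optional[str]  # None means the document was never classified
    allowed_principals: set = field(default_factory=set)

def ready_for_ai(doc: Document, agent: str, agent_clearances: set) -> tuple[bool, str]:
    """Decide whether an AI agent may index or retrieve this document."""
    if doc.label is None:
        # Unclassified data is held back until classification catches up.
        return False, "unclassified"
    if agent not in doc.allowed_principals:
        return False, "agent has no access grant"
    if doc.label in SENSITIVE_LABELS and doc.label not in agent_clearances:
        return False, f"label {doc.label!r} not cleared for this agent"
    return True, "ok"

docs = [
    Document("wiki/onboarding.md", "General", {"copilot"}),
    Document("exports/customers.csv", "PII", {"copilot"}),
    Document("shares/legacy_dump.xlsx", None, {"copilot"}),
]
for d in docs:
    print(d.path, ready_for_ai(d, "copilot", agent_clearances=set()))
```

The design choice is to fail closed: a document with a missing label is treated as a readiness gap, not as "probably fine," so it stays out of the AI path until someone classifies it.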

Expedia operates 450 petabytes of cloud data. Lyft and SoFi each manage 70+ petabytes. These aren't edge cases — they're the environments where AI data readiness problems are biggest, and where solving them produces the most visible results.

What You'll Hear at Gartner SRM 2026

Sentra is at Gartner SRM all week — June 1 through 3 at National Harbor — and we've built the week around the practitioners who've done this work, not around slides about why it matters.

Here's what's on the calendar.

Wednesday, June 3: Gartner Solution Provider Session

From Data Risk to AI Ready: The Lyft & Expedia Playbook

11:15–11:45 AM | Gartner Solution Provider Stage | Maryland C Ballroom

Hear from Lyft's CISO and Expedia's team on how they tackled the AI data readiness challenge in petabyte-scale environments: classifying, governing, and securing the data sprawl already in the path of their AI initiatives. As AI proliferates across the enterprise, the data underneath it becomes the greatest unmanaged risk. In this session, they share the decisions, tradeoffs, and tools that built their foundation, and what it made possible at scale. Walk away knowing the data readiness essentials your AI initiative needs to succeed.

If you're at Gartner SRM, this is the one solution provider session you won’t want to miss on Wednesday.

Use the Gartner Agenda App to register for:

From Data Risk to AI Ready: The Lyft & Expedia Playbook

11:15–11:45 AM, Wednesday, June 3, 2026

Monday–Wednesday Morning Roundtables

Invite-Only Breakfast Sessions | Sentra Meeting Suite

These small-group sessions are the intimate version of the stage conversation — tailored to the specific attendee group, with real back-and-forth on what's working and what isn't.

Monday, June 1 | 8:00–8:45 AM (Breakfast)

Lyft CISO Chaim Sanders on how Lyft built continuous data readiness and governance in a 70+ petabyte environment: how they classified at scale, where they found unexpected exposure, and what they'd do differently.

Tuesday, June 2 | 8:00–8:45 AM (Breakfast)

Expedia Distinguished Architect Payam Chychi on governing a 450-petabyte environment: the sprawl problem, the AI data access challenge, and the architecture decisions that made classification actionable.

Wednesday, June 3 | 8:00–8:45 AM (Breakfast)

SoFi Sr. Manager of Product Security Engineering Zach Schulze on making 70+ PB of cloud data AI-ready, including how they combined Sentra DSPM with Wiz CSPM to reduce noise and govern safely.

Seats are limited and these sessions fill fast. Register at the Gartner SRM 2026 event page →

Tuesday, June 2: CISO Executive Dinner

7:30–9:30 PM | Grace's Mandarin | National Harbor

An invitation-only dinner with a small group of security leaders, including the Lyft CISO and security teams from Expedia and SoFi. Small tables. No presentations. The kind of conversation that only happens when the right people are in the right room.

If you'd like to be considered for an invitation, reach out directly via the event page or connect with your Sentra contact.

Monday–Wednesday: Executive 1:1 Briefings

8:00 AM–5:00 PM | Sentra Private Meeting Suite

For security leaders who want to apply the Lyft, SoFi, and Expedia learnings to their own environment: what AI readiness actually means given your data estate, your AI initiatives, and where your exposure lives. Sessions are led by Sentra's head of product or head of customer implementations. No slides. Just the right conversation.

All Week: Live Demos at Booth #222

See how Sentra discovers, classifies, and secures the data already in the path of your AI. The demo is built around your questions — bring the hard ones. The team onsite has worked with some of the largest data environments in the world.

Why This Matters Right Now

Gartner SRM is the right venue for this conversation, and 2026 is the right year to have it.

AI deployment accelerated faster than most security teams anticipated. The governance frameworks, classification foundations, and access controls that data-driven AI requires were, in many cases, not in place when the rollout happened. Now those teams are working backward — trying to understand what their AI can actually reach, and whether the data feeding it is classified accurately enough to trust.

The organizations presenting at our events this week tackled this problem at a scale that most enterprises haven't reached yet. What they learned applies regardless of environment size: classification has to happen before AI touches the data, not after. The inventory has to reflect reality continuously, not periodically. And governing AI access requires a fundamentally different approach than governing human access.

If you're at Gartner SRM and this is the problem your organization is working on, the sessions above are worth your time.

See the full schedule and register at sentra.io/gartner-srm-2026 →

DLP False Positives Are Drowning Your Security Team: How to Cut Noise with DSPM

Ask any security engineer how they feel about DLP alerts and you’ll usually get the same reaction. They are drowning in them. Over the last decade, DLP has built a reputation for noisy alerts, rigid rules, and confusing dashboards that bury real risk under a mountain of “maybe” events.

Teams roll out endpoint, email, and network DLP, wire in SaaS connectors, and import standard PCI/PII templates. Within weeks, analysts are triaging hundreds of alerts a day, most of which turn out to be benign. Business users complain that normal work is blocked, so policies get carved up with exceptions or quietly disabled. Meanwhile, the most sensitive data quietly spreads into collaboration tools, cloud storage, and AI workflows that DLP never sees.

The problem is that DLP is being asked to do too much on its own: discover sensitive data, understand its business context, and enforce policies in motion, all from a narrow view of each channel. To fix false positives in a durable way, you have to stop treating DLP as the brain of your data security program and give it an actual data-intelligence layer to work with.

That’s the role of modern Data Security Posture Management (DSPM).

Why Traditional DLP Can Be So Noisy

Most DLP engines still lean heavily on pattern matching and static rules. They look for strings that resemble card numbers, social security numbers, or keywords, and they try to infer “sensitive vs. not” from whatever they can see in a single email, file, or HTTP transaction. That approach might have been tolerable when most sensitive data sat in a few on‑prem systems, but it doesn’t scale to multi‑cloud, SaaS, and AI‑driven environments.

In practice, three things tend to go wrong:

First, DLP rarely has full visibility. Sensitive data now lives in cloud data lakes, SaaS apps, shared drives, ticketing systems, and AI training sets. Many of those locations are either out of reach for traditional DLP or only partially covered.

Second, the rules themselves are crude. A nine‑digit number might be a government ID, or it might be an internal ticket number. A CSV export might be an innocuous test file or a real production dump. Without a shared understanding of what the data actually represents, rules fire on look‑alikes and miss real exposures.

Third, each DLP product (the endpoint agent, the email gateway, the CASB) tries to solve classification locally. You end up with inconsistent detections and competing definitions of “sensitive” that don’t match what the business actually cares about. Add those up, and it’s no surprise that false positives consume so much analyst time and so much political capital with the business.
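The "crude rules" problem is easy to reproduce. The sketch below compares a naive nine-digit rule with a slightly smarter one that also requires an SSN-related keyword nearby. The keyword list and the 40-character window are hypothetical choices for illustration, not any DLP product's logic.

```python
import re

# Naive rule: nine digits, optionally formatted NNN-NN-NNNN.
NINE_DIGITS = re.compile(r"\b\d{3}-?\d{2}-?\d{4}\b")
# Hypothetical context keywords; production classifiers use far richer signals.
SSN_CONTEXT = ("ssn", "social security", "taxpayer id")

def naive_match(text: str) -> bool:
    """Fires on anything shaped like a nine-digit ID."""
    return NINE_DIGITS.search(text) is not None

def context_match(text: str, window: int = 40) -> bool:
    """Fires only if an SSN-related keyword appears within `window` chars of the number."""
    lowered = text.lower()
    for m in NINE_DIGITS.finditer(text):
        nearby = lowered[max(0, m.start() - window): m.end() + window]
        if any(keyword in nearby for keyword in SSN_CONTEXT):
            return True
    return False

samples = {
    "ticket": "Closing ticket 482915337 after the deploy.",
    "ssn": "Employee SSN: 482-91-5337 on file.",
}
for name, text in samples.items():
    print(name, naive_match(text), context_match(text))
# ticket True False
# ssn True True
```

Even the context-aware version is still a per-channel guess. It cannot tell that a CSV column of bare numbers holds SSNs, which is the gap a dedicated classification layer is meant to close.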

How DSPM Changes the Equation

DSPM was designed to separate what DLP has been trying to do into dedicated layers. Instead of asking DLP to discover, classify, and enforce all at once, DSPM owns discovery and classification, and DLP focuses on enforcement.

A DSPM platform like Sentra connects directly, via APIs and in‑environment scanning, to your cloud, SaaS, and on‑prem data stores. It builds a unified inventory of data, then uses AI‑driven models and domain‑specific logic to decide:

- What is this object?

- How sensitive is it?

- Which regulations or policies apply?

- Who or what can currently access it?

From there, DSPM applies consistent labels to that data, often using frameworks like Microsoft Purview Information Protection (MPIP) so labels are understood by other tools. Those labels are then pushed into your DLP stack, SSE/CASB, and email and endpoint controls, so every enforcement point is working from the same definition of sensitivity, instead of guessing on the fly.

Once DLP is enforcing on clear labels and context rather than raw patterns, you no longer need dozens of near-duplicate rules per channel. Policies become simpler and more precise, which is what allows teams to realistically cut false positives substantially, in some cases by half or more.
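The core idea is to derive one shared definition of sensitivity once and reuse it at every enforcement point. That can be sketched as a small function. The classification fields, the label tiers (loosely modeled on the tiered sensitivity labels an organization might define in Purview), and the precedence rules are hypothetical. This is not Sentra's classifier or the MPIP SDK.

```python
from dataclasses import dataclass, field

# Hypothetical label tiers, lowest to highest sensitivity.
LABEL_ORDER = ["Public", "General", "Confidential", "Highly Confidential"]

# Hypothetical mapping from detected data classes to a minimum label.
CLASS_TO_LABEL = {
    "credit_card": "Highly Confidential",
    "ssn": "Highly Confidential",
    "medical_record": "Highly Confidential",
    "email_address": "Confidential",
    "internal_doc": "General",
}

@dataclass
class Classification:
    object_id: str
    data_classes: set = field(default_factory=set)   # e.g. {"credit_card"}
    regulations: set = field(default_factory=set)    # e.g. {"PCI-DSS"}

def rank(label: str) -> int:
    return LABEL_ORDER.index(label)

def derive_label(c: Classification) -> str:
    """Pick the most restrictive label implied by any detected data class."""
    label = "Public"
    for data_class in c.data_classes:
        candidate = CLASS_TO_LABEL.get(data_class, "General")
        if rank(candidate) > rank(label):
            label = candidate
    # Anything in scope for a regulation is at least Confidential.
    if c.regulations and rank(label) < rank("Confidential"):
        label = "Confidential"
    return label

billing = Classification("s3://billing/export.csv", {"email_address", "credit_card"}, {"PCI-DSS"})
handbook = Classification("drive://hr/handbook.pdf", {"internal_doc"})
print(derive_label(billing))   # Highly Confidential
print(derive_label(handbook))  # General
```

Because the endpoint agent, email gateway, and CASB would all read the same resulting label, none of them needs its own regex for card numbers.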

A Practical Approach to Cutting DLP Noise

If your security team is exhausted by DLP alerts today, you don’t need another round of regex tuning. You need a change in operating model. A pragmatic sequence looks like this.

Start by measuring the problem instead of just reacting to it. Capture how many DLP alerts you see per week, how many of those are ultimately dismissed, and how much analyst time they consume. Pay special attention to the policies and channels that generate the most noise, because that’s where you’ll see the biggest benefit from a DSPM‑driven approach.
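Measuring the problem can be as simple as aggregating an alert export by policy. The record shape (`policy`, `disposition`, `minutes_spent`) and the sample data below are a hypothetical export format, not a specific DLP product's schema.

```python
from collections import defaultdict

# Hypothetical alert export: one dict per alert, with the analyst's final disposition.
alerts = [
    {"policy": "PCI-regex-email", "disposition": "dismissed", "minutes_spent": 6},
    {"policy": "PCI-regex-email", "disposition": "dismissed", "minutes_spent": 4},
    {"policy": "PCI-regex-email", "disposition": "confirmed", "minutes_spent": 20},
    {"policy": "SSN-endpoint", "disposition": "dismissed", "minutes_spent": 5},
    {"policy": "PHI-partner-share", "disposition": "confirmed", "minutes_spent": 30},
]

def noise_report(alerts):
    """Per-policy (policy, total, dismissal_rate, wasted_minutes), noisiest first."""
    stats = defaultdict(lambda: {"total": 0, "dismissed": 0, "wasted_minutes": 0})
    for a in alerts:
        s = stats[a["policy"]]
        s["total"] += 1
        if a["disposition"] == "dismissed":
            s["dismissed"] += 1
            s["wasted_minutes"] += a["minutes_spent"]
    rows = [
        (policy, s["total"], round(s["dismissed"] / s["total"], 2), s["wasted_minutes"])
        for policy, s in stats.items()
    ]
    # Rank by analyst time lost to alerts that turned out to be benign.
    return sorted(rows, key=lambda r: r[3], reverse=True)

for row in noise_report(alerts):
    print(row)
```

Sorting by wasted minutes rather than raw alert count puts the policies that cost analysts the most time at the top. Those are the first candidates for a label-driven rewrite.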

Next, work with DSPM to turn your noisiest rules into label‑driven policies. Instead of “block any message that looks like it contains a card number,” express the rule as “block files labeled PCI sent to personal domains” or “quarantine emails carrying PHI labels to unapproved partners.” Once Sentra or another DSPM platform is reliably applying those labels, DLP simply has to enforce on them.
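Once labels are reliable, a rule like "block files labeled PCI sent to personal domains" reduces to a lookup rather than content inspection. The event fields, label names, personal-mail domain list, and approved-partner list below are hypothetical.

```python
from dataclasses import dataclass

# Hypothetical list of consumer mail domains treated as "personal".
PERSONAL_DOMAINS = {"gmail.com", "outlook.com", "yahoo.com"}
# Hypothetical allow-list of partners approved to receive PHI.
APPROVED_PHI_PARTNERS = {"claims-partner.example"}

@dataclass
class OutboundEvent:
    label: str      # label applied upstream by the DSPM layer
    channel: str    # "email", "upload", ...
    recipient: str  # e-mail address or destination host

def recipient_domain(recipient: str) -> str:
    return recipient.rsplit("@", 1)[-1].lower()

def evaluate(event: OutboundEvent) -> str:
    """Label-driven policy: no content regexes, only the label and the destination."""
    domain = recipient_domain(event.recipient)
    if event.label == "PCI" and domain in PERSONAL_DOMAINS:
        return "block"
    if event.label == "PHI" and event.channel == "email" and domain not in APPROVED_PHI_PARTNERS:
        return "quarantine"
    return "allow"

print(evaluate(OutboundEvent("PCI", "email", "someone@gmail.com")))           # block
print(evaluate(OutboundEvent("PHI", "email", "ops@claims-partner.example")))  # allow
print(evaluate(OutboundEvent("PHI", "email", "ops@unknown.example")))         # quarantine
```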

Then, add business context. The same file can be benign in one context and dangerous in another. Combine labels with identity, role, channel, and basic behavioral signals such as time of day, destination, and volume, so that only genuinely suspicious events result in hard blocks or escalations. A finance export labeled ‘Confidential’ going to an approved auditor should not be treated the same as that export leaving for an unknown Gmail account at midnight.
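Combining the label with context can be sketched as a small additive risk score. The weights, thresholds, approved-destination list, and business-hours window are all hypothetical placeholders that you would tune against your own alert history.

```python
from dataclasses import dataclass
from datetime import datetime

APPROVED_DESTINATIONS = {"auditor.example"}  # hypothetical
BUSINESS_HOURS = range(7, 20)                # 07:00-19:59 local time, hypothetical

@dataclass
class Transfer:
    label: str
    sender_role: str
    destination: str
    timestamp: datetime
    megabytes: float

def risk_score(t: Transfer) -> int:
    score = 0
    if t.label in {"Confidential", "Highly Confidential"}:
        score += 2
    if t.destination not in APPROVED_DESTINATIONS:
        score += 2
    if t.timestamp.hour not in BUSINESS_HOURS:
        score += 1
    if t.megabytes > 50:
        score += 1
    if t.sender_role == "finance" and t.destination in APPROVED_DESTINATIONS:
        score -= 2  # expected business flow for this role
    return score

def decide(t: Transfer) -> str:
    s = risk_score(t)
    if s >= 5:
        return "block"
    if s >= 3:
        return "escalate"
    return "allow"

to_auditor = Transfer("Confidential", "finance", "auditor.example", datetime(2026, 3, 2, 14, 0), 12.0)
to_gmail = Transfer("Confidential", "finance", "gmail.com", datetime(2026, 3, 3, 0, 5), 80.0)
print(decide(to_auditor), decide(to_gmail))  # allow block
```

The same "Confidential" label produces opposite outcomes here: the approved, daytime, role-appropriate transfer scores 0, while the midnight bulk export to a personal mailbox scores 6.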

Finally, create a feedback loop. Allow analysts to flag alerts as false positives or misconfigurations, and give users controlled ways to override with justification in edge cases. Feed that information back into DSPM tuning and DLP policies at a regular cadence, so your classification and rules get closer to how the business actually operates.

Over time, you’ll find that you write fewer DLP rules, not more. The rules you do have are easier to explain to stakeholders. And most importantly, your analysts spend their time on true positives and meaningful insider‑risk investigations, not on the hundredth low‑value alert of the week.

At that point, you haven’t just made DLP tolerable. You’ve turned it into a quiet, reliable enforcement layer sitting on top of a data‑intelligence foundation.

<blogcta-big>

How to Protect Sensitive Data in Azure

As organizations migrate critical workloads to the cloud in 2026, understanding how to protect sensitive data in Azure has become a foundational security requirement. Azure offers a deeply layered security architecture spanning encryption, key management, data loss prevention, and compliance enforcement. This article breaks down each layer with technical precision, so security teams and architects can make informed decisions about safeguarding their most valuable data assets.

Azure Data Protection: A Layered Security Model

Azure's approach to data protection relies on multiple overlapping controls that work together to prevent unauthorized access, accidental modification, and data loss.

Storage-Level Encryption and Access Controls

Azure Storage Service Encryption (SSE) and Azure disk encryption options automatically protect data using AES-256, meeting FIPS 140-2 compliance standards across core services such as Azure Storage, Azure SQL Database, and Azure Data Lake.

All managed disks, snapshots, and images are encrypted by default using SSE with service-managed keys, and organizations can switch to customer-managed keys (CMKs) in Azure Key Vault when they need tighter control.

Azure Resource Manager locks, available in CanNotDelete and ReadOnly modes, prevent accidental deletion or configuration changes to critical storage accounts and other resources.

Immutability, Recovery, and Redundancy

- Immutability policies on Azure Blob Storage ensure data cannot be overwritten or deleted once written, which is valuable for regulatory compliance scenarios like financial records or audit logs.

- Soft delete retains deleted containers, blobs, or file shares in a recoverable state for a configurable period.

- Blob versioning and point-in-time restore allow rollback to earlier states to recover from logical corruption or accidental changes.

- Redundancy options, including LRS, ZRS, and cross-region options like GRS/GZRS, protect against hardware failures and regional outages.

Microsoft Defender for Storage further strengthens this model by detecting suspicious access patterns, malicious file uploads, and potential data exfiltration attempts across storage accounts.
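The interaction between a time-based immutability (WORM) policy and soft delete can be modeled in a few lines. This is a conceptual toy, not the Azure Storage SDK. The class, its method names, and the integer day clock are hypothetical, and real immutability policies have more states (for example, locked vs. unlocked policies and legal holds).

```python
from dataclasses import dataclass, field

@dataclass
class ToyContainer:
    """Conceptual model of WORM retention plus soft delete (not the Azure SDK)."""
    retention_days: int      # time-based immutability period
    soft_delete_days: int    # how long deleted blobs stay recoverable
    blobs: dict = field(default_factory=dict)    # name -> (data, written_on)
    deleted: dict = field(default_factory=dict)  # name -> (data, written_on, deleted_on)

    def _locked(self, name: str, today: int) -> bool:
        _, written_on = self.blobs[name]
        return today - written_on < self.retention_days

    def write(self, name: str, data: bytes, today: int) -> None:
        if name in self.blobs and self._locked(name, today):
            raise PermissionError(f"{name} is immutable until retention expires")
        self.blobs[name] = (data, today)

    def delete(self, name: str, today: int) -> None:
        if self._locked(name, today):
            raise PermissionError(f"{name} cannot be deleted during retention")
        data, written_on = self.blobs.pop(name)
        self.deleted[name] = (data, written_on, today)  # keep a recoverable copy

    def undelete(self, name: str, today: int) -> None:
        data, written_on, deleted_on = self.deleted.pop(name)
        if today - deleted_on > self.soft_delete_days:
            raise KeyError(f"{name} was purged after the soft-delete window")
        self.blobs[name] = (data, written_on)

c = ToyContainer(retention_days=30, soft_delete_days=7)
c.write("audit-2026-01.log", b"entries", today=0)
try:
    c.write("audit-2026-01.log", b"tampered", today=5)  # inside retention
except PermissionError as err:
    print("blocked:", err)
c.delete("audit-2026-01.log", today=31)    # retention expired, so it is soft-deleted
c.undelete("audit-2026-01.log", today=35)  # still within the 7-day window
print(c.blobs["audit-2026-01.log"][0])     # b'entries'
```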

Azure Encryption at Rest and in Transit

Encryption at Rest

Azure uses an envelope encryption model where a Data Encryption Key (DEK) encrypts the actual data, while a Key Encryption Key (KEK) wraps the DEK. For customer-managed scenarios, KEKs are stored and managed in Azure Key Vault or Managed HSM, while platform-managed keys are handled by Microsoft.
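You can see the DEK/KEK split in a few lines. This sketch runs locally and uses the third-party Python `cryptography` package as a stand-in. AES-256-GCM serves as the DEK cipher, and an RSA-2048 key with OAEP (SHA-256) padding plays the KEK role. In Azure, the KEK would live in Key Vault or Managed HSM and wrap/unwrap would happen there. Nothing below calls an Azure API.

```python
import os
from cryptography.hazmat.primitives import hashes
from cryptography.hazmat.primitives.asymmetric import padding, rsa
from cryptography.hazmat.primitives.ciphers.aead import AESGCM

# KEK: local stand-in for a customer-managed RSA key held in Key Vault / Managed HSM.
kek_private = rsa.generate_private_key(public_exponent=65537, key_size=2048)
kek_public = kek_private.public_key()
OAEP = padding.OAEP(mgf=padding.MGF1(algorithm=hashes.SHA256()),
                    algorithm=hashes.SHA256(), label=None)

def encrypt_record(plaintext: bytes) -> dict:
    dek = AESGCM.generate_key(bit_length=256)   # fresh 256-bit data encryption key
    nonce = os.urandom(12)                      # 96-bit GCM nonce
    ciphertext = AESGCM(dek).encrypt(nonce, plaintext, None)
    wrapped_dek = kek_public.encrypt(dek, OAEP) # only the wrapped DEK is stored
    return {"ciphertext": ciphertext, "nonce": nonce, "wrapped_dek": wrapped_dek}

def decrypt_record(record: dict) -> bytes:
    dek = kek_private.decrypt(record["wrapped_dek"], OAEP)  # the "unwrap" step
    return AESGCM(dek).decrypt(record["nonce"], record["ciphertext"], None)

plaintext = b"account=1234; balance=5000"
record = encrypt_record(plaintext)
print(decrypt_record(record) == plaintext)  # True
```

The benefit of this split shows up at rotation and revocation time. Rotating the KEK only requires re-wrapping each stored DEK, not re-encrypting the data, and revoking access to the KEK makes every wrapped DEK, and therefore the data, unreadable.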

AES-256 is the default encryption algorithm across Azure Storage, Azure SQL Database, and Azure Data Lake for server-side encryption.

Transparent Data Encryption (TDE) applies this protection automatically for Azure SQL Database and Azure Synapse Analytics data files, encrypting data and log files in real time using a DEK protected by a key hierarchy that can include customer-managed keys.

For compute, encryption at host provides end-to-end encryption of VM data (including temporary disks, ephemeral OS disks, and disk caches) before it’s written to the underlying storage. It is Microsoft’s recommended option going forward as Azure Disk Encryption is phased out.

Encryption in Transit

Azure enforces modern transport-level encryption across its services:

- TLS 1.2 or later is required for encrypted connections to Azure services, with many services already enforcing TLS 1.2+ by default.

- HTTPS is mandatory for Azure portal interactions and can be enforced for storage REST APIs through the “secure transfer required” setting on storage accounts.

- Azure Files uses SMB 3.0 with built-in encryption for file shares.

- At the network layer, MACsec (IEEE 802.1AE) encrypts traffic between Azure datacenters, providing link-layer protection for traffic that leaves a physical boundary controlled by Microsoft.

- Azure VPN Gateways support IPsec/IKE (site-to-site) and SSTP (point-to-site) tunnels for hybrid connectivity, encrypting traffic between on-premises and Azure virtual networks.

- For sensitive columns in Azure SQL Database, Always Encrypted ensures data is encrypted within the client application before it ever reaches the database server.

A simplified view:

| Scenario | Encryption Method | Algorithm / Protocol |
|---|---|---|
| Storage (blobs, files, disks) | Azure Storage Service Encryption | AES-256 (FIPS 140-2) |
| Databases | Transparent Data Encryption (TDE) | AES-256 + RSA-2048 (CMK) |
| Virtual machine disks | Encryption at host / Azure Disk Encryption | AES-256 (PMK or CMK) |
| Data in transit (services) | TLS/HTTPS | TLS 1.2+ |
| Data center interconnects | MACsec | IEEE 802.1AE |
| Hybrid connectivity | VPN Gateway | IPsec/IKE, SSTP |
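On the client side, you can refuse to negotiate anything older than TLS 1.2 regardless of what a service would accept. The sketch below uses Python's standard `ssl` module. The storage hostname in the comment is a placeholder, and the code only builds the context rather than opening a connection.

```python
import ssl

def strict_tls_context() -> ssl.SSLContext:
    """Client context that verifies certificates and requires TLS 1.2 or later."""
    ctx = ssl.create_default_context()            # certificate + hostname verification on
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2  # refuse TLS 1.0 / 1.1 handshakes
    return ctx

ctx = strict_tls_context()
# Usage against a placeholder storage endpoint:
#   import socket
#   host = "<account>.blob.core.windows.net"
#   with socket.create_connection((host, 443)) as sock:
#       with ctx.wrap_socket(sock, server_hostname=host) as tls:
#           print(tls.version())
```

This complements the server-side "secure transfer required" and minimum TLS settings. Even if a service were misconfigured, this client would still not downgrade.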

Azure Key Vault and Advanced Key Management

Encryption is only as strong as the key management strategy behind it. Azure Key Vault, Managed HSM, and related HSM offerings are the central services for storing and managing cryptographic keys, secrets, and certificates.

Key options include:

- Platform-managed keys (PMK, also called Microsoft-managed or service-managed keys): Microsoft handles key generation, rotation, and backup transparently. This is the default for many services and minimizes operational overhead.

- Customer-managed keys (CMK): Organizations manage key lifecycles, rotation schedules, access policies, and revocation in Key Vault or Managed HSM, and can bring their own keys (BYOK).

- Hardware Security Modules (HSMs): Tamper-resistant hardware key storage for workloads that require FIPS 140-2 Level 3-style assurance, common in financial services and healthcare.

Azure supports automatic key rotation policies in Key Vault, reducing the operational burden of manual rotation. When using CMKs with TDE for Azure SQL Database, a Key Vault key (commonly RSA-2048) serves as the KEK that protects the DEK, adding a layer of customer-controlled governance to database encryption.

Azure Encryption at Host for Virtual Machines

Encryption at host extends Azure’s encryption coverage down to the VM host layer, ensuring that:

- Temporary disks, ephemeral OS disks, and disk caches are encrypted before they’re written to physical storage.

- Encryption is applied at the Azure infrastructure level, with no changes to the guest OS or application stack required.

- It supports both platform-managed keys and customer-managed keys via Key Vault, including automatic rotation.

This model is particularly important for regulated workloads (e.g., EHR systems, payment processing, or financial transaction logs) where even transient data on caches or temporary disks must be protected. It also reduces the risk of configuration drift that can occur when encryption is managed individually at the OS or application layer. As Azure Disk Encryption is gradually retired, encryption at host is the recommended default for new VM-based workloads.

Data Loss Prevention in and Around Azure

Encryption protects data at rest and in transit, but it does not prevent authorized users from mishandling or leaking sensitive information. That’s the role of data loss prevention (DLP).

In Microsoft’s ecosystem, DLP is primarily delivered through Microsoft Purview Data Loss Prevention, which applies policies across:

- Microsoft 365 services such as Exchange Online, SharePoint Online, OneDrive, and Teams

- Endpoints via endpoint DLP

- On-premises repositories and certain third-party cloud apps through connectors and integration with Microsoft Defender and Purview capabilities

How DLP Policies Work

DLP policies use automated content analysis - keyword matching, regular expressions, and machine learning-based classifiers - to detect sensitive information such as financial records, health data, and PII. When a violation is detected, policies can:

- Warn users with policy tips

- Require justification

- Block sharing, copying, or uploading actions

- Trigger alerts and incident workflows for security and compliance teams

Policies can initially run in simulation/audit mode so teams can understand impact before switching to full enforcement.
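The pieces above (a pattern, a validity check, supporting keywords, an action per confidence level, and simulation mode) can be sketched together. This works in a similar spirit to how Purview sensitive information types assign confidence, but the pattern, keywords, thresholds, and action names are hypothetical, not Purview's definitions.

```python
import re

# 16-digit card-like numbers, optionally grouped with spaces or dashes.
CARD_PATTERN = re.compile(r"\b\d{4}[ -]?\d{4}[ -]?\d{4}[ -]?\d{4}\b")
CARD_KEYWORDS = ("card number", "visa", "mastercard", "cvv", "expiry")  # hypothetical
RANK = {"none": 0, "low": 1, "medium": 2, "high": 3}
ACTIONS = {  # hypothetical mapping from confidence to policy action
    "none": "allow",
    "low": "allow",
    "medium": "require_justification",
    "high": "block_and_alert",
}

def luhn_valid(candidate: str) -> bool:
    """Luhn checksum; about nine in ten random digit strings fail it."""
    digits = [int(ch) for ch in candidate if ch.isdigit()]
    total = 0
    for i, d in enumerate(reversed(digits)):
        if i % 2 == 1:  # double every second digit from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0

def confidence(text: str) -> str:
    lowered = text.lower()
    has_keyword = any(k in lowered for k in CARD_KEYWORDS)
    best = "none"
    for m in CARD_PATTERN.finditer(text):
        if not luhn_valid(m.group()):
            level = "low"     # pattern only
        elif has_keyword:
            level = "high"    # pattern + checksum + supporting keyword
        else:
            level = "medium"  # pattern + checksum
        if RANK[level] > RANK[best]:
            best = level
    return best

def enforce(text: str, audit_mode: bool = True) -> str:
    """In audit (simulation) mode, report the action instead of applying it."""
    action = ACTIONS[confidence(text)]
    if audit_mode and action != "allow":
        return f"audit:{action}"
    return action

print(enforce("Visa card number 4111 1111 1111 1111"))                     # audit:block_and_alert
print(enforce("Visa card number 4111 1111 1111 1111", audit_mode=False))   # block_and_alert
print(enforce("Order ref 4111 1111 1111 1112", audit_mode=False))          # allow
```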

DLP and AI / Azure Workloads

For AI workloads and Azure services, DLP is part of a broader control set:

- Purview DLP governs content flowing through Microsoft 365 and integrated services that may feed AI assistants and copilots.

- On Azure resources such as Azure OpenAI, you use a combination of:

  - Network restrictions (restrictOutboundNetworkAccess, private endpoints, NSGs, and firewalls) to prevent services from calling unauthorized external endpoints.

  - Microsoft Defender for Cloud policies and recommendations for monitoring misconfigurations, exposed endpoints, and suspicious activity.

  - Audit logging to verify that sensitive data is not being transmitted where it shouldn’t be.

Together, these capabilities give you both content-centric controls (DLP) and infrastructure-level controls (network and posture management) for AI workloads.
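You can check the network-restriction part of that control set against a resource's exported ARM properties. `restrictOutboundNetworkAccess`, `allowedFqdnList`, `publicNetworkAccess`, and `privateEndpointConnections` are properties of Azure AI services (Cognitive Services) accounts. The sample resource and the "no wildcard destinations" rule are assumptions for illustration, and the function does not call Azure.

```python
def ai_account_findings(resource: dict) -> list[str]:
    """Flag settings that let an AI account be reached publicly or call out too broadly."""
    props = resource.get("properties", {})
    findings = []
    if props.get("publicNetworkAccess", "Enabled") != "Disabled":
        findings.append("public network access is enabled")
    if not props.get("restrictOutboundNetworkAccess", False):
        findings.append("outbound network access is unrestricted")
    else:
        # Hypothetical house rule: wildcard FQDNs weaken the outbound allow-list.
        for fqdn in props.get("allowedFqdnList", []):
            if fqdn.startswith("*"):
                findings.append(f"wildcard outbound destination: {fqdn}")
    if not props.get("privateEndpointConnections"):
        findings.append("no private endpoint connections")
    return findings

# Hypothetical exported resource.
account = {
    "name": "contoso-openai",
    "properties": {
        "publicNetworkAccess": "Enabled",
        "restrictOutboundNetworkAccess": True,
        "allowedFqdnList": ["contosostorage.blob.core.windows.net", "*.example.com"],
        "privateEndpointConnections": [],
    },
}
for finding in ai_account_findings(account):
    print(finding)
```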

Compliance, Monitoring, and Ongoing Governance

Meeting regulatory requirements in Azure demands continuous visibility into where sensitive data lives, how it moves, and who can access it.

- Azure Policy enforces configuration baselines at scale: ensuring encryption is enabled, secure transfer is required, TLS versions are restricted, and storage locations meet regional requirements.

- For GDPR, you can use policy to restrict data storage to approved EU regions; for HIPAA, you enforce audit logging, encryption, and access controls on systems that handle PHI.

- Periodic audits should verify:

  - Encryption is enabled across all storage accounts and databases.

  - Key rotation schedules for CMKs are in place and adhered to.

  - DLP policies cover intended data types and locations.

  - Role-based access control (RBAC) and Privileged Identity Management (PIM) are used to maintain least-privilege access.

Azure Monitor and Microsoft Defender for Cloud provide real-time visibility into encryption status, access anomalies, misconfigurations, and policy violations across your subscriptions.
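Several of these audit checks can run against an inventory export. The storage-account property names (`supportsHttpsTrafficOnly`, `minimumTlsVersion`) follow the Azure Resource Manager schema for storage accounts. The approved-region set, the 90-day key-age threshold, the key records with a `created` date, and the sample data are hypothetical.

```python
from datetime import date

ALLOWED_EU_REGIONS = {"westeurope", "northeurope", "germanywestcentral"}  # hypothetical policy
MAX_KEY_AGE_DAYS = 90                                                    # hypothetical rotation SLA

def audit_storage(account: dict) -> list[str]:
    props = account.get("properties", {})
    issues = []
    if not props.get("supportsHttpsTrafficOnly", False):
        issues.append("secure transfer not required")
    if props.get("minimumTlsVersion") not in {"TLS1_2", "TLS1_3"}:
        issues.append("minimum TLS version below 1.2")
    if account.get("location") not in ALLOWED_EU_REGIONS:
        issues.append(f"region {account.get('location')} not approved")
    return issues

def audit_keys(keys: list[dict], today: date) -> list[str]:
    return [f"key {k['name']} is {(today - k['created']).days} days old"
            for k in keys if (today - k["created"]).days > MAX_KEY_AGE_DAYS]

accounts = [
    {"name": "finrecords", "location": "westeurope",
     "properties": {"supportsHttpsTrafficOnly": True, "minimumTlsVersion": "TLS1_2"}},
    {"name": "legacyexports", "location": "eastus",
     "properties": {"supportsHttpsTrafficOnly": False, "minimumTlsVersion": "TLS1_0"}},
]
keys = [{"name": "tde-cmk", "created": date(2026, 1, 5)},
        {"name": "blob-cmk", "created": date(2025, 6, 1)}]

for a in accounts:
    print(a["name"], audit_storage(a))
print(audit_keys(keys, today=date(2026, 3, 1)))
```

In practice you would feed this from an inventory export on a schedule and route non-empty results into the same ticketing flow as Defender for Cloud recommendations.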

How Sentra Complements Azure's Native Controls

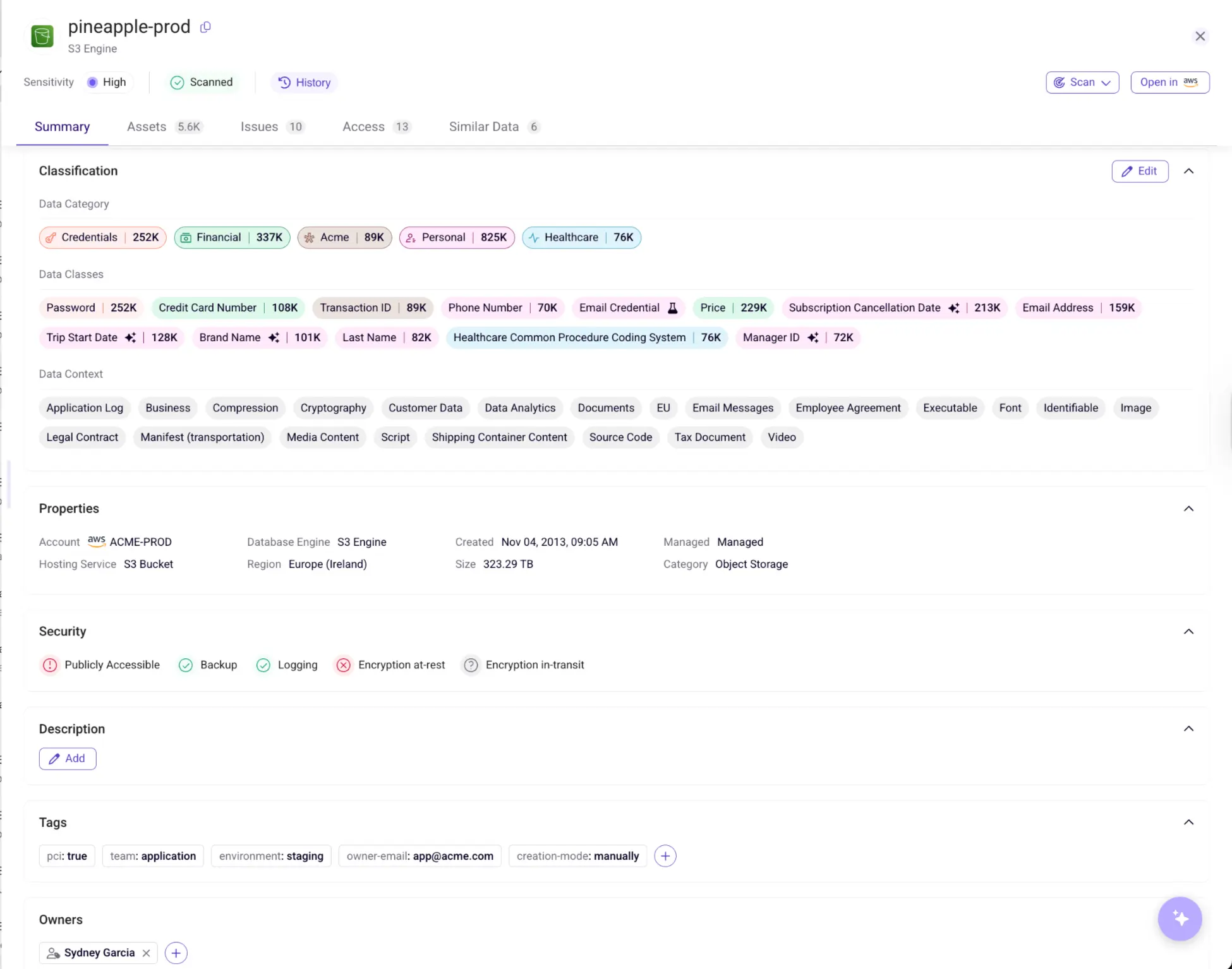

Sentra is a cloud-native data security platform that discovers and governs sensitive data at petabyte scale directly inside your Azure environment - data never leaves your control. It provides complete visibility into:

- Where sensitive data actually resides across Azure Storage, databases, SaaS integrations, and hybrid environments

- How that data moves between services, regions, and environments, including into AI training pipelines and copilots

- Who and what has access, and where excessive permissions or toxic combinations put regulated data at risk

Sentra’s AI-powered discovery and classification engine integrates with Microsoft’s ecosystem to:

- Feed high-accuracy labels and data classes into tools like Microsoft Purview DLP, improving policy effectiveness

- Enforce data-driven guardrails that prevent unauthorized AI access to sensitive data

- Identify and help eliminate shadow, redundant, obsolete, or trivial (ROT) data, typically reducing cloud storage costs by around 20% while shrinking the overall attack surface.

Knowing how to protect sensitive data in Azure is not a one-time configuration exercise; it is an ongoing discipline that combines strong encryption, disciplined key management, proactive data loss prevention, and continuous compliance monitoring. Organizations that treat these controls as interconnected layers rather than isolated features will be best positioned to meet current regulatory demands and the emerging security challenges of widespread AI adoption.

<blogcta-big>

Best Cloud Data Security Solutions for 2026

As enterprises scale cloud workloads and AI initiatives in 2026, cloud data security has become a board‑level priority. Regulatory frameworks are tightening, AI assistants are touching more systems, and sensitive data now spans IaaS, PaaS, SaaS, data lakes, and on‑prem.

This guide compares four of the leading cloud data security solutions - Sentra, Wiz, Prisma Cloud, and Cyera - across:

- Architecture and deployment

- Data movement and “toxic combination” detection

- AI risk coverage and Copilot/LLM governance

- Compliance automation and real‑world user sentiment

| Platform | Core Strength | Deployment Model | AI & Data Risk Coverage |
|---|---|---|---|
| Sentra | In-environment DSPM and AI-aware data governance, with strong focus on regulated data and unstructured stores | Purely agentless, in-place scanning in your cloud and data centers; optional lightweight on-prem scanners for file shares and databases | Shadow AI detection, M365 Copilot and AI agent inventory, data-flow mapping into AI pipelines, and guardrails for cloud and SaaS data |
| Wiz | Cloud-native CNAPP and Security Graph tying together data, identity, and cloud posture | Primarily agentless via cloud provider APIs and snapshots, with optional eBPF sensor for runtime context | Data lineage into AI pipelines via its security graph; AI exposure surfaced alongside misconfigurations and identity risk |
| Prisma Cloud | Code-to-cloud security, infrastructure risk, and compliance across multi-cloud | Hybrid: agentless scanning plus optional agents/sidecars for deep runtime protection | Tracks data movement into AI pipelines as part of attack-path analysis and compliance checks |
| Cyera | AI-native data discovery with converged DLP + DSPM for cloud data | Agentless, in-place scanning using local inspection or snapshots | AISPM and AI runtime protection for prompts, responses, and agents across SaaS and cloud environments |

What Users Are Saying

Review platforms and field conversations surface patterns that go beyond feature matrices.

Sentra

Pros

- Strong shadow data discovery, including legacy exports, backups, and unstructured sources like chat logs and call transcripts that other tools often miss

- Built‑in compliance facilitation that reduces audit prep time for healthcare, financial services, and other regulated industries

- In‑environment architecture that consistently appeals to privacy, risk, and data protection teams concerned about data residency and vendor data handling

Cons

- Dashboards and reporting are powerful but can feel dense for first‑time users who aren’t familiar with DSPM concepts

- Third‑party integrations are broad, but some connectors can lag when synchronizing very large environments

Wiz

Pros

- Excellent multi‑cloud visibility and security graph that correlate misconfigurations, identities, and data assets for fast remediation

- Well‑regarded customer success and responsive support teams

Cons

- High alert volume if policies aren’t carefully tuned, which can overwhelm small teams

- Configuration complexity grows with environment size and number of integrations

Prisma Cloud

Pros

- Strong real‑time threat detection tightly coupled with major cloud providers, well suited to security operations teams

- Proven scalability across large, hybrid environments combining containers, VMs, and serverless workloads

Cons

- Cost is frequently cited as a concern in large‑scale deployments

- Steeper learning curve that often requires dedicated training and ownership

Cyera

Pros

- Smooth, agentless deployment with quick time‑to‑value for data discovery in cloud stores

- Highly responsive support and strong focus on classification quality

Cons

- Integration and operationalization complexity in larger enterprises, especially when folding into wider security workflows

- Some backend customization and tuning require direct vendor involvement

Cloud Data Security Platforms: Architecture and Deployment

How a platform scans your data is as important as what it finds. Sending production data to a third‑party cloud for analysis can introduce its own risk, and regulators increasingly expect clear answers on where data is processed.

Sentra: In‑Environment DSPM for Regulated and AI‑Ready Data

Sentra takes a data‑first, in‑environment approach:

- Agentless connectors to cloud provider APIs and SaaS platforms mean sensitive content is scanned inside your accounts; it is never copied to Sentra’s cloud.

- Lightweight on‑prem scanners extend coverage to file shares and databases, creating a unified view across IaaS, PaaS, SaaS, and on‑prem systems.

This design makes Sentra particularly attractive to organizations with strict data residency requirements and privacy‑driven governance models, especially in finance, healthcare, and other regulated sectors.

Wiz: Agentless CNAPP with Optional Runtime Sensors

Wiz is fundamentally agentless, connecting to cloud environments via APIs and leveraging temporary snapshots for inspection.

- An optional eBPF‑based sensor adds runtime visibility for workloads without introducing inline latency.

- The same security graph model underpins both infrastructure risk and emerging data/AI lineage features.

Prisma Cloud: Hybrid Agentless + Agent Model

Prisma Cloud combines:

- Agentless scanning for vulnerabilities, misconfigurations, and compliance posture.

- Optional agents or sidecars when deep runtime protection or granular workload telemetry is required.

This hybrid approach offers powerful coverage, but introduces more operational overhead than purely agentless DSPM platforms like Sentra and Cyera.

Cyera: In‑Place Cloud Data Inspection

Cyera focuses on in‑place data inspection, using local snapshots or direct connections to datastore APIs.

- Sensitive data is analyzed within your environment rather than being shipped to a vendor cloud.

- This aligns well with privacy‑first architectures that treat any external data processing as a risk to be minimized.

Identifying Toxic Combinations and Tracking Data Movement

Static discovery, such as “here are your S3 buckets,” is a basic capability. Real security value comes from correlating data sensitivity, effective access, and how data moves over time across clouds, regions, and environments.

Sentra: Data‑Aware Risk and End‑to‑End Data Flow Visibility

Sentra continuously maps your entire data estate, correlating classification results with IAM, ACLs, and sharing links to surface “toxic combinations”: high‑sensitivity data behind overly broad permissions.

- Tracks data movement across ETLs, database migrations, backups, and AI pipelines so you can see when production data drifts into dev, test, or unapproved regions.

- Extends beyond primary databases to cover data lakes, analytics platforms, and modern big‑data formats in object storage, which are increasingly used as AI training inputs.

This gives security and data teams a living map of where sensitive data actually lives and how it moves, not just a static list of storage locations.
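To make “toxic combination” concrete, here is a minimal Python sketch that joins classification results with effective-access data and flags stores where high-sensitivity data is reachable by a broad audience. The store records, sensitivity levels, the principal-count threshold, and the set of "broad" principals are hypothetical inputs for illustration; this is not Sentra’s data model or API.

```python
from dataclasses import dataclass, field

# Hypothetical sensitivity ranking; real platforms use their own taxonomies.
SENSITIVITY_RANK = {"public": 0, "internal": 1, "confidential": 2, "restricted": 3}

# Hypothetical principals treated as "overly broad" in this sketch.
BROAD_PRINCIPALS = {"AllUsers", "AuthenticatedUsers", "Everyone"}


@dataclass
class DataStore:
    name: str
    sensitivity: str                              # output of classification
    principals: set = field(default_factory=set)  # effective access (IAM, ACLs, links)


def toxic_combinations(stores, min_sensitivity="confidential", max_principals=50):
    """Return names of stores whose data is sensitive AND broadly reachable."""
    threshold = SENSITIVITY_RANK[min_sensitivity]
    flagged = []
    for store in stores:
        sensitive = SENSITIVITY_RANK[store.sensitivity] >= threshold
        broad = bool(store.principals & BROAD_PRINCIPALS) or len(store.principals) > max_principals
        if sensitive and broad:
            flagged.append(store.name)
    return flagged


stores = [
    DataStore("s3://prod-customers", "restricted", {"AllUsers"}),
    DataStore("s3://marketing-assets", "public", {"AllUsers"}),
    DataStore("rds/payroll", "confidential", {"hr-admins"}),
]
print(toxic_combinations(stores))  # ['s3://prod-customers']
```

Neither signal alone is enough: the public marketing bucket is broadly shared but not sensitive, and the payroll database is sensitive but tightly scoped. Only the intersection is flagged.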

Wiz: Security Graph and CIEM

Wiz’s Security Graph maps identities, resources, configurations, and data stores in one model.

- Its CIEM capabilities aggregate effective permissions (including inherited policies and group memberships) to highlight over‑exposed data resources.

- Wiz tracks data lineage into AI pipelines as part of its broader cloud risk view, helping teams understand where sensitive data intersects with ML workloads.

Prisma Cloud: Graph‑Based Attack Paths

Prisma Cloud uses a graph‑based risk engine to continuously simulate attack paths:

- Seemingly low‑risk misconfigurations and broad permissions are combined to identify chains that could expose regulated data.

- The platform generates near real‑time alerts when data crosses geofencing boundaries or flows into unapproved analytics or AI environments.
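The core idea behind graph-based attack paths can be illustrated with a breadth-first search: each edge is a relationship (an open security group, an attached role, a broad permission), and the question is whether any chain connects an exposed entry point to regulated data. The graph and node names below are hypothetical, and commercial engines score and prioritize paths far more richly; this sketch only shows the reachability step.

```python
from collections import deque

# Hypothetical edges: "A -> B" means an attacker who controls A can reach B.
GRAPH = {
    "internet": ["public-ec2"],                    # open security group
    "public-ec2": ["ec2-instance-role"],           # instance profile attached
    "ec2-instance-role": ["s3://prod-customers"],  # broad s3:GetObject grant
    "ci-runner": ["dev-bucket"],
}


def attack_path(graph, source, target):
    """Return the shortest chain of hops from source to target, or None."""
    queue = deque([[source]])
    visited = {source}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == target:
            return path
        for neighbor in graph.get(node, []):
            if neighbor not in visited:
                visited.add(neighbor)
                queue.append(path + [neighbor])
    return None


print(attack_path(GRAPH, "internet", "s3://prod-customers"))
# ['internet', 'public-ec2', 'ec2-instance-role', 's3://prod-customers']
```

Each hop on its own might be rated low risk; it is the complete chain from the internet to customer data that turns them into a finding.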

Cyera: AI‑Native Classification and LLM Validation

Cyera pairs AI‑native classification with access analysis:

- It continuously scans structured and unstructured data for sensitive content, mapping who and what can reach each dataset.

- An LLM‑based validation layer distinguishes real sensitive data from mock or synthetic data in dev/test, which can reduce false positives and cleanup noise.

AI Risk Detection: Shadow AI and Copilot Governance

Enterprise AI tools introduce a new class of risk: employees connecting business data to unauthorized models, or AI agents and copilots inheriting excessive access to legacy data.

Sentra: AI‑Ready Data Security and Copilot Guardrails

Sentra treats AI risk as a data problem:

- Tracks data flows between sources and destinations and compares them against an inventory of approved AI tools, flagging when sensitive data is routed to unauthorized LLMs or agents.

- For Microsoft 365 Copilot, Sentra builds a catalog of data across SharePoint, OneDrive, and Teams, mapping which users and groups can access each set of documents and providing guardrails before Copilot is widely rolled out.

This gives security teams a practical definition of AI data readiness: knowing exactly which data AI can see, and shrinking that blast radius before something goes wrong.
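The flow-versus-inventory check described above can be sketched in a few lines: compare observed data flows against an allowlist of approved AI destinations and flag any flow that carries sensitive data somewhere else. The sketch assumes the flows have already been filtered to AI destinations; the flow records, destination names, and label values are hypothetical illustration data, not Sentra’s schema.

```python
from typing import NamedTuple

# Hypothetical allowlist of sanctioned AI destinations.
APPROVED_AI_TOOLS = {"m365-copilot", "internal-rag-service"}
SENSITIVE_LABELS = {"PII", "PHI", "PCI"}


class Flow(NamedTuple):
    source: str
    destination: str   # an AI tool, model endpoint, or agent
    labels: frozenset  # sensitivity labels on the data being moved


def unauthorized_ai_flows(flows):
    """Flag flows that move sensitive data to an AI destination not on the allowlist."""
    return [
        f for f in flows
        if f.destination not in APPROVED_AI_TOOLS and f.labels & SENSITIVE_LABELS
    ]


flows = [
    Flow("sharepoint/hr", "m365-copilot", frozenset({"PII"})),
    Flow("s3://support-tickets", "personal-chatbot-api", frozenset({"PII", "PCI"})),
    Flow("s3://docs", "personal-chatbot-api", frozenset({"Public"})),
]
for f in unauthorized_ai_flows(flows):
    print(f.source, "->", f.destination)  # s3://support-tickets -> personal-chatbot-api
```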

Cyera: AISPM and AI Runtime Protection

Cyera takes a dual‑layer approach to AI risk:

- AI Security Posture Management (AISPM) inventories sanctioned and unsanctioned AI tools and maps which sensitive datasets each can access.

- AI Runtime Protection monitors prompts, responses, and agent actions in real time, blocking suspicious activity such as data leakage or prompt‑injection attempts.

For M365 Copilot Studio, Cyera integrates with Microsoft Entra’s agent registry to track AI agents and their data scopes.

Wiz and Prisma Cloud: AI as Part of Data Lineage

Wiz and Prisma Cloud both treat AI as an extension of their data lineage and attack‑path capabilities:

- They track when sensitive data enters AI pipelines or training environments and how that intersects with misconfigurations and identity risk.

- However, they do not yet offer the same depth of AI‑specific governance controls and runtime protections as dedicated AI‑aware platforms like Sentra and Cyera.

Compliance Automation and Framework Mapping

For teams preparing for GDPR, HIPAA, PCI, SOC 2, or EU AI Act reviews, manually mapping findings to control sets and assembling evidence is slow and error‑prone.

Platform Approaches to Compliance

| Platform | Compliance Approach |
|---|---|
| Wiz | Maps cloud and workload findings to 100+ built-in frameworks (including GDPR, HIPAA, and the EU AI Act). |
| Prisma Cloud | Automates mapping to major frameworks’ control requirements with audit-ready documentation, often completing large assessments in minutes to under an hour. |
| Sentra | Focuses on regulated data visibility and privacy-driven governance; its in-environment DSPM, classification accuracy, and reporting are frequently cited by users as key to simplifying data-centric audit prep and proving control over sensitive data. Provides petabyte-scale assessments within hours and consolidated evidence for auditors. |
| Cyera | Provides real-time visibility and automated policy enforcement; supports compliance reporting, though public documentation is less explicit on automatic mapping to specific, named control sets. |

Sentra is especially compelling when audits hinge on where regulated data actually lives and how it is governed, rather than just infrastructure posture.

Choosing Among the Best Cloud Data Security Solutions

All four platforms address real, pressing needs, but they are not interchangeable.

- Choose Sentra if you need strict in‑environment data governance, high‑precision discovery across cloud, SaaS, and on‑prem, and AI‑aware guardrails that make Copilot and other AI deployments provably safer, all without moving sensitive data out of your own infrastructure.

- Choose Wiz if your top priority is broad cloud security coverage and a unified graph for vulnerabilities, misconfigurations, identities, and data across multi‑cloud at scale.

- Choose Prisma Cloud if you want a code‑to‑cloud platform that ties data exposure to DevSecOps pipelines and workload runtime protection, and you have the resources to operationalize its breadth.

- Choose Cyera if you’re focused on AI‑native classification and a converged DLP + DSPM motion for large volumes of cloud data, and you’re prepared for a more involved integration phase.

For most mature security programs, the question isn’t whether to adopt these tools but how to layer them:

- A CNAPP for cloud infrastructure risk

- A DSPM platform like Sentra for data‑first visibility and AI readiness

- DLP/SSE for enforcement at egress and user edges

- Compliance automation to translate all of that into evidence your auditors, regulators, and board can trust

Taken together, this stack lets you move faster in the cloud and with AI, without losing control of the data that actually matters.

How to Protect Sensitive Data in AWS

Storing and processing sensitive data in the cloud introduces real risks: misconfigured buckets, over-permissive IAM roles, unencrypted databases, and logs that inadvertently capture PII. As cloud environments grow more complex in 2026, knowing how to protect sensitive data in AWS is a foundational requirement for any organization operating at scale. This guide breaks down the key AWS services, encryption strategies, and operational controls you need to build a layered defense around your most critical data assets.

How to Protect Sensitive Data in AWS (With Practical Examples)

Effective protection requires a layered, lifecycle-aware strategy. Here are the core controls to implement:

Field-Level and End-to-End Encryption

Rather than encrypting all data uniformly, use field-level encryption to target only sensitive fields (Social Security numbers, credit card details) while leaving non-sensitive data in plaintext. A practical approach: deploy Amazon CloudFront with a Lambda@Edge function that intercepts origin requests and encrypts designated JSON fields using RSA. AWS KMS manages the underlying keys, ensuring private keys stay secure and decryption is restricted to authorized services.
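The core of the field-level pattern is to encrypt only designated JSON fields and pass everything else through untouched. It is sketched below in Python. To keep the example self-contained, the cipher is an injected callable. In the CloudFront/Lambda@Edge design described above it would be RSA encryption with a KMS-managed key, which this sketch does not implement. The `demo_cipher` used in the example only reverses the string and is NOT encryption. The field names are hypothetical.

```python
import json
from typing import Callable

# Hypothetical set of fields treated as sensitive in this sketch.
SENSITIVE_FIELDS = {"ssn", "card_number"}


def encrypt_fields(payload: str, fields: set, encrypt: Callable[[str], str]) -> str:
    """Encrypt only the named top-level JSON fields; leave the rest in plaintext."""
    doc = json.loads(payload)
    for name in fields:
        if name in doc:
            doc[name] = encrypt(str(doc[name]))
    return json.dumps(doc)


# Placeholder ONLY: reversing a string is not encryption. A real deployment would
# use RSA encryption under a public key whose private key stays in AWS KMS.
def demo_cipher(value: str) -> str:
    return value[::-1]


body = '{"name": "Ana", "ssn": "123-45-6789", "card_number": "4111111111111111"}'
print(encrypt_fields(body, SENSITIVE_FIELDS, demo_cipher))
```

Because the cipher is a parameter, the field-selection logic can be tested locally and the real key-backed encryptor can be swapped in at the edge.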

Encryption at Rest and in Transit

Enable default encryption on all storage assets: S3 buckets, EBS volumes, and RDS databases. Use customer-managed keys (CMKs) in AWS KMS for granular control over key rotation and access policies. Enforce TLS across all service endpoints. Place databases in private subnets and restrict access through security groups, network ACLs, and VPC endpoints.
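As an illustration of the at-rest and in-transit controls above, the sketch below builds two S3 settings as plain Python dicts. The first is a default-encryption rule using SSE-KMS with a customer managed key. The second is a bucket policy that denies any request not made over TLS, using the `aws:SecureTransport` condition key. The bucket name and key ARN are placeholders. Applying the settings would use boto3's `put_bucket_encryption` and `put_bucket_policy` calls, wrapped in a function that is not executed here.

```python
import json

BUCKET = "example-sensitive-bucket"  # placeholder
KMS_KEY_ARN = "arn:aws:kms:us-east-1:111122223333:key/EXAMPLE"  # placeholder


def default_encryption_config(key_arn: str) -> dict:
    """SSE-KMS default encryption with a customer managed key."""
    return {
        "Rules": [{
            "ApplyServerSideEncryptionByDefault": {
                "SSEAlgorithm": "aws:kms",
                "KMSMasterKeyID": key_arn,
            },
            "BucketKeyEnabled": True,  # S3 Bucket Keys reduce KMS request volume
        }]
    }


def tls_only_policy(bucket: str) -> dict:
    """Deny every S3 action on the bucket when the request is not made over TLS."""
    return {
        "Version": "2012-10-17",
        "Statement": [{
            "Sid": "DenyInsecureTransport",
            "Effect": "Deny",
            "Principal": "*",
            "Action": "s3:*",
            "Resource": [f"arn:aws:s3:::{bucket}", f"arn:aws:s3:::{bucket}/*"],
            "Condition": {"Bool": {"aws:SecureTransport": "false"}},
        }],
    }


def apply_bucket_controls(bucket: str, key_arn: str) -> None:
    """Apply both settings; requires boto3 and AWS credentials (not run here)."""
    import boto3
    s3 = boto3.client("s3")
    s3.put_bucket_encryption(
        Bucket=bucket,
        ServerSideEncryptionConfiguration=default_encryption_config(key_arn),
    )
    s3.put_bucket_policy(Bucket=bucket, Policy=json.dumps(tls_only_policy(bucket)))


print(json.dumps(tls_only_policy(BUCKET), indent=2))
```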

Strict IAM and Access Controls

Apply least privilege across all IAM roles. Use AWS IAM Access Analyzer to audit permissions and identify overly broad access. Where appropriate, integrate the AWS Encryption SDK with KMS for client-side encryption before data reaches any storage service.
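IAM Access Analyzer is the managed way to audit permissions. As a complement, a quick local check can catch the most obvious least-privilege violations in a policy document before it is deployed: `Allow` statements with wildcard actions or resources. The sketch below is a simplified, hypothetical linter. It ignores conditions, `NotAction`, and resource-based policies, so treat it as a first-pass filter rather than a replacement for Access Analyzer.

```python
def broad_statements(policy: dict) -> list:
    """Return Sids (or positions) of Allow statements using wildcard actions/resources."""
    findings = []
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):  # a single statement may be written as an object
        statements = [statements]
    for i, stmt in enumerate(statements):
        if stmt.get("Effect") != "Allow":
            continue
        actions = stmt.get("Action", [])
        resources = stmt.get("Resource", [])
        actions = [actions] if isinstance(actions, str) else actions
        resources = [resources] if isinstance(resources, str) else resources
        wild_action = any(a == "*" or a.endswith(":*") for a in actions)
        wild_resource = "*" in resources
        if wild_action or wild_resource:
            findings.append(stmt.get("Sid", f"statement[{i}]"))
    return findings


policy = {
    "Version": "2012-10-17",
    "Statement": [
        {"Sid": "ReadReports", "Effect": "Allow", "Action": "s3:GetObject",
         "Resource": "arn:aws:s3:::reports/*"},
        {"Sid": "TooBroad", "Effect": "Allow", "Action": "s3:*", "Resource": "*"},
    ],
}
print(broad_statements(policy))  # ['TooBroad']
```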

Automated Compliance Enforcement

Use CloudFormation or Systems Manager to enforce encryption and access policies consistently. Centralize logging through CloudTrail and route findings to AWS Security Hub. This reduces the risk of shadow data and configuration drift that often leads to exposure.

What Is AWS Macie and How Does It Help Protect Sensitive Data?

Amazon Macie is a managed security service that uses machine learning and pattern matching to discover, classify, and monitor sensitive data in Amazon S3. It continuously evaluates objects across your S3 inventory, detecting PII, financial data, PHI, and other regulated content without manual configuration per bucket.

Key capabilities:

- Generates findings with sensitivity scores and contextual labels for risk-based prioritization

- Integrates with AWS Security Hub and Amazon EventBridge for automated response workflows

- Can trigger Lambda functions to restrict public access the moment sensitive data is detected

- Provides continuous, auditable evidence of data discovery for GDPR, HIPAA, and PCI-DSS compliance
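The Macie-to-Lambda response described above might look roughly like the handler below: EventBridge delivers a Macie finding, and the function turns on S3 Block Public Access for the affected bucket. The event fields used (`detail.severity.description` and `detail.resourcesAffected.s3Bucket.name`) are assumed to follow the Macie finding format; verify them against a sample event before relying on them. Which severities trigger remediation is a hypothetical policy choice. The S3 client is injected so the logic can be exercised locally with a stand-in; in Lambda it would be `boto3.client("s3")`.

```python
# Severities that trigger automatic remediation (a hypothetical policy choice).
REMEDIATE_SEVERITIES = {"High", "Medium"}

BLOCK_ALL_PUBLIC = {
    "BlockPublicAcls": True,
    "IgnorePublicAcls": True,
    "BlockPublicPolicy": True,
    "RestrictPublicBuckets": True,
}


def handle_macie_finding(event: dict, s3_client) -> str:
    """Block public access on the bucket named in a Macie finding event."""
    detail = event.get("detail", {})
    severity = detail.get("severity", {}).get("description")
    bucket = detail.get("resourcesAffected", {}).get("s3Bucket", {}).get("name")
    if not bucket or severity not in REMEDIATE_SEVERITIES:
        return "skipped"
    s3_client.put_public_access_block(
        Bucket=bucket,
        PublicAccessBlockConfiguration=BLOCK_ALL_PUBLIC,
    )
    return f"blocked public access on {bucket}"


def lambda_handler(event, context):
    import boto3  # bundled with the AWS Lambda Python runtime
    return handle_macie_finding(event, boto3.client("s3"))


class _FakeS3:
    """Stand-in client for local testing; records calls instead of calling AWS."""

    def __init__(self):
        self.calls = []

    def put_public_access_block(self, **kwargs):
        self.calls.append(kwargs)


fake = _FakeS3()
sample = {"detail": {"severity": {"description": "High"},
                     "resourcesAffected": {"s3Bucket": {"name": "leaky-bucket"}}}}
print(handle_macie_finding(sample, fake))  # blocked public access on leaky-bucket
```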

Understanding what sensitive data exposure looks like is the first step toward preventing it. Classifying data by sensitivity level lets you apply proportionate controls and limit blast radius if a breach occurs.

AWS Macie Pricing Breakdown

Macie offers a 30-day free trial covering up to 150 GB of automated discovery and bucket inventory. After that:

| Component | Cost |
|---|---|
| S3 bucket monitoring | $0.10 per bucket/month (prorated daily), up to 10,000 buckets |
| Automated discovery | $0.01 per 100,000 S3 objects/month + $1 per GB inspected beyond the first 1 GB |
| Targeted discovery jobs | $1 per GB inspected; standard S3 GET/LIST request costs apply separately |

For large environments, scope automated discovery to your highest-risk buckets first and use targeted jobs for periodic deep scans of lower-priority storage. This balances coverage with cost efficiency.
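To turn the rate table into a budget figure, the sketch below applies the per-unit prices exactly as listed above to a hypothetical month. It ignores daily proration and S3 request charges, and actual Macie pricing varies by region and may be tiered. Treat it as back-of-the-envelope arithmetic and confirm against the AWS pricing page.

```python
def macie_monthly_estimate(buckets: int, objects: int, auto_gb: float, targeted_gb: float) -> float:
    """Rough monthly Macie cost using the per-unit rates from the table above."""
    bucket_cost = 0.10 * buckets              # bucket monitoring (proration ignored)
    object_cost = 0.01 * (objects / 100_000)  # automated discovery object monitoring
    auto_cost = 1.00 * max(auto_gb - 1, 0)    # first 1 GB inspected is not charged
    targeted_cost = 1.00 * targeted_gb        # S3 GET/LIST request costs not included
    return round(bucket_cost + object_cost + auto_cost + targeted_cost, 2)


# Hypothetical month: 200 buckets, 5M objects, 101 GB auto-inspected, 50 GB targeted.
print(macie_monthly_estimate(200, 5_000_000, 101, 50))  # 170.5
```

In this example, inspected volume ($150) dwarfs monitoring ($20.50), which is why scoping automated discovery to high-risk buckets has the biggest effect on the bill.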

What Is AWS GuardDuty and How Does It Enhance Data Protection?

Amazon GuardDuty is a managed threat detection service that continuously monitors CloudTrail events, VPC flow logs, and DNS logs. It uses machine learning, anomaly detection, and integrated threat intelligence to surface indicators of compromise.

What GuardDuty detects:

- Unusual API calls and atypical S3 access patterns

- Abnormal data exfiltration attempts

- Compromised credentials

- Multi-stage attack sequences correlated from isolated events

Findings and underlying log data are encrypted at rest using KMS and in transit via HTTPS. GuardDuty findings route to Security Hub or EventBridge for automated remediation, making it a key component of real-time data protection.
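Routing findings to EventBridge usually starts with an event pattern. The sketch below builds one that matches GuardDuty findings with a numeric severity of 7 or higher (GuardDuty's high-severity band starts at 7.0) and prints it as JSON for use in an EventBridge rule. The threshold is an illustrative choice, and the rule's target (for example, a remediation Lambda) is left out.

```python
import json


def guardduty_severity_pattern(min_severity: float = 7.0) -> dict:
    """EventBridge event pattern for GuardDuty findings at or above a severity."""
    return {
        "source": ["aws.guardduty"],
        "detail-type": ["GuardDuty Finding"],
        # EventBridge numeric matching: severity >= min_severity
        "detail": {"severity": [{"numeric": [">=", min_severity]}]},
    }


print(json.dumps(guardduty_severity_pattern(), indent=2))
```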

Using CloudWatch Data Protection Policies to Safeguard Sensitive Information

Applications frequently log more than intended: request payloads, error messages, and debug output can all contain sensitive data. CloudWatch Logs data protection policies automatically detect and mask sensitive information as log events are ingested, before storage.

How to Configure a Policy

- Create a JSON-formatted data protection policy for a specific log group or at the account level

- Specify data types to protect using over 100 managed data identifiers (SSNs, credit cards, emails, PHI)

- The policy applies pattern matching and ML in real time to audit or mask detected data
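A policy document of the kind described above might look like the following. It is built in Python so it can be passed to the CloudWatch Logs `PutDataProtectionPolicy` API (`put_data_protection_policy` in boto3). It audits and masks two managed data identifiers, email addresses and credit card numbers. The policy name, log group, and choice of identifiers are illustrative assumptions. Check the identifier names against AWS's managed data identifier list before use.

```python
import json

IDENTIFIERS = [
    "arn:aws:dataprotection::aws:data-identifier/EmailAddress",
    "arn:aws:dataprotection::aws:data-identifier/CreditCardNumber",
]


def data_protection_policy(name: str = "mask-pii") -> dict:
    """Audit and mask matches for the listed managed data identifiers."""
    return {
        "Name": name,
        "Description": "Audit and mask emails and card numbers",
        "Version": "2021-06-01",
        "Statement": [
            {
                "Sid": "audit",
                "DataIdentifier": IDENTIFIERS,
                # FindingsDestination can name a log group, S3 bucket, or Firehose
                # stream for audit reports; it is left empty in this sketch.
                "Operation": {"Audit": {"FindingsDestination": {}}},
            },
            {
                "Sid": "mask",
                "DataIdentifier": IDENTIFIERS,
                "Operation": {"Deidentify": {"MaskConfig": {}}},
            },
        ],
    }


def attach_policy(log_group: str) -> None:
    """Attach the policy to a log group; requires boto3 and credentials (not run here)."""
    import boto3
    boto3.client("logs").put_data_protection_policy(
        logGroupIdentifier=log_group,
        policyDocument=json.dumps(data_protection_policy()),
    )


print(json.dumps(data_protection_policy(), indent=2))
```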

Important Operational Considerations

- Only users with the logs:Unmask IAM permission can view unmasked data

- Encrypt log groups containing sensitive data using AWS KMS for an additional layer

- Masking only applies to data ingested after a policy is active; existing log data remains unmasked

- Set up alarms on the LogEventsWithFindings metric and route findings to S3 or Kinesis Data Firehose for audit trails

Implement data protection policies at the point of log group creation rather than retroactively. Applying them after the fact is the single most common mistake teams make with CloudWatch masking.

How Sentra Extends AWS Data Protection with Full Visibility

Native AWS tools like Macie, GuardDuty, and CloudWatch provide strong point-in-time controls, but they don't give you a unified view of how sensitive data moves across accounts, services, and regions. This is where minimizing your data attack surface requires a purpose-built platform.

What Sentra adds:

- Discovers and governs sensitive data at petabyte scale inside your own environment, so data never leaves your control

- Maps how sensitive data moves across AWS services and identifies shadow and redundant/obsolete/trivial (ROT) data

- Enforces data-driven guardrails to prevent unauthorized AI access

- Typically reduces cloud storage costs by ~20% by eliminating data sprawl

Knowing how to protect sensitive data in AWS means combining the right services (KMS for key management, Macie for S3 discovery, GuardDuty for threat detection, CloudWatch policies for log masking) with consistent access controls, encryption at every layer, and continuous monitoring. No single tool is sufficient. The organizations that get this right treat data protection as an ongoing operational discipline: audit IAM policies regularly, enforce encryption by default, classify data before it proliferates, and ensure your logging pipeline never exposes what it was meant to record.

How to Protect Sensitive Data in GCP

Protecting sensitive data in Google Cloud Platform has become a critical priority for organizations navigating cloud security complexities in 2026. As enterprises migrate workloads and adopt AI-driven technologies, understanding how to protect sensitive data in GCP is essential for maintaining compliance, preventing breaches, and ensuring business continuity. Google Cloud offers a comprehensive suite of native security tools designed to discover, classify, and safeguard critical information assets.

Key GCP Data Protection Services You Should Use

Google Cloud Platform provides several core services specifically designed to protect sensitive data across your cloud environment:

- Cloud Key Management Service (Cloud KMS) enables you to create, manage, and control cryptographic keys for both software-based and hardware-backed encryption. Customer-Managed Encryption Keys (CMEK) give you enhanced control over the encryption lifecycle, ensuring data at rest remains encrypted under keys you directly control.

- Cloud Data Loss Prevention (DLP) API automatically scans data repositories to detect personally identifiable information (PII) and other regulated data types, then applies masking, redaction, or tokenization to minimize exposure risks.

- Secret Manager provides a centralized, auditable solution for managing API keys, passwords, and certificates, keeping secrets separate from application code while enforcing strict access controls.

- VPC Service Controls creates security perimeters around cloud resources, keeping sensitive data within defined trust boundaries and limiting data exfiltration even when credentials are compromised.

Getting Started with Sensitive Data Protection in GCP

Implementing effective data protection begins with a clear strategy. Start by identifying and classifying your sensitive data using GCP's discovery and profiling tools available through the Cloud DLP API. These tools scan your resources and generate detailed profiles showing what types of sensitive information you're storing and where it resides.

Define the scope of protection needed based on your specific data types and regulatory requirements, whether handling healthcare records subject to HIPAA, financial data governed by PCI DSS, or personal information covered by GDPR. Configure your processing approach based on operational needs: use synchronous content inspection for immediate, in-memory processing, or asynchronous methods when scanning data in BigQuery or Cloud Storage.

Implement robust Identity and Access Management (IAM) practices with role-based access controls to ensure only authorized users can access sensitive data. Configure inspection jobs by selecting the infoTypes to scan for, setting up schedules, choosing appropriate processing methods, and determining where findings are stored.

Using Google DLP API to Discover and Classify Sensitive Data

The Google DLP API provides comprehensive capabilities for discovering, classifying, and protecting sensitive data across your GCP projects. Enable the DLP API in your Google Cloud project and configure it to scan data stored in Cloud Storage, BigQuery, and Datastore.

Inspection and Classification

Initiate inspection jobs either on demand using methods like InspectContent or CreateDlpJob, or schedule continuous monitoring using job triggers via CreateJobTrigger. The API automatically classifies detected content by matching data against predefined "info types" or custom criteria, assigning a likelihood rating to each finding to help you prioritize protection efforts. Reusable inspection templates keep classification settings consistent across multiple scans.
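For on-demand inspection, a request to `InspectContent` with the `google-cloud-dlp` Python client looks roughly like the sketch below. The request body is built by a plain function so it can be checked without credentials. The project ID, infoTypes, and likelihood threshold are illustrative assumptions, and the call itself requires the library and Google Cloud credentials.

```python
def build_inspect_request(project_id: str, text: str) -> dict:
    """Request body for DlpServiceClient.inspect_content (synchronous inspection)."""
    return {
        "parent": f"projects/{project_id}/locations/global",
        "inspect_config": {
            "info_types": [
                {"name": "EMAIL_ADDRESS"},
                {"name": "US_SOCIAL_SECURITY_NUMBER"},
            ],
            "min_likelihood": "POSSIBLE",  # drop findings rated below this likelihood
            "include_quote": True,         # return the matched text with each finding
        },
        "item": {"value": text},
    }


def inspect(project_id: str, text: str) -> list:
    """Run the inspection; requires google-cloud-dlp and credentials (not run here)."""
    from google.cloud import dlp_v2
    client = dlp_v2.DlpServiceClient()
    response = client.inspect_content(request=build_inspect_request(project_id, text))
    return [(f.info_type.name, f.likelihood.name, f.quote) for f in response.result.findings]


print(build_inspect_request("my-project", "Contact: jane@example.com")["parent"])
```

For large BigQuery or Cloud Storage scans, the same `inspect_config` shape would move into an asynchronous `CreateDlpJob` request instead.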

De-identification Techniques

Once sensitive data is identified, apply de-identification techniques to protect it:

- Masking (obscuring parts of the data)

- Redaction (completely removing sensitive segments)

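- Tokenization (replacing values with surrogate tokens)

- Format-preserving encryption (encrypting values while keeping their original format and length)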

These transformation techniques ensure that even if sensitive data is inadvertently exposed, it remains protected according to your organization's privacy and compliance requirements.
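To show what each technique preserves, here is a local Python sketch of masking, redaction, and deterministic tokenization applied to a single value. It illustrates the concepts only and is not the Cloud DLP API. The HMAC-based tokenizer is one common way to tokenize: the same input always yields the same token, with no lookup table. Unlike reversible schemes, it cannot be decrypted back, so re-identification would need a separate mapping. The key is a placeholder that would normally come from a secret store such as Secret Manager. Format-preserving encryption needs a dedicated cipher and is not shown.

```python
import hashlib
import hmac

SECRET_KEY = b"replace-with-a-managed-secret"  # placeholder; never hard-code in practice


def mask(value: str, visible: int = 4, char: str = "#") -> str:
    """Masking: obscure all but the last `visible` characters."""
    keep = min(visible, len(value))
    return char * (len(value) - keep) + value[len(value) - keep:]


def redact(text: str, secret: str) -> str:
    """Redaction: remove the sensitive segment entirely."""
    return text.replace(secret, "")


def tokenize(value: str, key: bytes = SECRET_KEY) -> str:
    """Deterministic tokenization: the same input always yields the same surrogate."""
    digest = hmac.new(key, value.encode(), hashlib.sha256).hexdigest()
    return "TOK_" + digest[:16]


ssn = "123-45-6789"
print(mask(ssn))                                # #######6789
print(repr(redact(f"SSN on file: {ssn}", ssn)))  # 'SSN on file: '
print(tokenize(ssn) == tokenize(ssn))           # True
```

Masking keeps enough of the value for a human to recognize it, redaction keeps nothing, and tokenization keeps joinability across datasets without exposing the original.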

Preventing Data Loss in Google Cloud Environments

Preventing data loss requires a multi-layered approach combining discovery, inspection, transformation, and continuous monitoring. Begin with comprehensive data discovery using the DLP API to scan your data repositories. Define scan configurations specifying which resources and infoTypes to inspect and how frequently to perform scans. Leverage both synchronous and asynchronous inspection approaches. Synchronous methods provide immediate results using content.inspect requests, while asynchronous approaches using DlpJobs suit large-scale scanning operations. Apply transformation methods, including masking, redaction, tokenization, bucketing, and date shifting, to obfuscate sensitive details while maintaining data utility for legitimate business purposes.
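Bucketing and date shifting are less familiar than masking, so here is a small local illustration. Bucketing replaces an exact value with the range that contains it. Date shifting moves every date belonging to one individual by the same pseudo-random offset, so intervals between events survive while the real dates do not. The bucket width, the ±30-day window, and the keyed per-person offset are assumptions for this sketch, not Cloud DLP defaults.

```python
import hashlib
import hmac
from datetime import date, timedelta

SHIFT_KEY = b"replace-with-a-managed-secret"  # placeholder key


def bucket_age(age: int, width: int = 10) -> str:
    """Bucketing: replace an exact value with the range that contains it."""
    low = (age // width) * width
    return f"{low}-{low + width - 1}"


def shift_dates(person_id: str, dates: list, max_days: int = 30) -> list:
    """Date shifting: move all of one person's dates by the same keyed offset."""
    digest = hmac.new(SHIFT_KEY, person_id.encode(), hashlib.sha256).digest()
    offset = int.from_bytes(digest[:4], "big") % (2 * max_days + 1) - max_days
    return [d + timedelta(days=offset) for d in dates]


admitted, discharged = date(2024, 3, 1), date(2024, 3, 8)
shifted = shift_dates("patient-42", [admitted, discharged])
print(bucket_age(37))                  # 30-39
print((shifted[1] - shifted[0]).days)  # 7 (length of stay preserved)
```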

Combine de-identification efforts with encryption for both data at rest and in transit. Embed DLP measures into your overall security framework by integrating with role-based access controls, audit logging, and continuous monitoring. Automate these practices using the Cloud DLP API to connect inspection results with other services for streamlined policy enforcement.

Applying Data Loss Prevention in Google Workspace for GCP Workloads

Organizations using both Google Workspace and GCP can create a unified security framework by extending DLP policies across both environments. In the Google Workspace Admin console, create custom rules that detect sensitive patterns in emails, documents, and other content. These policies trigger actions like blocking sharing, issuing warnings, or notifying administrators when sensitive content is detected.

Google Workspace DLP automatically inspects content within Gmail, Drive, and Docs for data patterns matching your DLP rules. Extend this protection to your GCP workloads by integrating with Cloud DLP, feeding findings from Google Workspace into Cloud Logging, Pub/Sub, or other GCP services. This creates a consistent detection and remediation framework across your entire cloud environment, ensuring data is safeguarded both at its source and as it flows into or is processed within your Google Cloud Platform workloads.

Enhancing GCP Data Protection with Advanced Security Platforms

While GCP's native security services provide robust foundational protection, many organizations require additional capabilities to address the complexities of modern cloud and AI environments. Sentra is a cloud-native data security platform that discovers and governs sensitive data at petabyte scale inside your own environment, ensuring data never leaves your control. The platform provides complete visibility into where sensitive data lives, how it moves, and who can access it, while enforcing strict data-driven guardrails.

Sentra's in-environment architecture maps how data moves and prevents unauthorized AI access, helping enterprises securely adopt AI technologies. The platform eliminates shadow and ROT (redundant, obsolete, trivial) data, which not only secures your organization for the AI era but typically reduces cloud storage costs by approximately 20 percent. Learn more about securing sensitive data in Google Cloud with advanced data security approaches.

Understanding GCP Sensitive Data Protection Pricing

GCP Sensitive Data Protection operates on a consumption-based, pay-as-you-go pricing model. Your costs reflect the actual amount of data you scan and process, as well as the number of operations performed. When estimating your budget, consider several key factors:

| Cost Factor | Impact on Pricing |
|---|---|
| Data Volume | Primary cost driver; larger datasets or more frequent scans lead to higher bills |
| Operation Frequency | Continuous scanning with detailed detection policies generates more processing activity |
| Feature Complexity | Specific features and policies enabled can add to processing requirements |
| Associated Resources | Network or storage fees may accumulate when data processing integrates with other services |

To better manage spending, estimate your expected data volume and scan frequency upfront. Apply selective scanning or filtering techniques, such as scanning only changed data or using file filters to focus on high-risk repositories. Utilize Google's pricing calculator along with cost monitoring dashboards and budget alerts to track actual usage against projections. For organizations concerned about how sensitive cloud data gets exposed, investing in proper DLP configuration can prevent costly breaches that far exceed the operational costs of protection services.
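Scanning only changed data is straightforward to express. Keep a watermark of the last scan time and select objects modified since then, optionally restricted to high-risk locations and scannable file types. The object records, prefixes, and extensions below are hypothetical. In practice the metadata would come from a Cloud Storage listing or table modification times, and Sensitive Data Protection's own job settings (such as file-type and timespan options) should be preferred where they fit.

```python
from datetime import datetime, timezone
from typing import NamedTuple


class StoredObject(NamedTuple):
    path: str
    updated: datetime


HIGH_RISK_PREFIXES = ("gs://finance/", "gs://hr/")  # hypothetical
SCANNED_EXTENSIONS = (".csv", ".json", ".parquet")  # hypothetical


def objects_to_scan(objects, last_scan: datetime) -> list:
    """Select changed objects in high-risk locations with scannable file types."""
    return [
        o.path for o in objects
        if o.updated > last_scan
        and o.path.startswith(HIGH_RISK_PREFIXES)
        and o.path.endswith(SCANNED_EXTENSIONS)
    ]


last_scan = datetime(2026, 1, 1, tzinfo=timezone.utc)
objects = [
    StoredObject("gs://finance/q4.csv", datetime(2026, 1, 5, tzinfo=timezone.utc)),
    StoredObject("gs://finance/q3.csv", datetime(2025, 10, 2, tzinfo=timezone.utc)),
    StoredObject("gs://hr/logo.png", datetime(2026, 1, 6, tzinfo=timezone.utc)),
    StoredObject("gs://public/readme.json", datetime(2026, 1, 7, tzinfo=timezone.utc)),
]
print(objects_to_scan(objects, last_scan))  # ['gs://finance/q4.csv']
```

Because cost scales with bytes inspected, every object filtered out here is volume you do not pay to scan.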

Successfully protecting sensitive data in GCP requires a comprehensive approach combining native Google Cloud services with strategic implementation and ongoing governance. By leveraging Cloud KMS for encryption management, the Cloud DLP API for discovery and classification, Secret Manager for credential protection, and VPC Service Controls for network segmentation, organizations can build robust defenses against data exposure and loss.

The key to effective implementation lies in developing a clear data protection strategy, automating inspection and remediation workflows, and continuously monitoring your environment as it evolves. For organizations handling sensitive data at scale or preparing for AI adoption, exploring additional GCP security tools and advanced platforms can provide the comprehensive visibility and control needed to meet both security and compliance objectives. As cloud environments grow more complex in 2026 and beyond, understanding how to protect sensitive data in GCP remains an essential capability for maintaining trust, meeting regulatory requirements, and enabling secure innovation.

7 Data Loss Prevention Best Practices to Cut False Positives and Blind Spots

Most security leaders aren’t asking for “more DLP.” They’re asking why the DLP they already own is noisy, brittle, and still misses real risk. You turn on endpoint, email, and network DLP. You import PCI and PII templates. Within weeks, users complain that normal work is blocked, so policies get relaxed or disabled. Analysts drown in meaningless alerts. Meanwhile, you know there are blind spots in SaaS, cloud data stores, and AI tools that DLP never sees.

The problem usually isn’t that you bought the “wrong” DLP. It’s that DLP is doing too much on its own: trying to discover sensitive data, understand business context, and enforce policies in one step. To improve the functioning of your DLP, you have to separate those responsibilities and give DLP the data intelligence it has always been missing.

This guide walks through seven data loss prevention best practices that:

- Cut DLP false positives and alert fatigue

- Close blind spots across SaaS, cloud, and AI

- Show how to use Data Security Posture Management (DSPM) alongside DLP instead of treating them as competitors

1. Start with a specific DLP problem, not a vague mandate

Many DLP programs are born from a broad requirement like “prevent data loss” or “achieve compliance.” That sounds reasonable, but it’s too fuzzy to drive design decisions. If everything is “data loss,” every event looks important and tuning turns into guesswork. Instead, define one or two sharp, testable problems to solve in the next 90 days.

For example:

- Reduce DLP false positives by 50% while maintaining coverage across email and collaboration tools.

- Eliminate unknown PHI exposures in Microsoft 365 and Google Workspace before the next HIPAA audit.

- Stop real customer data from leaking into lower environments and AI training pipelines.

Once you frame the goal concretely, a few things fall into place. You know what to measure (false-positive rate, blind-spot coverage, number of mis‑labeled data stores). You can see which parts are posture problems (where data lives, how it’s labeled, who can touch it) and which are pure enforcement. And you have a clear way to tell whether the program is actually improving, rather than just “having DLP turned on.” In short, give your DLP initiative a narrow, measurable purpose before you touch any rules.
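Measuring the false-positive rate only requires that analysts record a disposition for each alert they triage. The minimal sketch below assumes a hypothetical alert export in which each alert has a channel and a `true_positive` or `false_positive` disposition. It computes the rate per channel, which gives you a baseline for a goal like "cut false positives by 50%."

```python
from collections import defaultdict


def false_positive_rate(alerts) -> dict:
    """Share of triaged alerts marked false_positive, per channel."""
    totals = defaultdict(int)
    false_pos = defaultdict(int)
    for alert in alerts:
        if alert["disposition"] not in ("true_positive", "false_positive"):
            continue  # untriaged alerts don't count toward the rate
        totals[alert["channel"]] += 1
        if alert["disposition"] == "false_positive":
            false_pos[alert["channel"]] += 1
    return {ch: false_pos[ch] / totals[ch] for ch in totals}


baseline = [
    {"channel": "email", "disposition": "false_positive"},
    {"channel": "email", "disposition": "false_positive"},
    {"channel": "email", "disposition": "true_positive"},
    {"channel": "email", "disposition": "false_positive"},
    {"channel": "web", "disposition": "untriaged"},
]
print(false_positive_rate(baseline))  # {'email': 0.75}
```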

2. Fix classification before you tune DLP rules

Almost every struggling DLP deployment eventually discovers the same truth: it doesn’t really have a DLP problem, it has a classification problem. Traditional DLP leans heavily on pattern matching and static dictionaries. In modern environments, that leads to constant mistakes:

- Internal IDs or ticket numbers mistaken for card data or SSNs

- Highly sensitive business documents missed because they don’t match canned patterns

- Each product (endpoint DLP, email DLP, CASB) trying to re‑implement classification in its own silo
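The first failure mode above is easy to reproduce. A bare 16-digit pattern flags any long number, including ticket or order IDs. Adding a Luhn checksum, which valid payment card numbers satisfy, removes many of those matches. This sketch is illustrative only. It shows why single-signal pattern matching is noisy, not how any particular DLP or DSPM product classifies data, and even with Luhn about 1 in 10 random digit strings still passes.

```python
import re

# 16 digits, optionally separated by spaces or dashes.
CARD_PATTERN = re.compile(r"\b(?:\d[ -]?){15}\d\b")


def luhn_valid(number: str) -> bool:
    """Luhn checksum: double every second digit from the right, sum, check mod 10."""
    digits = [int(c) for c in number if c.isdigit()]
    total = 0
    for i, d in enumerate(reversed(digits)):
        if i % 2 == 1:
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def card_candidates(text: str, use_luhn: bool) -> list:
    """Return regex matches, optionally filtered by the Luhn checksum."""
    matches = [m.group() for m in CARD_PATTERN.finditer(text)]
    return [m for m in matches if luhn_valid(m)] if use_luhn else matches


text = "Ticket 1234567890123456 mentions card 4111 1111 1111 1111."
print(card_candidates(text, use_luhn=False))  # both numbers match the regex
print(card_candidates(text, use_luhn=True))   # ['4111 1111 1111 1111']
```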

This is exactly the gap DSPM is designed to fill. A platform like Sentra DSPM continuously:

- Discovers sensitive data at scale across cloud, SaaS, data warehouses, on‑prem stores, and AI pipelines, without copying it out of your environment

- Classifies that data using multi‑signal, AI‑driven models that combine entity‑level signals (PII, PCI, PHI fields, secrets) with file‑level semantics (document type, business function, domain)

- Labels assets consistently, for example, by auto‑applying Microsoft Purview Information Protection (MPIP) labels that downstream tools, including DLP, can consume

Once you trust the labels, DLP can stop trying to “guess” sensitivity from raw content and location. Policies get simpler and more stable because they key off well‑defined labels instead of brittle regular expressions.

Best practice: before you tweak another DLP rule, invest in getting classification right with DSPM, then let DLP enforce on the resulting labels.

3. Reduce DLP false positives with labels and context

“Reduce DLP false positives” is one of the most common reasons security teams revisit their DLP strategy. Most false positives come from two root causes:

- Over‑broad content rules that match anything vaguely sensitive

- Lack of business context: who the user is, which system they’re in, where the data is going, and whether that’s normal behavior

The first step is to move to label‑driven policies wherever possible. Instead of “block anything that looks like a credit card number,” write rules like “block sending files labeled PCI to personal email domains” or “quarantine emails with PHI labels sent outside approved partners.” DSPM plus accurate labeling makes that possible at scale.

The second step is to bring in more context. A file labeled Confidential going to a known external auditor is very different from that same file going to a new personal Dropbox account at 2 a.m.

When you combine labels with:

- Identity and role

- Channel (email, web, SaaS, AI)

- Destination and geography

- Simple behavior analytics (volume, unusual time, unusual location)

you can reserve hard blocks and escalations for situations that actually look risky.
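The label-plus-context approach can be sketched as a small decision function. The label sets the baseline, and the destination and behavior signals decide whether to allow, prompt, or block. The labels, domains, volume threshold, and "unusual hour" window are hypothetical values for illustration, not defaults from any DLP product.

```python
from dataclasses import dataclass

APPROVED_PARTNER_DOMAINS = {"auditor.example.com"}  # hypothetical
PERSONAL_DOMAINS = {"gmail.com", "dropbox.com"}     # hypothetical
REGULATED_LABELS = {"PCI", "PHI"}


@dataclass
class Event:
    label: str
    destination_domain: str
    hour: int        # local hour of the transfer, 0-23
    file_count: int


def decide(event: Event) -> str:
    """Return 'allow', 'prompt', or 'block' from the label plus context."""
    if event.label not in REGULATED_LABELS | {"Confidential"}:
        return "allow"
    if event.destination_domain in APPROVED_PARTNER_DOMAINS:
        return "allow"
    if event.label in REGULATED_LABELS:
        return "block"   # regulated data leaving approved partners
    unusual = event.hour < 6 or event.file_count > 50
    if event.destination_domain in PERSONAL_DOMAINS and unusual:
        return "block"   # Confidential file, personal account, odd timing or volume
    return "prompt"      # Confidential elsewhere: warn and ask for justification


print(decide(Event("Confidential", "auditor.example.com", 14, 3)))  # allow
print(decide(Event("Confidential", "dropbox.com", 2, 3)))           # block
print(decide(Event("Confidential", "dropbox.com", 14, 3)))          # prompt
```

The rules stay short because they key off labels; the context checks only decide how hard to respond.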

Finally, you need a real feedback loop. Let users override certain DLP prompts with a required justification and log “reported false positives.” Review those regularly with business owners. That feedback is invaluable for tightening rules where they truly matter and relaxing them where they are just creating friction. In practice, enforce on labels first, then refine with business context and user feedback, instead of trying to make regexes infinitely smarter.

4. Treat DSPM and DLP as a single system, not a “DSPM vs DLP” choice

If you search for “DSPM vs DLP,” you’ll find plenty of comparison articles and vendor takes. From the customer’s side, though, the most useful framing is not “which one?” but “what does each do, and how do they work together?”

At a high level:

- DSPM focuses on data-at-rest intelligence: it shows what sensitive data you have, where it resides, who and what can access it, how it’s configured, and whether that posture is acceptable for your risk and compliance requirements.

- DLP focuses on data-in-motion enforcement: it monitors data leaving (or moving within) the organization via email, endpoints, web, SaaS, and APIs, and decides what to block, encrypt, or just log based on policies.

When you connect them, you get a closed loop:

- DSPM discovers, classifies, and labels sensitive data consistently across cloud, SaaS, on‑prem, and AI.

- Data access governance uses that context to right‑size permissions and remediate over‑exposure.

- DLP and related controls enforce label‑driven policies at the edges, with far fewer false positives and blind spots.

DSPM doesn’t replace DLP; it makes DLP accurate, scalable, and cloud/AI‑ready. Takeaway: stop framing it as DSPM versus DLP. Your DLP will only be as good as the DSPM feeding it.

5. Bring SaaS, cloud, and AI into scope for DLP

Most older DLP programs were built around email and endpoints. But in cloud‑first organizations, the riskiest data flows now run through:

- Cloud and object storage (S3, GCS, Azure Blob)

- Data warehouses and lakes (Snowflake, BigQuery, Databricks)

- SaaS platforms (M365, Google Workspace, Box, Salesforce, Slack, Teams)

- AI systems (M365 Copilot, Gemini for Google Workspace, Bedrock, custom RAG apps)

Trying to bolt classic inline DLP controls onto all of those surfaces is expensive and incomplete. You’ll still miss shadow data, lower environments that contain real customer data, and AI pipelines that consume sensitive content by design.

DSPM gives you a more scalable pattern:

- Inventory and classify sensitive data where it sits across cloud, SaaS, and AI.

- Use that intelligence to drive native controls: MPIP labels and Microsoft Purview DLP, CASB/SSE policies, Snowflake dynamic masking, IAM/CIEM, and AI guardrails.

For example, a healthcare organization might combine:

- Sentra’s DSPM to discover PHI in Google Drive, M365, Salesforce, and Snowflake

- Auto‑labeling of that PHI so Purview and DLP can enforce correctly

- AI‑aware classification to govern which labeled data copilots and agents are allowed to see
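To make the auto‑labeling step concrete, here is a minimal Python sketch of one way classification results could be turned into a sensitivity label that Purview or another DLP engine keys on. The label ladder, the data‑class‑to‑label mapping, the example locations, and the `Asset` and `recommend_label` names are all hypothetical. This is not Sentra’s or Microsoft’s API. Real deployments map classifiers to MPIP labels through each vendor’s own configuration.

```python
from dataclasses import dataclass
from typing import Set

# Hypothetical label ladder, ordered from least to most sensitive.
LABELS = ["Public", "Internal", "Confidential", "Highly Confidential"]

# Hypothetical mapping from a detected data class to the minimum label it requires.
CLASS_TO_LABEL = {
    "PII": "Confidential",
    "PHI": "Highly Confidential",
    "PCI": "Highly Confidential",
}


@dataclass
class Asset:
    location: str           # e.g. a Drive file, SharePoint item, or Snowflake table
    data_classes: Set[str]  # classes reported by discovery and classification


def recommend_label(asset: Asset, default: str = "Internal") -> str:
    """Return the most sensitive label implied by any data class in the asset."""
    candidates = [CLASS_TO_LABEL[c] for c in asset.data_classes if c in CLASS_TO_LABEL]
    candidates.append(default)  # assets with no sensitive classes get the default
    return max(candidates, key=LABELS.index)


# Hypothetical locations for illustration only.
inventory = [
    Asset("snowflake://CLAIMS_DB/PUBLIC/CLAIMS", {"PHI", "PII"}),
    Asset("gdrive://marketing/launch-plan.docx", set()),
]
for asset in inventory:
    print(asset.location, "->", recommend_label(asset))
```

Taking the most sensitive label implied by any detected class is a conservative choice. If a file mixes marketing copy with a single PHI record, it gets labeled for the PHI.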

See How Valenz Health Uses DSPM to Protect PHI Across AWS, Azure, and Modern Data Platforms

Similarly, the DLP for Google Workspace story shows how cloud‑native, DSPM‑powered classification is essential to make platform DLP effective for unstructured content in Google Drive, and the same applies to OneDrive, SharePoint, and Teams in M365. Best practice: treat SaaS, cloud, and AI as first‑class DLP surfaces, and use DSPM to make them visible and governable before you try to enforce.

6. Design DLP policies for real workflows, then harden them

Many DLP programs fail not because the tools are weak, but because the policies were designed for whiteboards, not for real users.

Very often:

- The ruleset is too broad, with dozens of overlapping controls per channel

- Business stakeholders had little input, so workflows break in production

- There’s no staged rollout path; policies jump straight from “off” to “block”

A better pattern is to treat DLP policies as something you product‑manage. Start by expressing a very small set of core policies in business terms, independent of channel.

For example:

- “Regulated data (PII, PCI, PHI) must not leave specific regions or approved partners.”

- “Files labeled Highly Confidential must never be shared to personal email or cloud domains.”

- “AI assistants and copilots may only access data labeled Internal or below.”
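Because these policies are written in business terms, the same evaluation can run no matter which channel the event comes from. The Python sketch below encodes all three as checks over a single event record. The region list, partner allow‑list, personal‑domain list, and field names are hypothetical placeholders. A real DLP engine would get these attributes from its own channel connectors.

```python
from dataclasses import dataclass, field
from typing import List, Set

# Hypothetical label ladder, ordered from least to most sensitive.
LABELS = ["Public", "Internal", "Confidential", "Highly Confidential"]

# Hypothetical policy inputs; replace with your own lists.
REGULATED_CLASSES = {"PII", "PCI", "PHI"}
APPROVED_REGIONS = {"eu-west-1", "eu-central-1"}
APPROVED_PARTNERS = {"trusted-partner.example"}
PERSONAL_DOMAINS = {"gmail.com", "outlook.com", "yahoo.com", "dropbox.com"}


@dataclass
class Event:
    label: str                  # sensitivity label on the data being moved or read
    destination: str            # recipient domain, partner, or AI assistant name
    destination_region: str     # where the data would end up
    data_classes: Set[str] = field(default_factory=set)
    is_ai_assistant: bool = False


def violations(event: Event) -> List[str]:
    """Evaluate the three business-level policies; the channel does not matter."""
    found = []
    # Policy 1: regulated data stays in approved regions or with approved partners.
    if (event.data_classes & REGULATED_CLASSES
            and event.destination_region not in APPROVED_REGIONS
            and event.destination not in APPROVED_PARTNERS):
        found.append("regulated-data-outside-approved-region")
    # Policy 2: Highly Confidential files never go to personal email or cloud domains.
    if event.label == "Highly Confidential" and event.destination in PERSONAL_DOMAINS:
        found.append("highly-confidential-to-personal-domain")
    # Policy 3: AI assistants may only access data labeled Internal or below.
    if event.is_ai_assistant and LABELS.index(event.label) > LABELS.index("Internal"):
        found.append("ai-access-above-internal")
    return found
```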

Then map those policies onto channels with graduated responses:

- Log only (for simulation and tuning)

- User prompts (“This file is labeled Confidential; are you sure?”)

- Override with justification (captured for review)

- Hard block + ticket for the riskiest conditions
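One way to think about these graduated responses is as ordered enforcement stages. A policy moves up a stage only when tuning data supports it. This Python sketch assumes a hypothetical 5% false‑positive ceiling for promotion. The `Mode`, `respond`, and `next_mode` names are illustrative and do not correspond to any specific DLP product’s configuration.

```python
from enum import IntEnum
from typing import Optional


class Mode(IntEnum):
    """Graduated enforcement stages, ordered from least to most disruptive."""
    LOG_ONLY = 0
    PROMPT = 1
    OVERRIDE_WITH_JUSTIFICATION = 2
    BLOCK_AND_TICKET = 3


def respond(mode: Mode, justification: Optional[str] = None) -> str:
    """Return the action to take when a policy matches at its current stage."""
    if mode is Mode.LOG_ONLY:
        return "allow+log"              # simulation and tuning only
    if mode is Mode.PROMPT:
        return "prompt-user"            # ask the user to confirm before proceeding
    if mode is Mode.OVERRIDE_WITH_JUSTIFICATION:
        # Allow only when the user gives a reason, which is kept for review.
        return "allow+record-justification" if justification else "block"
    return "block+open-ticket"          # reserved for the riskiest conditions


def next_mode(current: Mode, false_positive_share: float,
              max_false_positive_share: float = 0.05) -> Mode:
    """Promote a policy one stage only if tuning shows it is precise enough."""
    if current < Mode.BLOCK_AND_TICKET and false_positive_share <= max_false_positive_share:
        return Mode(current + 1)
    return current
```

A noisy policy stays at its current stage until it has been tuned. This matches the advice to let real usage show you where strict controls are safe.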

Throughout, involve legal, compliance, HR, and business owners. If DLP events could lead to performance conversations or disciplinary action, you don’t want those stakeholders to be surprised by how the system behaves.

Ready to get started? Read: How to Build a Modern DLP Strategy That Actually Works: DSPM + Endpoint + Cloud DLP

Key idea: roll out label‑driven policies gently, let reality show you where controls can be strict, and only then lock them down.

7. Measure DLP like a product, not a checkbox

If your goal is to “supercharge DLP so it performs better,” you need to know how it’s performing now, and how changes affect it. That means treating DLP like a product with KPIs, not a compliance box you either have or don’t.

High‑performing teams tend to track four categories:

- Coverage: percentage of data stores under DSPM visibility; proportion of sensitive assets correctly labeled; number of major SaaS and cloud platforms within scope.

- Quality: false positive and false negative rates by policy and channel; serious incidents discovered outside DLP that should have triggered it.

- Operational impact: mean time to detect and respond to data‑loss incidents; analyst hours spent per week on DLP triage; number of issues auto‑remediated via workflows (auto‑labeling, auto‑revoking access, auto‑quarantining content).

- Business alignment: frequency of stakeholder requests to disable or bypass policies; time to prepare for audits compared to prior years.
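Several of these quality and coverage metrics can be computed from data most teams already have: analyst verdicts on triaged alerts and a count of in‑scope data stores. The sketch below assumes a hypothetical `Alert` record for each triaged alert. It computes, per policy and channel, the share of alerts that turned out to be false positives. Strictly speaking, that is a false discovery rate, because alert data alone does not record true negatives. False negatives have to come from the incidents discovered outside DLP mentioned above.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable, Tuple


@dataclass
class Alert:
    policy: str
    channel: str
    true_positive: bool  # analyst verdict after triage (hypothetical field)


def false_positive_share(alerts: Iterable[Alert]) -> Dict[Tuple[str, str], float]:
    """Fraction of triaged alerts that were false positives, per (policy, channel)."""
    totals: Dict[Tuple[str, str], int] = defaultdict(int)
    false_pos: Dict[Tuple[str, str], int] = defaultdict(int)
    for alert in alerts:
        key = (alert.policy, alert.channel)
        totals[key] += 1
        if not alert.true_positive:
            false_pos[key] += 1
    return {key: false_pos[key] / totals[key] for key in totals}


def coverage(covered: int, total: int) -> float:
    """Share of data stores (or assets) in scope; 0.0 when the total is unknown."""
    return covered / total if total else 0.0
```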

A platform like Sentra’s gives you much of this telemetry out of the box through its unified inventory, access graph, and integration hooks into SIEM/SOAR, IAM, DLP, SSE/CASB, and ITSM. Bottom line: you can’t fix what you can’t measure. Decide which DLP metrics matter to your organization, and revisit them as your DSPM + DLP architecture evolves.

What “Supercharge Your DLP” means in practice

When teams say “we need to fix our DLP,” they usually don’t mean “rip everything out.” They mean:

- “We don’t trust the alerts we get.”

- “We know there are blind spots in cloud, SaaS, and AI.”

- “We’re tired of fighting with brittle rules that don’t reflect how the business actually works.”

Supercharging DLP in the cloud and AI era starts with data intelligence. That means:

- Using DSPM to discover and classify sensitive data everywhere

- Applying consistent labels that encode business meaning

- Wiring those labels into the DLP and access controls you already own

From there, DLP can finally do what it was always meant to do: prevent real data loss, at scale, without paralyzing your organization or your AI initiatives. That’s the real promise behind “Supercharge Your DLP.” You don’t start over; you make the DLP you already have smarter, quieter where it should be, and louder where it counts.

SOC 2 Without the Spreadsheet Chaos: Automating Evidence for Regulated Data Controls

SOC 2 has become table stakes for cloud‑native and SaaS organizations. But for many security and GRC teams, each SOC 2 cycle still feels like starting from scratch: hunting for the latest access reviews, exporting encryption settings from multiple consoles, and proving that backups and logs exist for every data set in every environment. If your SOC 2 evidence process is still a patchwork of spreadsheets and screenshots, you’re not alone. The missing piece is a data‑centric view of your controls, especially around regulated data.

Why SOC 2 Evidence Is So Hard in Cloud and SaaS Environments

Under SOC 2, Trust Services Criteria like Security, Availability, and Confidentiality translate into specific expectations around data:

- Is sensitive or regulated data discovered and classified consistently?

- Are core controls (encryption, backup, access, logging) actually in place where that data lives?

- Can you show continuous monitoring instead of point‑in‑time screenshots?

In a typical multi‑cloud/SaaS environment:

- Sensitive data is scattered across S3, databases, Snowflake, M365/Google Workspace, Salesforce, and more.

- Different teams own pieces of the puzzle (infra, security, data, app owners).

- Legacy tools are siloed by layer (CSPM for infra, DLP for traffic, privacy catalog for RoPA).

So when SOC 2 comes around, you spend weeks assembling a story instead of being able to show a trusted, provable compliance posture at the data layer.

The Data‑First Approach to SOC 2 Evidence

Instead of treating SOC 2 as a separate project, leading teams are aligning it with their data security posture management (DSPM) strategy:

- Start from the data, not from the infrastructure

  - Build a unified inventory of sensitive and regulated data across IaaS, PaaS, SaaS, and on‑prem.

  - Enrich each store with sensitivity, residency, and business context.

- Attach control posture to each data store

  - For each regulated data store, track encryption status, backup configuration, access model, and logging/monitoring coverage as posture attributes.

- Generate SOC‑aligned evidence from the same system

  - Use the regulated‑data inventory plus posture engine to produce SOC 2‑friendly reports and CSVs, rather than collecting evidence manually for each audit cycle.
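As a rough illustration of the last step, the Python sketch below attaches posture attributes to each regulated data store. It then emits a CSV with one row per store plus a column listing control gaps. The `DataStore` fields and the set of required controls are assumptions chosen for the example. An actual platform export will have its own schema.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields
from typing import Iterable, List

# Controls every regulated data store is expected to have (an assumption).
REQUIRED_CONTROLS = ("encrypted", "backup_enabled", "logging_enabled")


@dataclass
class DataStore:
    name: str
    platform: str
    data_classes: str       # e.g. "PII;PHI"
    encrypted: bool
    backup_enabled: bool
    logging_enabled: bool
    public_access: bool


def control_gaps(store: DataStore) -> List[str]:
    """List required controls that are missing, plus risky settings that are on."""
    gaps = [name for name in REQUIRED_CONTROLS if not getattr(store, name)]
    if store.public_access:
        gaps.append("public_access")
    return gaps


def evidence_csv(stores: Iterable[DataStore]) -> str:
    """Render one CSV row per store, with a 'gaps' column for auditors."""
    fieldnames = [f.name for f in fields(DataStore)] + ["gaps"]
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=fieldnames)
    writer.writeheader()
    for store in stores:
        row = asdict(store)
        row["gaps"] = ";".join(control_gaps(store)) or "none"
        writer.writerow(row)
    return buffer.getvalue()
```

The same inventory feeds both the security team’s remediation queue and the auditor‑facing export, so the two stay in sync between audit cycles.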

This is exactly the pattern that modern data security platforms like Sentra are implementing.

How Sentra Helps Security and GRC Teams Automate SOC 2 Evidence

Sentra sits across your data estate and focuses on regulated data, with capabilities that map directly onto SOC 2 evidence needs:

Comprehensive data‑store discovery and classification

Agentless discovery of data stores (managed and unmanaged) across multi‑cloud and on‑prem, combined with high‑accuracy classification for regulated and business‑critical data.

Data‑centric security posture

For each store, Sentra tracks security properties (encryption, backup, logging, and access configuration) and surfaces gaps where sensitive data is insufficiently protected.

Framework‑aligned reporting

SOC 2 and other frameworks can be represented as report templates that pull directly from Sentra’s inventory and posture attributes, giving GRC teams “audit‑ready” exports without rebuilding evidence from scratch.

The result is you can prove control over regulated data, for SOC 2 and beyond, with far less manual overhead.

Mapping SOC 2 Criteria to Data‑Level Evidence

Here’s how a data‑first posture shows up in SOC 2:

CC6.x (Logical and Physical Access Controls)

Evidence: Identity‑to‑data mapping showing which users/roles can access which sensitive datasets across cloud and SaaS.

CC7.x (System Operations: Detection and Monitoring)

Evidence: Data Detection & Response (DDR) signals and anomaly analytics around access to crown‑jewel data; logs that tie back to sensitive data stores.

CC9.x (Risk Mitigation)

Evidence: Risk‑prioritized view of data stores based on sensitivity and missing controls, plus remediation workflows or automatic labeling/tagging to tighten upstream policies.
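The risk‑mitigation evidence above depends on ranking data stores by sensitivity and missing controls. Here is a minimal Python sketch of one such ranking, using hypothetical weights for each data class and each missing control. It multiplies the highest sensitivity found in a store by the total weight of that store’s control gaps. Neither the weights nor the formula come from SOC 2 or from any vendor.

```python
from dataclasses import dataclass
from typing import List, Set

# Hypothetical sensitivity weight per data class (unlisted classes default to 1).
SENSITIVITY = {"PCI": 5, "PHI": 5, "FINANCIAL": 4, "PII": 3}

# Hypothetical weight per missing control (unlisted controls default to 1).
CONTROL_WEIGHT = {"encryption": 3, "least_privilege": 3, "logging": 2, "backup": 1}


@dataclass
class StorePosture:
    name: str
    data_classes: Set[str]
    missing_controls: Set[str]


def risk_score(store: StorePosture) -> int:
    """Highest sensitivity in the store multiplied by the weight of its gaps."""
    sensitivity = max((SENSITIVITY.get(c, 1) for c in store.data_classes), default=0)
    exposure = sum(CONTROL_WEIGHT.get(c, 1) for c in store.missing_controls)
    return sensitivity * exposure


def prioritize(stores: List[StorePosture]) -> List[StorePosture]:
    """Order stores so the riskiest combinations of data and gaps come first."""
    return sorted(stores, key=risk_score, reverse=True)
```

Because the score multiplies rather than adds, a store with no sensitive data scores zero however many controls it lacks. That keeps the list focused on regulated data, but whether it is the right trade‑off depends on your SOC 2 scope.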

When this data‑level view is in place, SOC 2 becomes evidence selection rather than evidence construction.

A Repeatable SOC 2 Playbook for Security, GRC, and Privacy

To operationalize this approach, many teams follow a recurring pattern:

- Define a “regulated data perimeter” for SOC 2: Identify which clouds, SaaS platforms, and on‑prem stores contain in‑scope data (PII, PHI, PCI, financial records).

- Instrument with DSPM: Deploy a data security platform like Sentra to discover, classify, and map access to that data perimeter.

- Connect GRC to the same source of truth: Have GRC and privacy teams pull their SOC 2 evidence from the same inventory and posture views Security uses for day‑to‑day risk management.

- Continuously refine controls: Use posture and DDR insights to reduce exposure, close misconfigurations, and improve your next SOC 2 cycle before it starts.

The more you lean on a shared, data‑centric foundation, the easier it becomes to maintain a trusted, provable compliance posture across frameworks, not just SOC 2.

Turning SOC 2 From a Project Into a Capability

Ultimately, the goal is to stop treating SOC 2 as a once-a-year project and start treating it as an ongoing capability embedded into how your organization operates. Security, GRC, and privacy teams should work from a single, unified view of regulated data and controls. Evidence should always be a few clicks away, not the result of a month-long scramble. And every audit should strengthen your data security posture, not distract from it. If you’re still managing compliance in spreadsheets, it’s worth asking what it would take to make your SOC 2 posture something you can prove on demand.

Ready to end the fire drills and move to continuous compliance? Book a Demo

DSPM Dirty Little Secrets: What Vendors Don’t Want You to Test

Discover What DSPM Vendors Try to Hide

Your goal in running a data security/DSPM POV is to evaluate all important performance and cost parameters so you can make the best decision and avoid unpleasant surprises. Vendors, on the other hand, are looking for a ‘quick win’ and will often suggest shortcuts like using a limited test data set and copying your data to their environment.

On the surface, this might sound like a reasonable approach. But if you don’t test real data types and volumes in your own environment, the POV process may hide costly failures or compliance violations that will quickly become apparent in production. A recent evaluation of Sentra versus another leading emerging DSPM exposed how the other solution’s performance dropped and costs skyrocketed when deployed at petabyte scale. Worse, the other DSPM removed data from the customer environment, a clear controls violation.

If you want to run a successful POV and avoid DSPM buyer’s remorse, look out for these "dirty little secrets".

Dirty Little Secret #1:

‘Start small’ can mean ‘fails at scale’

The biggest 'dirty secret' is that scalability limits are hidden behind the 'start small' suggestion. Many DSPM platforms cannot scale to modern petabyte-sized data environments. Vendors try to conceal this architectural weakness by encouraging small, tightly scoped POVs that never stress the system and that create false confidence. Upon broad deployment, the weakness is quickly exposed: scans slow and refresh cycles stretch, forcing teams to drastically reduce scope or frequency. This failure is fundamentally architectural, because these platforms lack parallel orchestration and elastic execution. That shows the 'start small' advice was a deliberate tactic to avoid exposing the platform’s inevitable bottleneck.

In a recent POV, Sentra successfully scanned 10x more data than the alternative in approximately the same time.

Dirty Little Secret #2:

High cloud cost breaks continuous security