Dean Taler

Dean is a Software Engineer at Sentra, specializing in backend development and big data technologies. With experience in building scalable microservices and data pipelines using Python, Kafka, and Kubernetes, he focuses on creating robust, maintainable systems that support innovation at scale.

Dean's Data Security Posts

Archive Scanning for Cloud Data Security: Stop Ignoring Compressed Files

If you care about cloud data security, you cannot afford to treat compressed files as opaque blobs. Archive scanning for cloud data security is no longer a nice‑to‑have — it’s a prerequisite for any credible data security posture.

Every environment I’ve seen at scale looks the same: thousands of ZIP files in S3 buckets, TAR.GZ backups in Azure Blob, JARs and DEBs in artifact repositories, and old GZIP‑compressed database dumps nobody remembers creating. These archives are the digital equivalent of sealed boxes in a warehouse. Most tools walk right past them.

Attackers don’t.

Archives: Where Sensitive Data Goes to Disappear

Think about how your teams actually use compressed files:

- An engineer zips up a project directory — complete with .env files and API keys — and uploads it to shared storage.

- A DBA compresses a production database backup holding millions of customer records and drops it into an internal bucket.

- A departing employee packs a folder of financial reports into a RAR file and moves it to a personal account.

None of this is hypothetical. It happens every day, and it creates a perfect hiding place for:

- Bulk data exfiltration – a single ZIP can contain thousands of PII‑rich documents, financial reports, or IP.

- Nested archives – ZIP‑inside‑ZIP‑inside‑TAR.GZ is normal in automated build and backup pipelines. One‑layer scanners never see what’s inside.

- Password‑protected archives – if your tool silently skips encrypted ZIPs, you’re ignoring what could be the highest‑risk file in your environment.

- Software artifacts with secrets – JARs and DEBs often carry config files with embedded credentials and tokens.

- Old backups – that three‑year‑old compressed backup may contain an unmasked database nobody has reviewed since it was created.

If your data security platform cannot see inside compressed files, you don’t actually have end‑to‑end data visibility. Full stop.

Why Archive Scanning for Cloud Data Security Is Hard

The problem isn’t just volume — it’s structure and diversity.

Real cloud environments contain:

- ZIP / JAR / CSZ

- RAR (including multi‑part R00/R01 sets)

- 7Z

- TAR and TAR.GZ / TAR.BZ2 / TAR.XZ

- Standalone compression formats like GZIP, BZ2, XZ/LZMA, LZ4, ZLIB

- Package formats like DEB that are themselves layered archives

Most legacy tools treat all of this as “a file with an unknown blob of bytes.” At best, they record that the archive exists. They don’t recursively extract layers, don’t traverse internal structures, and don’t feed the inner files back into the same classification engine they use for documents or databases.

That gap becomes larger every quarter, as more data gets compressed to save money and speed up transfer.

How Sentra Does Archive Scanning All the Way Down

In Sentra, we treat archives and compressed files as first‑class citizens in the parsing and classification pipeline.

Full Archive and Compression Format Coverage

Our archive scanning engine supports the full range of formats we see in real‑world cloud workloads:

- ZIP (including JAR and CSZ)

- RAR (including multi‑part sets)

- 7Z

- TAR

- GZ / GZIP

- BZ2

- XZ / LZMA

- LZ4

- ZLIB / ZZ

- DEB and other layered package formats

Each reader is implemented as a composite reader. When Sentra encounters an archive, we don’t just log its presence. We:

- Open the archive.

- Iterate every entry.

- Hand each inner file back into the global parsing pipeline.

- If the inner file is itself an archive, we repeat the process until there are no more layers.

A TAR.GZ containing a ZIP containing a CSV with customer records is not an edge case. It’s Tuesday. Sentra will find the CSV and classify the records correctly.
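To make the "all the way down" loop concrete, here is a minimal sketch of recursive, in-memory traversal using only Python's standard library. It is an illustration under stated assumptions, not Sentra's implementation: `walk_archive`, `_open_tar`, and `MAX_DEPTH` are hypothetical names, and it covers only GZIP, TAR, and ZIP.

```python
import gzip
import io
import tarfile
import zipfile
from typing import Iterator, Optional, Tuple

MAX_DEPTH = 10  # hypothetical guard against deeply nested or malicious archives


def _open_tar(data: bytes) -> Optional[tarfile.TarFile]:
    """Return an open TarFile for uncompressed tar bytes, or None if not a tar."""
    try:
        return tarfile.open(fileobj=io.BytesIO(data), mode="r:")
    except tarfile.ReadError:
        return None


def walk_archive(name: str, data: bytes, depth: int = 0) -> Iterator[Tuple[str, bytes]]:
    """Yield (path, content) for every leaf file, recursing through archive layers."""
    if depth >= MAX_DEPTH:
        yield name, data  # stop unpacking; surface the blob as-is
        return
    if data[:2] == b"\x1f\x8b":  # gzip magic bytes
        yield from walk_archive(f"{name}!gunzip", gzip.decompress(data), depth + 1)
        return
    tar = _open_tar(data)
    if tar is not None:
        with tar:
            for member in tar.getmembers():
                if member.isfile():
                    inner = tar.extractfile(member).read()
                    yield from walk_archive(f"{name}!{member.name}", inner, depth + 1)
        return
    if zipfile.is_zipfile(io.BytesIO(data)):
        with zipfile.ZipFile(io.BytesIO(data)) as zf:
            for info in zf.infolist():
                if not info.is_dir():
                    yield from walk_archive(f"{name}!{info.filename}", zf.read(info), depth + 1)
        return
    yield name, data  # not an archive: hand to the classification step
```

Every leaf the generator yields would go to the same classifier used for ordinary files. A production version would also need size limits to defuse decompression bombs, plus handling for encrypted entries, which `zf.read` cannot open without a password.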

Encryption Detection Without Decryption

Password‑protected archives are dangerous precisely because they’re opaque.

When Sentra hits an encrypted ZIP or RAR, we don’t shrug and move on. We detect encryption by inspecting archive metadata and entry‑level flags, then surface:

- That the archive is encrypted

- Where it lives

- How large it is

We don’t attempt to brute‑force passwords or exfiltrate content. But we do make encrypted archives visible so they can be governed: flagged as high‑risk, pulled into investigations, or subject to separate key‑management policies.
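For ZIP files, the entry-level flag in question is bit 0 of each entry's general-purpose bit flag, which Python's `zipfile` exposes as `ZipInfo.flag_bits`. The sketch below reads only the archive's metadata and never touches entry contents. The `zip_encryption_report` function and its report fields are hypothetical, chosen to mirror the three facts listed above; this is not Sentra's code.

```python
import io
import zipfile

ENCRYPTED_FLAG = 0x1  # bit 0 of the ZIP general-purpose bit flag


def zip_encryption_report(data: bytes, location: str = "unknown") -> dict:
    """Report whether any entry is flagged as encrypted, without decrypting anything."""
    with zipfile.ZipFile(io.BytesIO(data)) as zf:
        entries = zf.infolist()  # parsed from the central directory only
        encrypted = [e.filename for e in entries if e.flag_bits & ENCRYPTED_FLAG]
    return {
        "encrypted": bool(encrypted),
        "encrypted_entries": encrypted,
        "location": location,      # where the archive lives
        "size_bytes": len(data),   # how large it is
        "entry_count": len(entries),
    }
```

Other formats signal encryption differently (RAR and 7Z use their own header fields), so each reader would need its own check.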

Intelligent File Prioritization Inside Archives

Not every file inside an archive has the same risk profile. A tarball full of binaries and images is very different from one full of CSVs and PDFs.

Sentra implements file‑type–aware prioritization inside archives. We scan high‑value targets first — formats associated with PII, PCI, PHI, or sensitive business data — before we get to low‑risk assets.

This matters when you’re scanning multi‑gigabyte archives under time or budget constraints. You want the most important findings first, not after you’ve chewed through 40,000 icons and object files.
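One simple way to picture this kind of prioritization is an extension-to-priority lookup applied before any entry is read. The table and the `scan_order` helper below are illustrative assumptions, not Sentra's actual ranking.

```python
from pathlib import PurePosixPath
from typing import Iterable, List

# Hypothetical ranking: lower number = scanned earlier.
PRIORITY = {
    ".csv": 0, ".sql": 0, ".xlsx": 0,    # likely bulk records
    ".pdf": 1, ".docx": 1, ".json": 1,   # documents and config
    ".png": 9, ".ico": 9, ".o": 9,       # low-risk binaries and images
}
DEFAULT_PRIORITY = 5  # unknown types land in the middle


def scan_order(entry_names: Iterable[str]) -> List[str]:
    """Sort archive entries so high-value file types are scanned first."""
    def rank(name: str) -> int:
        return PRIORITY.get(PurePosixPath(name).suffix.lower(), DEFAULT_PRIORITY)
    return sorted(entry_names, key=rank)  # stable: ties keep archive order
```

Because the sort is stable, entries with the same priority keep their original archive order, which keeps results reproducible between scans.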

In‑Memory Processing for Security and Speed

All archive processing in Sentra happens in memory. We don’t unpack archives to temporary disk locations or leave extracted debris lying around in scratch directories.

That gives you two benefits:

- Performance – we avoid disk I/O overhead when dealing with massive archives.

- Security – we don’t create yet another copy of the sensitive data you’re trying to control.

For a data security platform, that design choice is non‑negotiable.

Compliance: Auditors Don’t Accept “We Skipped the Zips”

Regulations like GDPR, CCPA, HIPAA, and PCI DSS don’t carve out exceptions for compressed files. If personal health information is sitting in a GZIP’d database dump in S3, or cardholder data is archived in a ZIP on a shared drive, you are still accountable.

Auditors won’t accept “we scanned everything except the compressed files” as a defensible position.

Sentra’s archive scanning closes this gap. Across major cloud providers and archive formats, we give you end‑to‑end visibility into compressed and archived data — recursively, intelligently, and without blind spots.

Because the most dangerous data exposure in your cloud is often the one hiding a single ZIP file deep.

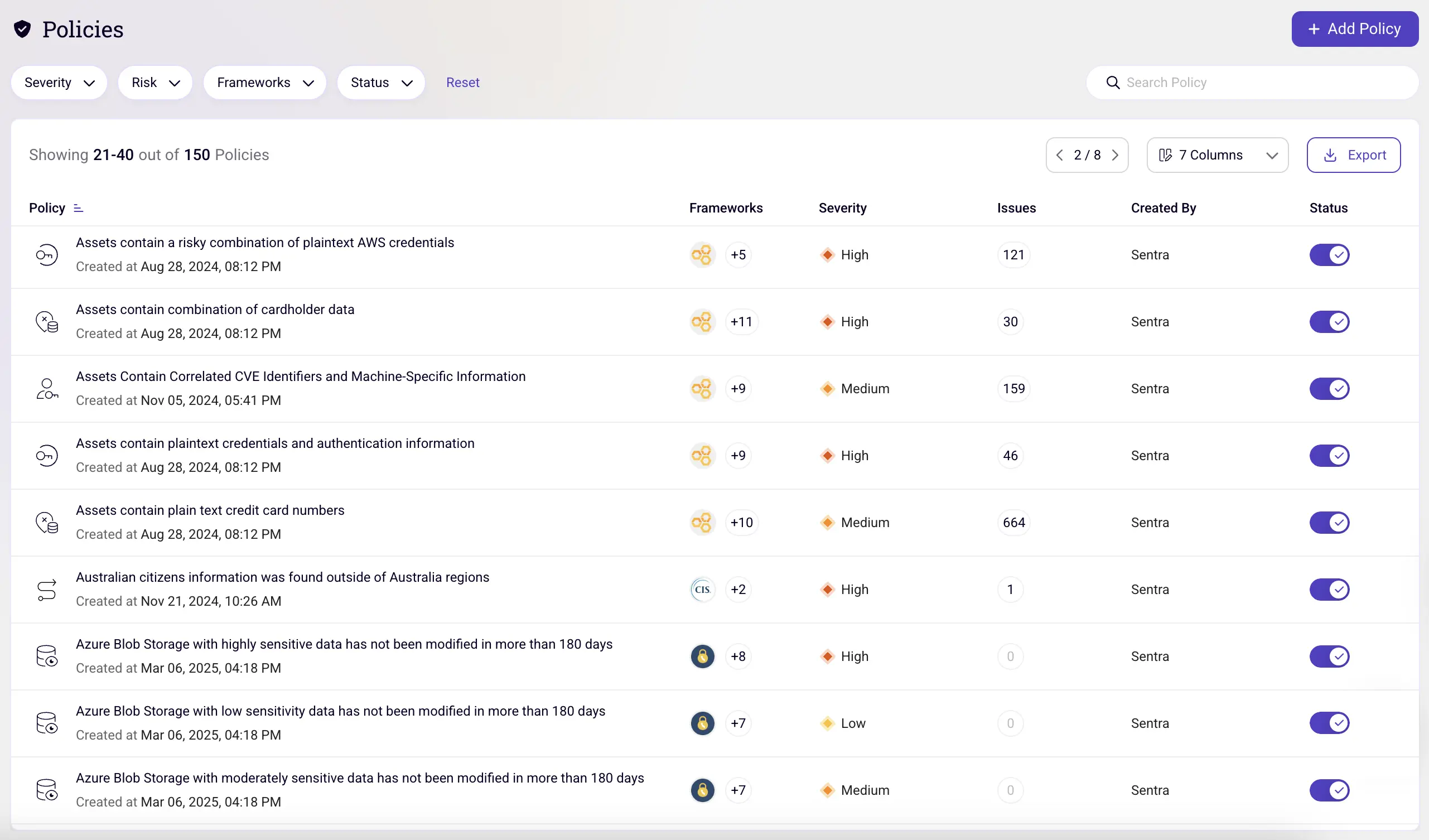

Building Automated Data Security Policies for 2026: What Security Teams Need Now

Learn how to build automated data security policies that reduce data exposure, meet GDPR, PCI DSS, and HIPAA requirements, and scale data governance across cloud, SaaS, and AI-driven environments as organizations move into 2026.

As 2025 comes to a close, one reality is clear: automated data security and governance programs are a must-have to truly leverage data and AI. Sensitive data now moves faster than human review can keep up with. It flows across multi-cloud storage, SaaS platforms, collaboration tools, logging pipelines, backups, and increasingly, AI and analytics workflows that continuously replicate data into new locations. For security and compliance teams heading into 2026, periodic audits and static policies are no longer sufficient. Regulators, customers, and boards now expect continuous visibility and enforcement.

This is why automated data security policies have become a foundational control, not a “nice to have.”

In this blog, we focus on how data security policies are actually used at the end of 2025, and how to design them so they remain effective in 2026.

You’ll learn:

- The most important compliance and risk-driven policy use cases

- How organizations operationalize data security policies at scale

- Practical examples aligned with GDPR, PCI DSS, HIPAA, and internal governance

Why Automated Data Security Policies Matter Heading into 2026

The direction of regulatory enforcement and threat activity is consistent:

- Continuous compliance is now expected, not implied

- Overexposed data is increasingly used for extortion, not just theft

- Organizations must prove they know where sensitive data lives and who can access it

Recent enforcement actions have shown that organizations can face penalties even without a breach, simply for storing regulated data in unapproved locations or failing to enforce access controls consistently.

Automated data security policies address this gap by continuously evaluating the following factors and surfacing violations in near real time:

- Data sensitivity

- Access scope

- Storage location and residency

Three Data Security Policy Use Cases That Deliver Immediate Value

As organizations prepare for 2026, most start with policies that reduce data exposure quickly.

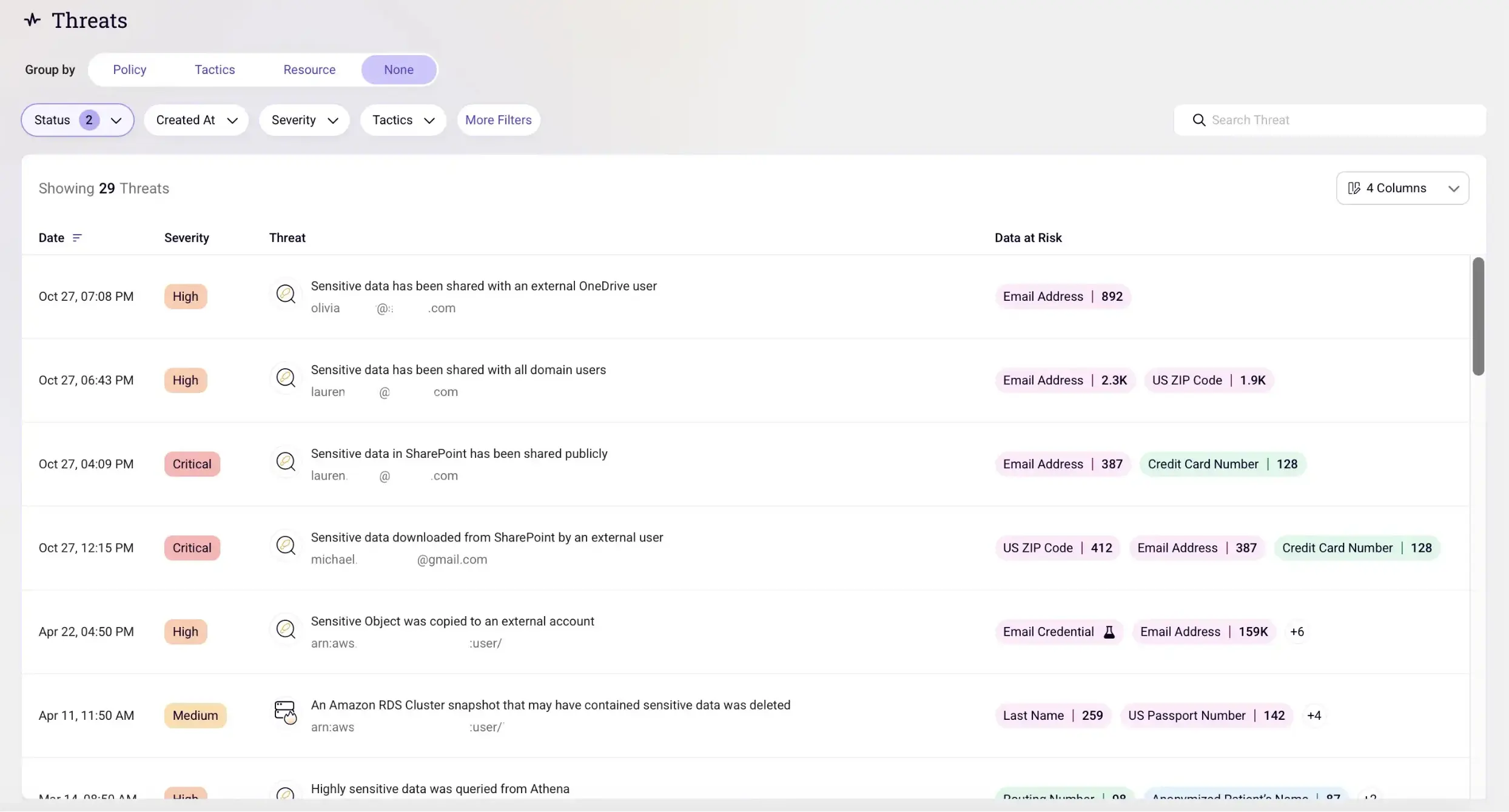

1. Limiting Data Exposure and Ransomware Impact

Misconfigured access and excessive sharing remain the most common causes of data exposure. In cloud and SaaS environments, these issues often emerge gradually and go unnoticed without automation.

High-impact policies include:

- Sensitive data shared with external users: Detect files containing credentials, PII, or financial data that are accessible to outside collaborators.

- Overly broad internal access to sensitive data: Identify data shared with “Anyone in the organization,” significantly increasing exposure during account compromise.

These policies reduce blast radius and help prevent data from becoming leverage in extortion-based attacks.

2. Enforcing Secure Data Storage and Handling (PCI DSS, HIPAA, SOC 2)

Compliance violations in 2025 rarely result from intentional misuse. They happen because sensitive data quietly appears in the wrong systems.

Common policy findings include:

- Payment card data in application logs or monitoring tools: A persistent PCI DSS issue, especially in modern microservice environments.

- Employee or patient records stored in collaboration platforms: PII and PHI often end up in user-managed drives without appropriate safeguards.

Automated policies continuously detect these conditions and support fast remediation, reducing audit findings and operational risk.
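As a rough illustration of the first finding, a detector for card numbers in log lines can pair a digit-run pattern with the Luhn checksum to cut false positives from order IDs and timestamps. This is a minimal sketch with hypothetical names (`find_card_numbers`, `luhn_valid`); real classifiers add issuer-prefix checks and surrounding context.

```python
import re
from typing import List

# 13 to 19 digits, optionally separated by single spaces or hyphens.
CANDIDATE = re.compile(r"\b(?:\d[ -]?){12,18}\d\b")


def luhn_valid(digits: str) -> bool:
    """Return True if the digit string passes the Luhn checksum."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:  # double every second digit from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def find_card_numbers(line: str) -> List[str]:
    """Return Luhn-valid card-like numbers found in a single log line."""
    hits = []
    for match in CANDIDATE.finditer(line):
        digits = re.sub(r"[ -]", "", match.group())
        if luhn_valid(digits):
            hits.append(digits)
    return hits
```

A policy built on a check like this would fire when any monitored log store produces a non-empty result.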

3. Maintaining Data Residency and Sovereignty Compliance

As global data protection enforcement intensifies, data residency violations remain one of the most common and costly compliance failures.

Automated policies help identify:

- EU personal data stored outside approved EU regions: A direct GDPR violation that is common in multi-cloud and SaaS environments.

- Cross-region replicas and backups containing regulated data: Secondary storage locations frequently fall outside compliance controls.

These policies enable organizations to demonstrate ongoing compliance, not just point-in-time alignment.
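A residency policy of this kind reduces to a comparison between what a store contains and where it sits. The sketch below assumes a hypothetical inventory of `DataStore` records, an `EU_PII` classification label, and an example allow-list of regions; none of these names come from a specific product.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable, List

# Hypothetical allow-list; a real one comes from legal and compliance teams.
APPROVED_EU_REGIONS = {"eu-west-1", "eu-central-1", "europe-west4"}


@dataclass(frozen=True)
class DataStore:
    name: str
    region: str
    data_classes: FrozenSet[str]
    is_replica: bool = False  # replicas and backups are checked like primaries


def residency_violations(stores: Iterable[DataStore]) -> List[DataStore]:
    """Return stores holding EU personal data outside the approved regions."""
    return [
        s for s in stores
        if "EU_PII" in s.data_classes and s.region not in APPROVED_EU_REGIONS
    ]
```

Running the check on every inventory change, rather than on a schedule, is what turns it from a point-in-time audit into continuous enforcement.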

What Modern Data Security Policies Must Do (2026-Ready)

As teams move into 2026, effective data security policies share three traits:

- They are data-aware: Policies are based on data sensitivity - not just resource labels or storage locations.

- They operate continuously: Policies evaluate changes as data is created, moved, shared, or copied into new systems.

- They drive action: Every violation maps to a remediation path: restrict access, move data, or delete it.

This is what allows security teams to scale governance without slowing the business.

Conclusion: From Static Rules to Continuous Data Governance

Heading into 2026, automated data security policies are no longer just compliance tooling; they are a core layer of modern security architecture.

They allow organizations to:

- Reduce exposure and ransomware risk

- Enforce regulatory requirements continuously

- Govern sensitive data across cloud, SaaS, and AI workflows

Most importantly, they replace reactive audits with real-time data governance.

Organizations that invest in automated, data-aware security policies today will enter 2026 better prepared for regulatory scrutiny, evolving threats, and the continued growth of their data footprint.

Real-Time Data Threat Detection: How Organizations Protect Sensitive Data

Real-time data threat detection is the continuous monitoring of data access, movement, and behavior to identify and stop security threats as they occur. In 2026, this capability is essential as sensitive data flows across hybrid cloud environments, AI pipelines, and complex multi-platform architectures.

As organizations adopt AI technologies at scale, real-time data threat detection has evolved from a reactive security measure into a proactive, intelligence-driven discipline. Modern systems continuously monitor data movement and access patterns to identify emerging vulnerabilities before sensitive information is compromised, helping organizations maintain security posture, ensure compliance, and safeguard business continuity.

These systems leverage artificial intelligence, behavioral analytics, and continuous monitoring to establish baselines of normal behavior across vast data estates. Rather than relying solely on known attack signatures, they detect subtle anomalies that signal emerging risks, including unauthorized data exfiltration and shadow AI usage.

How Real-Time Data Threat Detection Software Works

Real-time data threat detection software operates by continuously analyzing activity across cloud platforms, endpoints, networks, and data repositories to identify high-risk behavior as it happens. Rather than relying on static rules alone, these systems correlate signals from multiple sources to build a unified view of data activity across the environment.

A key capability of modern detection platforms is behavioral modeling at scale. By establishing baselines for users, applications, and systems, the software can identify deviations such as unexpected access patterns, irregular data transfers, or activity from unusual locations. These anomalies are evaluated in real time using artificial intelligence, machine learning, and predefined policies to determine potential security risk.
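The simplest form of a behavioral baseline is a per-user history of some metric, with new observations compared against its mean and spread. The z-score sketch below illustrates the idea only; production systems use richer models. The `is_anomalous` function and its threshold of 3.0 are assumptions for the example.

```python
from statistics import mean, stdev
from typing import Sequence


def is_anomalous(history: Sequence[float], observed: float, threshold: float = 3.0) -> bool:
    """Flag an observation that deviates strongly from a user's own baseline."""
    if len(history) < 2:
        return False  # not enough data to form a baseline
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return observed != mu  # perfectly flat baseline: any change stands out
    return abs(observed - mu) / sigma > threshold
```

For example, with a history of daily downloads in megabytes such as `[100, 110, 95, 105, 98]`, an observation of `900` is flagged while `104` is not.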

What differentiates modern real-time data threat detection software is its ability to operate at petabyte scale without requiring sensitive data to be moved or duplicated. In-place scanning preserves performance and privacy while enabling comprehensive visibility. Automated response mechanisms allow security teams to contain threats quickly, reducing the likelihood of data exposure, downtime, and regulatory impact.

AI-Driven Threat Detection Systems

AI-driven threat detection systems enhance real-time data security by identifying complex, multi-stage attack patterns that traditional rule-based approaches cannot detect. Rather than evaluating isolated events, these systems analyze relationships across user behavior, data access, system activity, and contextual signals to surface high-risk scenarios in real time.

By applying machine learning, deep learning, and natural language processing, AI-driven systems can detect subtle deviations that emerge across multiple data points, even when individual signals appear benign. This allows organizations to uncover sophisticated threats such as insider misuse, advanced persistent threats, lateral movement, and novel exploit techniques earlier in the attack lifecycle.

Once a potential threat is identified, automated prioritization and response mechanisms accelerate remediation. Actions such as isolating affected resources, restricting access, or alerting security teams can be triggered immediately, significantly reducing detection-to-response time compared to traditional security models. Over time, AI-driven systems continuously refine their detection models using new behavioral data and outcomes. This adaptive learning reduces false positives, improves accuracy, and enables a scalable security posture capable of responding to evolving threats in dynamic cloud and AI-driven environments.

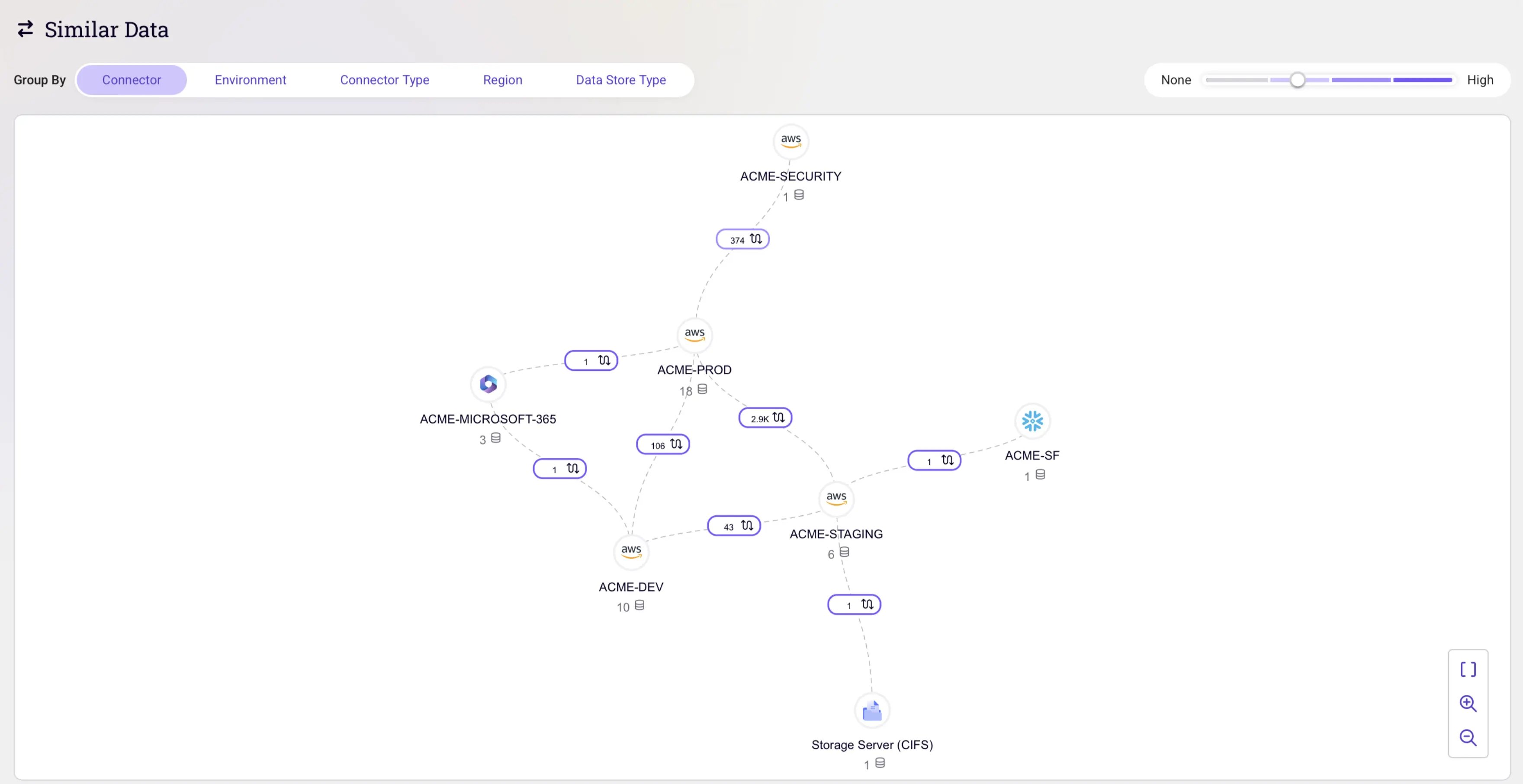

Tracking Data Movement and Data Lineage

Beyond identifying where sensitive data resides at a single point in time, modern data security platforms track data movement across its entire lifecycle. This visibility is critical for detecting when sensitive data flows between regions, across environments (such as from production to development), or into AI pipelines where it may be exposed to unauthorized processing.

By maintaining continuous data lineage and audit trails, these platforms monitor activity across cloud data stores, including ETL processes, database migrations, backups, and data transformations. Rather than relying on static snapshots, lineage tracking reveals dynamic data flows, showing how sensitive information is accessed, transformed, and relocated across the enterprise in real time.

In the AI era, tracking data movement is especially important as data is frequently duplicated and reused to train or power machine learning models. These capabilities allow organizations to detect when authorized data is connected to unauthorized large language models or external AI tools, commonly referred to as shadow AI, one of the fastest-growing risks to data security in 2026.
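Lineage can be modeled as a directed graph of copy and transform events, which turns "where did this data end up?" into a reachability query. The breadth-first sketch below uses hypothetical dataset names and a hypothetical `downstream` helper; it shows the general technique, not any particular platform's lineage engine.

```python
from collections import deque
from typing import Dict, Iterable, List, Set, Tuple


def downstream(edges: Iterable[Tuple[str, str]], source: str) -> Set[str]:
    """Return every location reachable from `source` via lineage edges."""
    graph: Dict[str, List[str]] = {}
    for src, dst in edges:
        graph.setdefault(src, []).append(dst)
    seen: Set[str] = set()
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for nxt in graph.get(node, []):
            if nxt not in seen:  # also protects against cycles
                seen.add(nxt)
                queue.append(nxt)
    return seen


# Hypothetical lineage: (from, to) pairs recorded by ETL jobs and backups.
EDGES = [
    ("prod.customers", "etl.staging"),
    ("etl.staging", "dev.customers_copy"),
    ("etl.staging", "llm.finetune_set"),
    ("prod.orders", "bi.dashboard"),
]
APPROVED = {"etl.staging", "bi.dashboard"}
unapproved = downstream(EDGES, "prod.customers") - APPROVED
```

Here `unapproved` contains the development copy and the fine-tuning set, which are exactly the production-to-development and AI-pipeline flows described above.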

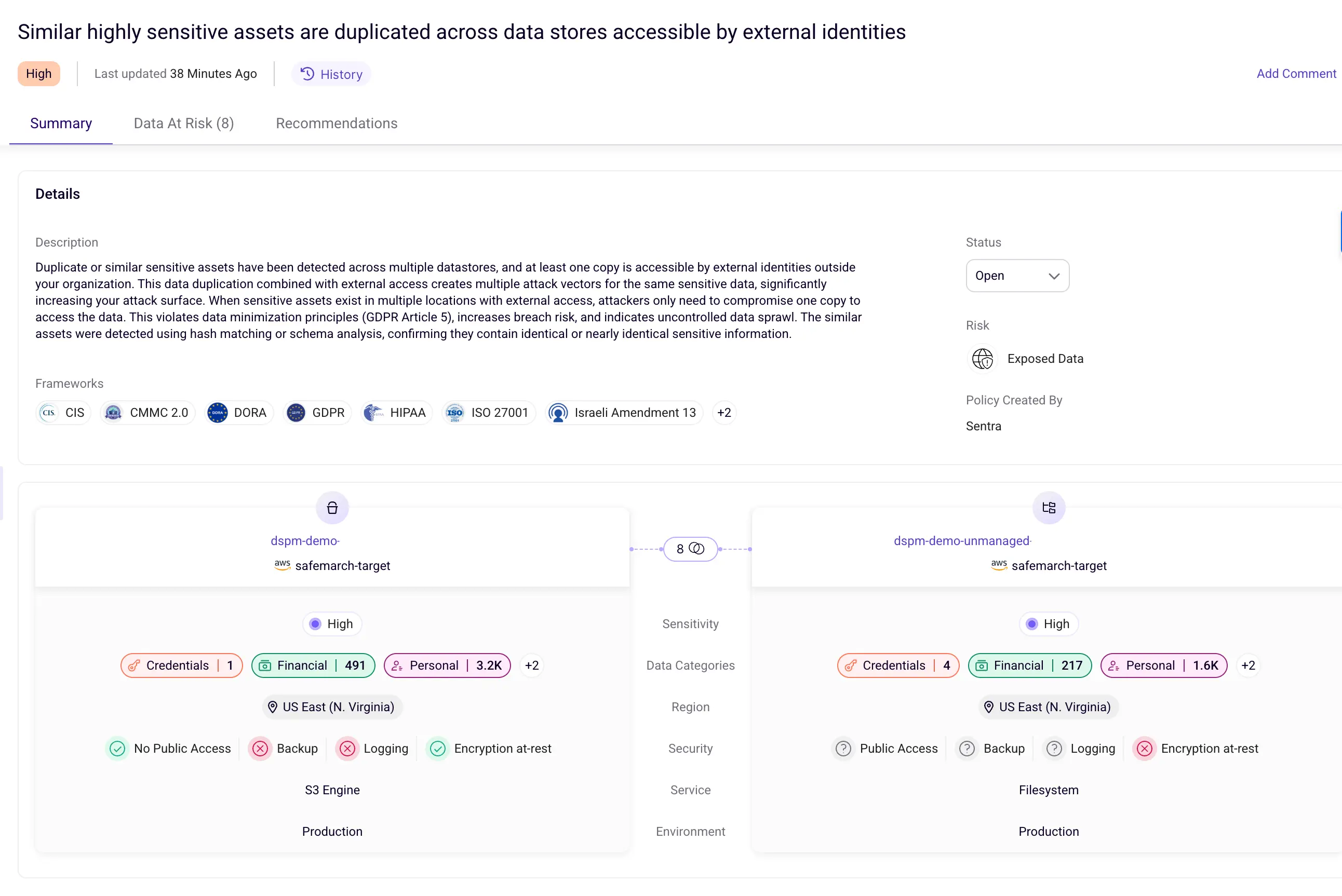

Identifying Toxic Combinations and Over-Permissioned Access

Toxic combinations occur when highly sensitive data is protected by overly broad or misconfigured access controls, creating elevated risk. These scenarios are especially dangerous because they place critical data behind permissive access, effectively increasing the potential blast radius of a security incident.

Advanced data security platforms identify toxic combinations by correlating data sensitivity with access permissions in real time. The process begins with automated data classification, using AI-powered techniques to identify sensitive information such as personally identifiable information (PII), financial data, intellectual property, and regulated datasets.

Once data is classified, access structures are analyzed to uncover over-permissioned configurations. This includes detecting global access groups (such as “Everyone” or “Authenticated Users”), excessive sharing permissions, and privilege creep where users accumulate access beyond what their role requires.

When sensitive data is found in environments with permissive access controls, these intersections are flagged as toxic risks. Risk scoring typically accounts for factors such as data sensitivity, scope of access, user behavior patterns, and missing safeguards like multi-factor authentication, enabling security teams to prioritize remediation effectively.
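The correlation step can be sketched as a small scoring function that only fires when high sensitivity and broad access coincide. The sensitivity levels, weights, and the `Asset` shape below are illustrative assumptions; real scoring also weighs user behavior and other safeguards.

```python
from dataclasses import dataclass
from typing import Set

SENSITIVITY = {"public": 0, "internal": 1, "confidential": 2, "restricted": 3}
BROAD_GROUPS = {"Everyone", "Authenticated Users"}  # global access groups


@dataclass
class Asset:
    name: str
    sensitivity: str
    principals: Set[str]
    mfa_required: bool


def toxic_score(asset: Asset) -> int:
    """Score > 0 only when sensitive data meets overly broad access."""
    level = SENSITIVITY[asset.sensitivity]
    broadly_shared = bool(asset.principals & BROAD_GROUPS)
    if level < 2 or not broadly_shared:
        return 0  # either side alone is not a toxic combination
    score = level * 10
    if not asset.mfa_required:
        score += 5  # missing safeguard raises priority
    return score
```

Sorting assets by this score in descending order gives a remediation queue with the riskiest intersections first.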

Detecting Shadow AI and Unauthorized Data Connections

Shadow AI refers to the use of unauthorized or unsanctioned AI tools and large language models that are connected to sensitive organizational data without security or IT oversight. As AI adoption accelerates in 2026, detecting these hidden data connections has become a critical component of modern data threat detection. Detection of shadow AI begins with continuous discovery and inventory of AI usage across the organization, including both approved and unapproved tools.

Advanced platforms employ multiple detection techniques to identify unauthorized AI activity, such as:

- Scanning unstructured data repositories to identify model files or binaries associated with unsanctioned AI deployments

- Analyzing email and identity signals to detect registrations and usage notifications from external AI services

- Inspecting code repositories for embedded API keys or calls to external AI platforms

- Monitoring cloud-native AI services and third-party model hosting platforms for unauthorized data connections
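The code repository technique from the list above can be approximated with two line-level patterns: one for well-known external AI API hostnames and one for hardcoded credential assignments. The patterns and the `scan_source` helper below are simplified assumptions for illustration; dedicated secret scanners use far larger rule sets and entropy checks.

```python
import re
from typing import List, Tuple

# A few public AI API hostnames; a real inventory would be much longer.
AI_ENDPOINTS = re.compile(
    r"api\.openai\.com|api\.anthropic\.com|generativelanguage\.googleapis\.com"
)
# Hypothetical heuristic: key-like name assigned a long quoted literal.
KEY_ASSIGNMENT = re.compile(
    r"""(?i)(?:api[_-]?key|secret|token)\w*\s*[:=]\s*["'][A-Za-z0-9_\-]{20,}["']"""
)


def scan_source(path: str, text: str) -> List[Tuple[str, int, str]]:
    """Return (path, line number, finding type) for each suspicious line."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        if AI_ENDPOINTS.search(line):
            findings.append((path, lineno, "external_ai_endpoint"))
        if KEY_ASSIGNMENT.search(line):
            findings.append((path, lineno, "hardcoded_credential"))
    return findings
```

Findings like these would then be compared against the approved-tool inventory to separate sanctioned integrations from shadow AI.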

To provide comprehensive coverage, leading systems combine AI Security Posture Management (AISPM) with AI runtime protection. AISPM maps which sensitive data is being accessed, by whom, and under what conditions, while runtime protection continuously monitors AI interactions, such as prompts, responses, and agent behavior, to detect misuse or anomalous activity in real time.

When risky behavior is detected, including attempts to connect sensitive data to unauthorized AI models, automated alerts are generated for investigation. In high-risk scenarios, remediation actions such as revoking access tokens, blocking network connections, or disabling data integrations can be triggered immediately to prevent further exposure.

Real-Time Threat Monitoring and Response

Real-time threat monitoring and response form the operational core of modern data security, enabling organizations to detect suspicious activity and take action immediately as threats emerge. Rather than relying on periodic reviews or delayed investigations, these capabilities allow security teams to respond while incidents are still unfolding. Continuous monitoring aggregates signals from across the environment, including network activity, system logs, cloud configurations, and user behavior. This unified visibility allows systems to maintain up-to-date behavioral baselines and identify deviations such as unusual access attempts, unexpected data transfers, or activity occurring outside normal usage patterns.

Advanced analytics powered by AI and machine learning evaluate these signals in real time to distinguish benign anomalies from genuine threats. This approach is particularly effective at identifying complex attack scenarios, including insider misuse, zero-day exploits, and multi-stage campaigns that evolve gradually and evade traditional point-in-time detection.

When high-risk activity is detected, automated alerting and response mechanisms accelerate containment. Actions such as isolating affected resources, blocking malicious traffic, or revoking compromised credentials can be initiated within seconds, significantly reducing the window of exposure and limiting potential impact compared to manual response processes.

Sentra’s Approach to Real-Time Data Threat Detection

Sentra applies real-time data threat detection through a cloud-native platform designed to deliver continuous visibility and control without moving sensitive data outside the customer’s environment. By performing discovery, classification, and analysis in place across hybrid, private, and cloud environments, Sentra enables organizations to monitor data risk while preserving performance and privacy.

At the core of this approach is DataTreks™, which provides a contextual map of the entire data estate. DataTreks tracks where sensitive data resides and how it moves across ETL processes, database migrations, backups, and AI pipelines. This lineage-driven visibility allows organizations to identify risky data flows across regions, environments, and unauthorized destinations.

Sentra identifies toxic combinations by correlating data sensitivity with access controls in real time. The platform’s AI-powered classification engine accurately identifies sensitive information and maps these findings against permission structures to pinpoint scenarios where high-value data is exposed through overly broad or misconfigured access controls.

For shadow AI detection, Sentra continuously monitors data flows across the enterprise, including data sources accessed by AI tools and services. The system routinely audits AI interactions and compares them against a curated inventory of approved tools and integrations. When unauthorized connections are detected, such as sensitive data being fed into unapproved large language models (LLMs), automated alerts are generated with granular contextual details, enabling rapid investigation and remediation.

User Reviews (January 2026):

What Users Like:

- Data discovery capabilities and comprehensive reporting

- Fast, context-aware data security with reduced manual effort

- Ability to identify sensitive data and prioritize risks efficiently

- Significant improvements in security posture and compliance

Key Benefits:

- Unified visibility across IaaS, PaaS, SaaS, and on-premise file shares

- Approximately 20% reduction in cloud storage costs by eliminating shadow and ROT data

Conclusion: Real-Time Data Threat Detection in 2026

Real-time data threat detection has become an essential capability for organizations navigating the complex security challenges of the AI era. By combining continuous monitoring, AI-powered analytics, comprehensive data lineage tracking, and automated response capabilities, modern platforms enable enterprises to detect and neutralize threats before they result in data breaches or compliance violations.

As sensitive data continues to proliferate across hybrid environments and AI adoption accelerates, the ability to maintain real-time visibility and control over data security posture will increasingly differentiate organizations that thrive from those that struggle with persistent security incidents and regulatory challenges.

How to Write an Effective Data Security Policy

Introduction: Why Writing Good Policies Matters

In modern cloud and AI-driven environments, having security policies in place is no longer enough. The quality of those policies directly shapes your ability to prevent data exposure, reduce noise, and drive meaningful response. A well-written policy helps to enforce real control and provides clarity in how to act. A poorly written one, on the other hand, fuels alert fatigue, confusion, or worse - blind spots.

This article explores how to write effective, low-noise, action-oriented security policies that align with how data is actually used.

What Is a Data Security Policy?

A data security policy is a set of rules that defines how your organization handles sensitive data. It specifies who can access what information, under what conditions, and what happens when those rules are violated. But here's the key difference: a good data security policy isn't just a document that sits in a compliance folder. It's an active control that detects risky behavior and triggers specific responses. While many organizations write policies that sound impressive but create endless alerts, effective policies target real risks and drive meaningful action. The goal isn't to monitor everything; it's to catch the activities that actually matter and respond quickly when they happen.

What Makes a Data Security Policy “Good”?

Before you begin drafting, ask yourself: what problem is this policy solving, and why does it matter?

A good data security policy isn’t just a technical rule sitting in a console; it’s a sensor for meaningful risk. It should define what activity you want to detect, under what conditions it should trigger, and who or what is in scope, so that it avoids firing on safe, expected scenarios.

Key characteristics of an effective policy:

- Clear intent: protects against a well-defined risk, not a vague category of threats.

- Actionable outcome: leads to a specific, repeatable response.

- Low noise: triggers only on unusual or risky patterns, not normal operations.

- Context-aware: accounts for business processes and expected data use.

💡 Tip: If you can’t explain in one sentence what you want to detect and what action should happen when it triggers, your policy isn’t ready for production.

Turning Risk Into Actionable Policy

Data security policies should always be grounded in real business risk, not just what’s technically possible to monitor. A strong policy targets scenarios that could genuinely harm the organization if left unchecked.

Questions to ask before creating a policy:

- What specific behavior poses a risk to our sensitive or regulated data?

- Who might trigger it, and why? Is it more likely to be malicious, accidental, or operational?

- What exceptions or edge cases should be allowed without generating noise?

- What systems will enforce it and who owns the response when it fires?

Instead of vague statements like “No access to PII”, write with precision:

“Block and alert on external sharing of customer PII from corporate cloud storage to any domain not on the approved partner list, unless pre-approved via the security exception process.”

Recommendations:

- Treat policies like code - start them in monitor-only mode.

- Test both sides: validate true positives (catching risky activity) and avoid false positives (triggering on normal behavior).

💡 Tip: The best policies are precise enough to detect real risks, but tested enough to avoid drowning teams in noise.
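To show what "treat policies like code" can look like, here is a minimal sketch that encodes the precise external-sharing statement above as a testable object with a monitor-only mode. The `SharePolicy` class, the event fields, and the `customer_pii` label are hypothetical; they stand in for whatever your enforcement system actually provides.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class SharePolicy:
    approved_domains: Set[str]
    exception_ids: Set[str] = field(default_factory=set)
    mode: str = "monitor"  # "monitor" = alert only; "enforce" = block and alert

    def evaluate(self, event: dict) -> List[str]:
        """Return the actions to take for one sharing event."""
        has_pii = "customer_pii" in event["data_classes"]
        external = event["recipient_domain"] not in self.approved_domains
        excepted = event.get("exception_id") in self.exception_ids
        if has_pii and external and not excepted:
            return ["alert"] if self.mode == "monitor" else ["block", "alert"]
        return []  # safe, expected scenario: stay quiet


policy = SharePolicy(approved_domains={"corp.example", "partner.example"},
                     exception_ids={"EXC-1"})
risky = {"data_classes": {"customer_pii"}, "recipient_domain": "unknown.example"}
normal = {"data_classes": {"customer_pii"}, "recipient_domain": "partner.example"}
```

With this shape, "testing both sides" becomes two assertions: `policy.evaluate(risky)` should return `["alert"]` in monitor mode, and `policy.evaluate(normal)` should return an empty list. Switching `mode` to `"enforce"` is then a one-line change made only after the policy has proven quiet.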

A Good Data Security Policy Should Drive Action

Policies are only valuable if they lead to a decision or action. Without a clear owner or remediation process, alerts quickly become noise. Every policy should generate an alert that leads to accountability.

Questions to ask:

- Who owns the alert?

- What should happen when it fires?

- How quickly should it be resolved?

💡 Tip: If no one is responsible for acting on a policy’s alerts, it’s not a policy — it’s background noise.

Don’t Ignore the Noise

When too many alerts fire, it’s tempting to dismiss them as an annoyance. But noisy policies are often a signal, not a mistake. Sometimes policies are too broad or poorly scoped. Other times, they point to deeper systemic risks, such as overly open sharing practices or misconfigured controls.

Recommendations:

- Investigate noisy policies before silencing them.

- Treat excess alerts as a clue to systemic risk.

💡 Tip: A noisy policy may be exposing the exact weakness you most need to fix.

Know When to Adjust or Retire a Policy

Policies must evolve as your organization, tools, and data change. A rule that made sense last year might be irrelevant or counterproductive today.

Recommendations:

- Continuously align policies with evolving risks.

- Track key metrics: how often each policy triggers, the severity of its alerts, and the response actions taken.

- Optimize response paths so alerts reach the right owners quickly.

- Schedule quarterly or biannual reviews with both security and business stakeholders.

💡 Tip: The only thing worse than no policy is a stale one that everyone ignores.

Why Smart Policies Matter for Regulated Data

Data security policies aren’t just an internal safeguard; they are how compliance is enforced in practice. Regulations like GDPR, HIPAA, and PCI DSS require demonstrable control over sensitive data.

Poorly written policies generate alert fatigue, making it harder to detect real violations. Well-crafted ones reduce the risk of noncompliance, streamline audits, and improve breach response.

Recommendations:

- Map each policy directly to a specific regulatory requirement.

- Retire rules that create noise without reducing actual risk.

💡 Tip: If a policy doesn’t map to a regulation or a real risk, it’s adding effort without adding value.

Making Policy Creation Simple, Powerful, and Built for Results

An effective solution for policy creation should make it easy to get started, provide the flexibility to adapt to your unique environment, and give you the deep data context you need to make policies that actually work. It should streamline the process so you can move quickly without sacrificing control, compliance, or clarity.

Sentra is that solution. By combining intuitive policy building with deep data context, Sentra simplifies and strengthens the entire lifecycle of policy creation.

With Sentra, you can:

- Start fast with out-of-the-box, low-noise controls.

- Create custom policies without complexity.

- Leverage real-time knowledge of where sensitive data lives and who has access to it.

- Continuously tune for low noise with performance metrics.

- Understand which regulations you can adhere to.

💡 Tip: The true value of a policy isn’t how often it triggers, it’s whether it consistently drives the right response.

Good Policies Start with Good Visibility

The best data security policies are written by teams who know exactly where sensitive data lives, how it moves, who can access it, and what creates risk. Without that visibility, policy writing becomes guesswork. With it, enforcement becomes simple, effective, and sustainable.

At Sentra, we believe policy creation should be driven by real data, not assumptions. If you’re ready to move from reactive alerts to meaningful control.
