How to Write an Effective Data Security Policy

Introduction: Why Writing Good Policies Matters

In modern cloud and AI-driven environments, having security policies in place is no longer enough. The quality of those policies directly shapes your ability to prevent data exposure, reduce noise, and drive meaningful response. A well-written policy enforces real control and makes clear how to act. A poorly written one fuels alert fatigue, confusion, or, worse, blind spots.

This article explores how to write effective, low-noise, action-oriented security policies that align with how data is actually used.

What Is a Data Security Policy?

A data security policy is a set of rules that defines how your organization handles sensitive data. It specifies who can access what information, under what conditions, and what happens when those rules are violated. But here's the key difference: a good data security policy isn't just a document that sits in a compliance folder. It's an active control that detects risky behavior and triggers specific responses. Many organizations write policies that sound impressive but create endless alerts. Effective policies target real risks and drive meaningful action. The goal isn't to monitor everything; it's to catch the activities that actually matter and respond quickly when they happen.

What Makes a Data Security Policy “Good”?

Before you begin drafting, ask yourself: what problem is this policy solving, and why does it matter?

A good data security policy isn’t just a technical rule sitting in a console; it’s a sensor for meaningful risk. It should define what activity you want to detect, under what conditions it should trigger, and who or what is in scope, so that it does not fire on safe, expected scenarios.

Key characteristics of an effective policy:

- Clear intent: protects against a well-defined risk, not a vague category of threats.

- Actionable outcome: leads to a specific, repeatable response.

- Low noise: triggers only on unusual or risky patterns, not normal operations.

- Context-aware: accounts for business processes and expected data use.

💡 Tip: If you can’t explain in one sentence what you want to detect and what action should happen when it triggers, your policy isn’t ready for production.

Turning Risk Into Actionable Policy

Data security policies should always be grounded in real business risk, not just what’s technically possible to monitor. A strong policy targets scenarios that could genuinely harm the organization if left unchecked.

Questions to ask before creating a policy:

- What specific behavior poses a risk to our sensitive or regulated data?

- Who might trigger it, and why? Is it more likely to be malicious, accidental, or operational?

- What exceptions or edge cases should be allowed without generating noise?

- What systems will enforce it and who owns the response when it fires?

Instead of vague statements like “No access to PII”, write with precision:

“Block and alert on external sharing of customer PII from corporate cloud storage to any domain not on the approved partner list, unless pre-approved via the security exception process.”
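
A precise statement like this translates almost clause by clause into a rule. The sketch below is only an illustration of that translation, not any product's policy syntax: the `ShareEvent` fields, the source and label names, the partner domain, and the exception ID are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical values for illustration only.
APPROVED_PARTNER_DOMAINS = {"partner.example.com"}
APPROVED_EXCEPTION_IDS = {"EXC-1001"}


@dataclass(frozen=True)
class ShareEvent:
    """A sharing event as an assumed enforcement system might report it."""
    source: str                  # where the data lives, e.g. "corporate_cloud_storage"
    data_classes: frozenset      # classification labels found in the shared object
    recipient_domain: str
    is_external: bool
    exception_id: Optional[str] = None


def evaluate(event: ShareEvent) -> str:
    """Return "block_and_alert" when the policy matches, otherwise "allow"."""
    # Scope: external sharing of customer PII from corporate cloud storage.
    in_scope = (
        event.source == "corporate_cloud_storage"
        and "customer_pii" in event.data_classes
        and event.is_external
    )
    if not in_scope:
        return "allow"
    # Allowed case 1: the recipient is on the approved partner list.
    if event.recipient_domain.lower() in APPROVED_PARTNER_DOMAINS:
        return "allow"
    # Allowed case 2: the share was pre-approved via the exception process.
    if event.exception_id in APPROVED_EXCEPTION_IDS:
        return "allow"
    return "block_and_alert"
```

Each clause of the sentence maps to one condition, which makes it easy to see what the vague version ("No access to PII") leaves undefined: scope, allowed recipients, and exceptions.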

Recommendations:

- Treat policies like code: start them in monitor-only mode and move to enforcement once they behave as expected.

- Test both sides: confirm that the policy catches risky activity (true positives) and that it stays silent on normal behavior (no false positives).
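
One way to picture these two recommendations is a rule that can run in either mode, plus a test for each side. This is a minimal sketch under assumed names: the event keys (`external`, `labels`), the `Mode` enum, and the returned action strings are hypothetical, not a real platform's API.

```python
from enum import Enum


class Mode(Enum):
    MONITOR = "monitor"   # record matches but take no enforcement action
    ENFORCE = "enforce"   # block matching activity


def matches(event: dict) -> bool:
    """Hypothetical rule: an external share of an object labeled customer PII."""
    return bool(event.get("external", False)) and "customer_pii" in event.get("labels", ())


def apply_policy(event: dict, mode: Mode) -> str:
    """Return the action taken for an event under the given mode."""
    if not matches(event):
        return "allow"
    return "block" if mode is Mode.ENFORCE else "log_only"


def test_true_positive():
    # Risky activity must match in both modes; only the action differs.
    event = {"external": True, "labels": ["customer_pii"]}
    assert apply_policy(event, Mode.MONITOR) == "log_only"
    assert apply_policy(event, Mode.ENFORCE) == "block"


def test_no_false_positive_on_normal_sharing():
    # Normal behavior must never trigger, even when enforcing.
    internal = {"external": False, "labels": ["customer_pii"]}
    public_doc = {"external": True, "labels": ["marketing"]}
    assert apply_policy(internal, Mode.ENFORCE) == "allow"
    assert apply_policy(public_doc, Mode.ENFORCE) == "allow"
```

Running the policy in `Mode.MONITOR` first shows what it would have blocked without disrupting anyone, and the two tests keep both failure modes visible as the rule is tuned.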

💡 Tip: The best policies are precise enough to detect real risks, but tested enough to avoid drowning teams in noise.

A Good Data Security Policy Should Drive Action

Policies are only valuable if they lead to a decision or action. Without a clear owner or remediation process, alerts quickly become noise. Every policy should generate an alert that leads to accountability.

Questions to ask:

- Who owns the alert?

- What should happen when it fires?

- How quickly should it be resolved?
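
The answers to these three questions can be recorded next to the policy itself so that an alert without an owner cannot be routed at all. The following sketch assumes a hypothetical policy ID, team name, action text, and four-hour resolution target.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class ResponsePlan:
    owner: str                  # who owns the alert
    action: str                 # what should happen when it fires
    resolve_within: timedelta   # how quickly it should be resolved


# Hypothetical policy ID and plan, for illustration only.
RESPONSE_PLANS = {
    "external-pii-share": ResponsePlan(
        owner="data-security-oncall",
        action="Revoke the share link and confirm with the file owner",
        resolve_within=timedelta(hours=4),
    ),
}


def route_alert(policy_id: str, fired_at: datetime) -> dict:
    """Attach owner, action, and due time to an alert, or fail loudly."""
    plan = RESPONSE_PLANS.get(policy_id)
    if plan is None:
        # A policy with no response plan is treated as a configuration error.
        raise LookupError(f"policy {policy_id!r} has no owner or response plan")
    return {
        "owner": plan.owner,
        "action": plan.action,
        "due": fired_at + plan.resolve_within,
    }
```

Failing on a missing plan is a deliberate design choice in this sketch: it surfaces unowned policies at routing time instead of letting their alerts pile up unread.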

💡 Tip: If no one is responsible for acting on a policy’s alerts, it’s not a policy; it’s background noise.

Don’t Ignore the Noise

When too many alerts fire, it’s tempting to dismiss them as an annoyance. But noisy policies are often a signal, not a mistake. Sometimes policies are too broad or poorly scoped. Other times, they point to deeper systemic risks, such as overly open sharing practices or misconfigured controls.

Recommendations:

- Investigate noisy policies before silencing them.

- Treat excess alerts as a clue to systemic risk.

💡 Tip: A noisy policy may be exposing the exact weakness you most need to fix.

Know When to Adjust or Retire a Policy

Policies must evolve as your organization, tools, and data change. A rule that made sense last year might be irrelevant or counterproductive today.

Recommendations:

- Continuously align policies with evolving risks.

- Track key metrics for each policy: how often it triggers, the severity of its alerts, and the response actions taken.

- Optimize response paths so alerts reach the right owners quickly.

- Schedule quarterly or biannual reviews with both security and business stakeholders.
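
The metrics named above can be computed from an export of past alerts. This is a rough sketch that assumes each alert is a dict with hypothetical `policy`, `severity`, and `outcome` keys; the 0.8 dismissal threshold is an arbitrary illustration, not a recommended value.

```python
from collections import Counter, defaultdict


def summarize(alerts):
    """Count triggers, severities, and response outcomes per policy."""
    summary = defaultdict(
        lambda: {"triggers": 0, "severity": Counter(), "outcomes": Counter()}
    )
    for alert in alerts:
        entry = summary[alert["policy"]]
        entry["triggers"] += 1
        entry["severity"][alert["severity"]] += 1
        entry["outcomes"][alert["outcome"]] += 1
    return dict(summary)


def review_candidates(summary, max_dismissed_ratio=0.8):
    """List policies whose alerts are mostly dismissed without action."""
    return sorted(
        policy
        for policy, entry in summary.items()
        if entry["outcomes"]["dismissed"] / entry["triggers"] > max_dismissed_ratio
    )
```

A policy that appears in `review_candidates` is not automatically wrong. It is a prompt to bring to the next review and decide whether to tune it, rescope it, or retire it.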

💡 Tip: The only thing worse than no policy is a stale one that everyone ignores.

Why Smart Policies Matter for Regulated Data

Data security policies aren’t just an internal safeguard; they are how compliance is enforced in practice. Regulations like GDPR, HIPAA, and PCI DSS require demonstrable control over sensitive data.

Poorly written policies generate alert fatigue, making it harder to detect real violations. Well-crafted ones reduce the risk of noncompliance, streamline audits, and improve breach response.

Recommendations:

- Map each policy directly to a specific regulatory requirement or a documented risk.

- Retire rules that create noise without reducing actual risk.
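
A simple inventory makes this check mechanical. In the sketch below the policy names are hypothetical and the regulation references are illustrative placeholders chosen for the example, not a verified mapping of any requirement.

```python
# Hypothetical policy inventory: each policy lists the regulatory requirements
# it supports and, optionally, the business risk it addresses.
POLICY_MAP = {
    "external-pii-share": {
        "regulations": ["GDPR Art. 32"],
        "risk": "customer data exposure",
    },
    "cardholder-data-unencrypted": {
        "regulations": ["PCI DSS Req. 3"],
        "risk": None,
    },
    "legacy-ftp-watch": {
        "regulations": [],
        "risk": None,
    },
}


def unjustified(policy_map):
    """Return policies that map to neither a regulation nor a stated risk."""
    return sorted(
        name
        for name, justification in policy_map.items()
        if not justification["regulations"] and not justification["risk"]
    )
```

With this inventory, `unjustified(POLICY_MAP)` flags only `legacy-ftp-watch`, which would make it a candidate for retirement or for a clearer statement of the risk it covers.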

💡 Tip: If a policy doesn’t map to a regulation or a real risk, it’s adding effort without adding value.

Making Policy Creation Simple, Powerful, and Built for Results

An effective solution for policy creation should make it easy to get started, provide the flexibility to adapt to your unique environment, and give you the deep data context you need to make policies that actually work. It should streamline the process so you can move quickly without sacrificing control, compliance, or clarity.

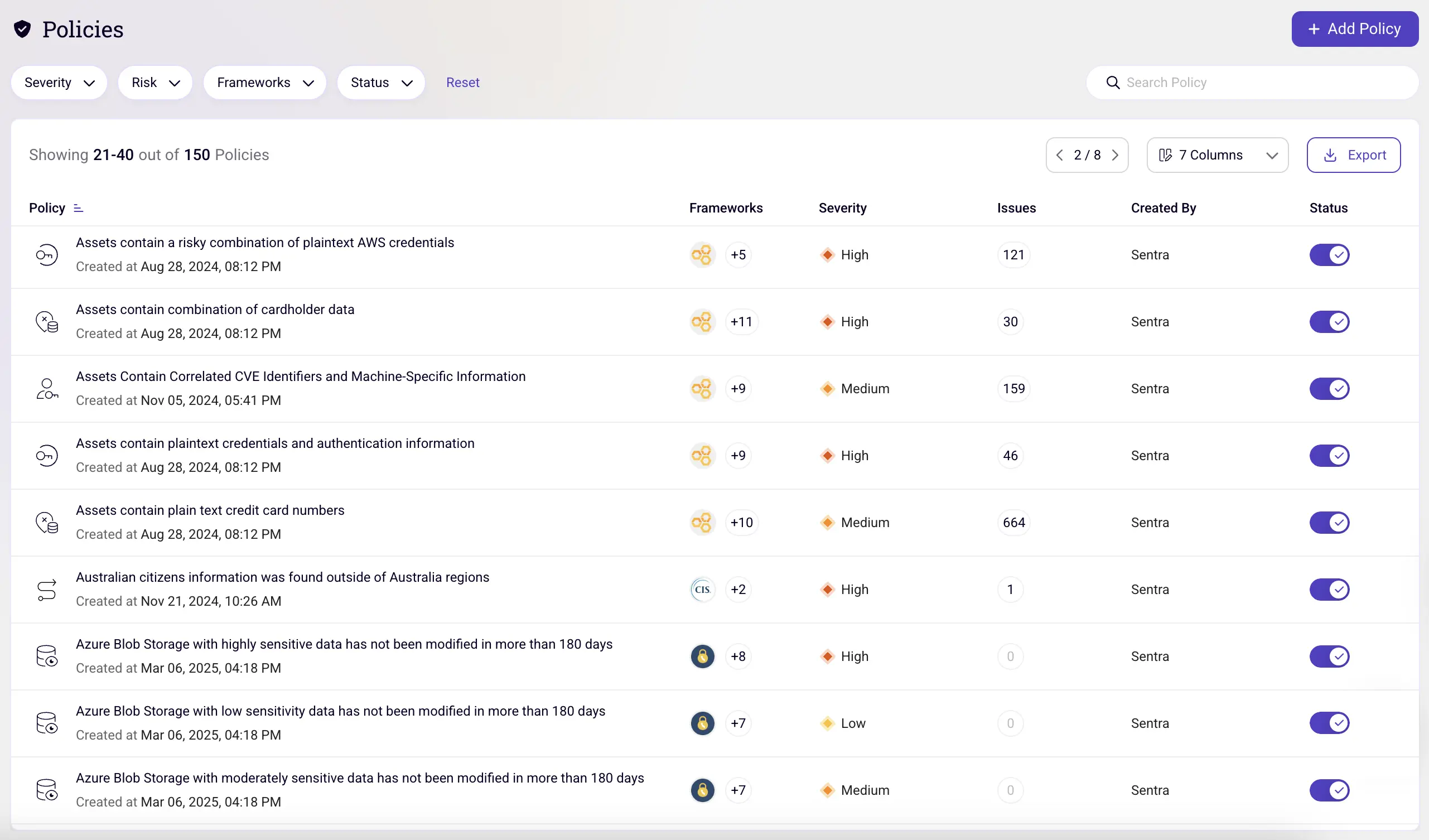

Sentra is that solution. By combining intuitive policy building with deep data context, Sentra simplifies and strengthens the entire lifecycle of policy creation.

With Sentra, you can:

- Start fast with out-of-the-box, low-noise controls.

- Create custom policies without complexity.

- Leverage real-time knowledge of where sensitive data lives and who has access to it.

- Continuously tune for low noise with performance metrics.

- Understand which regulations your policies help you adhere to.

💡 Tip: The true value of a policy isn’t how often it triggers; it’s whether it consistently drives the right response.

Good Policies Start with Good Visibility

The best data security policies are written by teams who know exactly where sensitive data lives, how it moves, who can access it, and what creates risk. Without that visibility, policy writing becomes guesswork. With it, enforcement becomes simple, effective, and sustainable.

At Sentra, we believe policy creation should be driven by real data, not assumptions. That is how teams move from reactive alerts to meaningful control.
